LLaVA

source link: https://llava-vl.github.io/


Visual Instruction Tuning

LLaVA: Large Language and Vision Assistant

LLaVA is a novel, end-to-end trained large multimodal model that combines a vision encoder with Vicuna for general-purpose visual and language understanding. It achieves impressive chat capabilities in the spirit of the multimodal GPT-4 and sets a new state-of-the-art accuracy on Science QA.

Abstract

Instruction tuning large language models (LLMs) using machine-generated instruction-following data has improved zero-shot capabilities on new tasks in the language domain, but the idea is less explored in the multimodal field.

- Multimodal Instruct Data. We present the first attempt to use language-only GPT-4 to generate multimodal language-image instruction-following data.

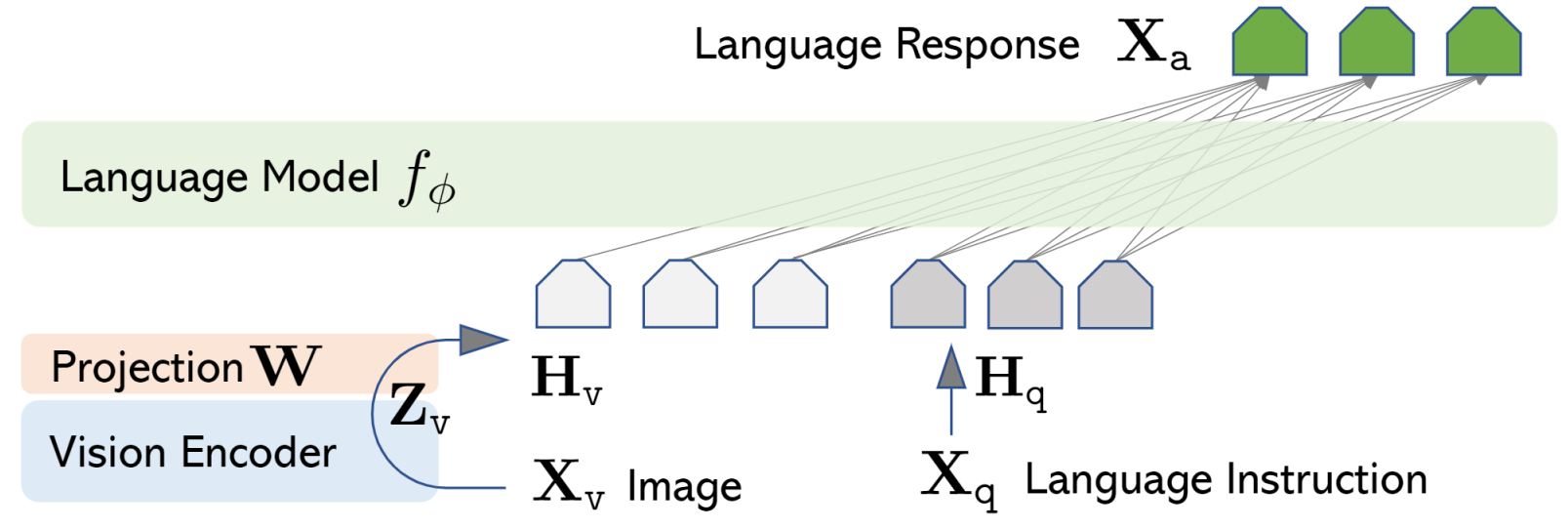

- LLaVA Model. We introduce LLaVA (Large Language-and-Vision Assistant), an end-to-end trained large multimodal model that connects a vision encoder and LLM for general-purpose visual and language understanding.

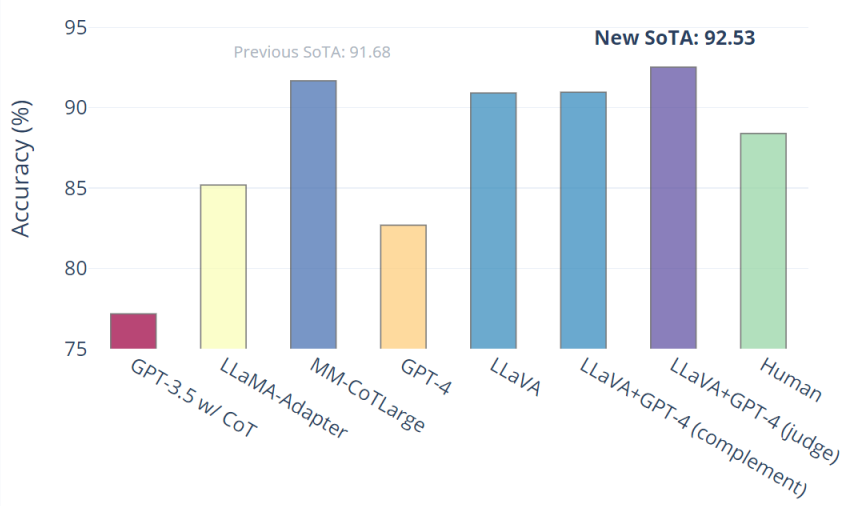

- Performance. Our early experiments show that LLaVA demonstrates impressive multimodal chat abilities, sometimes exhibiting the behaviors of multimodal GPT-4 on unseen images/instructions, and yields an 85.1% relative score compared with GPT-4 on a synthetic multimodal instruction-following dataset. When fine-tuned on Science QA, the synergy of LLaVA and GPT-4 achieves a new state-of-the-art accuracy of 92.53%.

- Open-source. We make GPT-4 generated visual instruction tuning data, our model and code base publicly available.

Multimodal Instruction-Following Data

Based on the COCO dataset, we interact with language-only GPT-4 and collect 158K unique language-image instruction-following samples in total: 58K in conversations, 23K in detailed description, and 77K in complex reasoning. Please check out "LLaVA-Instruct-150K" on [HuggingFace Dataset].
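As a quick sanity check on the breakdown above, the three subsets do sum to the stated total. The short Python sketch below only redoes that arithmetic and reports each subset's share; the dictionary keys are illustrative labels, not field names from the released dataset.

```python
# Sample counts per instruction type, in thousands, as quoted in the text.
counts_k = {
    "conversation": 58,
    "detailed_description": 23,
    "complex_reasoning": 77,
}

total_k = sum(counts_k.values())

# Share of each instruction type in the full collection, as a percentage.
shares = {name: round(100 * n / total_k, 1) for name, n in counts_k.items()}

if __name__ == "__main__":
    print(f"total: {total_k}K")
    for name, pct in shares.items():
        print(f"{name}: {pct}%")
```

Complex reasoning makes up roughly half of the collection, with conversations a little over a third.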

LLaVA: Large Language-and-Vision Assistant

LLaVA connects the pre-trained CLIP ViT-L/14 visual encoder and the large language model LLaMA using a simple projection matrix. We consider a two-stage instruction-tuning procedure:

- Stage 1: Pre-training for Feature Alignment. Only the projection matrix is updated, based on a subset of CC3M.

- Stage 2: Fine-tuning End-to-End. Both the projection matrix and the LLM are updated, for two different use scenarios:

  - Visual Chat: LLaVA is fine-tuned on our generated multimodal instruction-following data for daily user-oriented applications.

  - Science QA: LLaVA is fine-tuned on this multimodal reasoning dataset for the science domain.
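To make the role of the projection matrix and the two training stages concrete, here is a minimal pure-Python sketch. It is not the authors' implementation. The dimensions are toy values, the random matrices stand in for real encoder outputs and token embeddings, and placing visual tokens before text tokens is an assumption made for illustration. The names `project`, `TRAINABLE`, and `is_trainable` are hypothetical.

```python
import random

def make_matrix(rows, cols, rng):
    """Return a rows x cols matrix of small random values (toy stand-in data)."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]

def project(visual_features, W):
    """Map each visual feature vector (length d_v) to the LLM embedding size (d_l).

    visual_features: list of N vectors of length d_v
    W:               d_v x d_l projection matrix
    """
    d_l = len(W[0])
    return [
        [sum(z[i] * W[i][j] for i in range(len(z))) for j in range(d_l)]
        for z in visual_features
    ]

# Parameter groups that are updated in each stage, as described in the text.
# The vision encoder appears in neither set, since the text lists only the
# projection matrix and the LLM as being updated.
TRAINABLE = {
    "stage1_feature_alignment": {"projection"},
    "stage2_end_to_end": {"projection", "llm"},
}

def is_trainable(stage, group):
    """Return True if the given parameter group is updated in the given stage."""
    return group in TRAINABLE[stage]

rng = random.Random(0)
d_v, d_l = 8, 16          # toy sizes; real encoder and LLM widths are much larger
n_patches, n_text = 4, 3  # toy sequence lengths

Z = make_matrix(n_patches, d_v, rng)      # stand-in for vision-encoder output
W = make_matrix(d_v, d_l, rng)            # the projection matrix
text_emb = make_matrix(n_text, d_l, rng)  # stand-in for LLM token embeddings

H = project(Z, W)         # visual tokens, now in the LLM embedding space
llm_input = H + text_emb  # assumed ordering: visual tokens, then text tokens
```

After projection, every visual token has the same width as a text token embedding, so the language model can consume both in one sequence. The `TRAINABLE` table shows the only difference between the stages in this sketch: which parameter groups are allowed to change.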

Performance

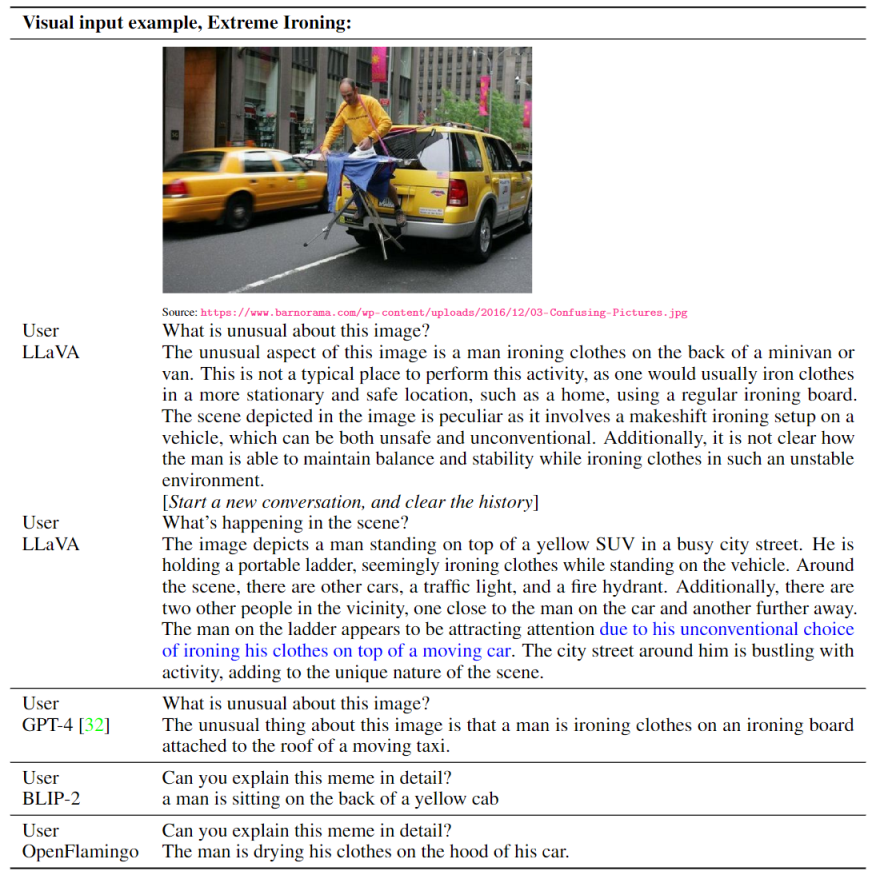

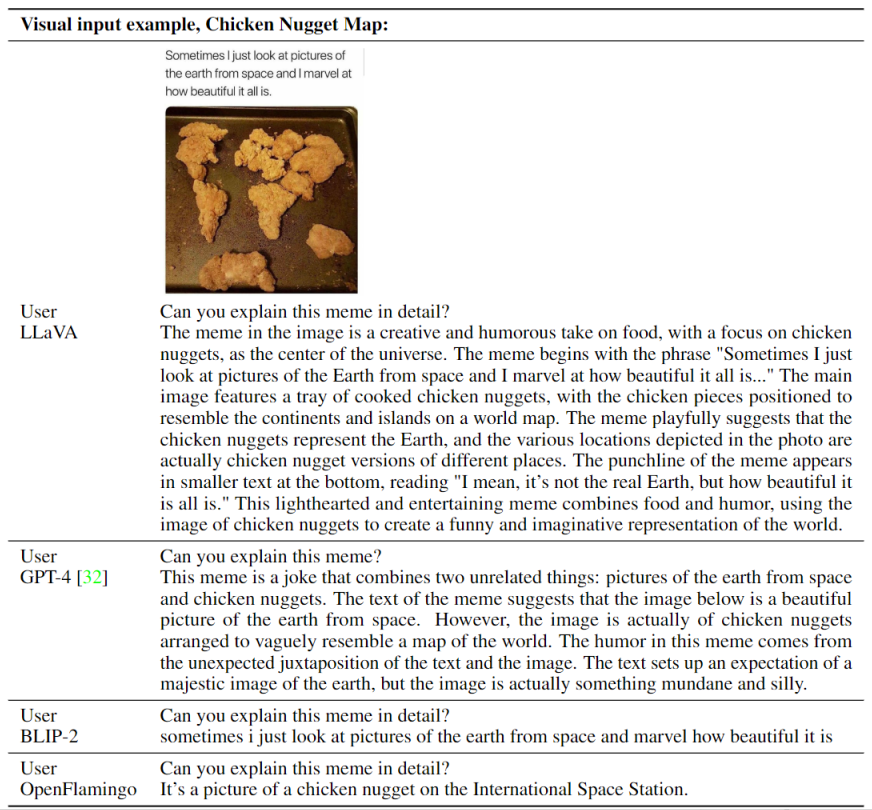

Visual Chat: Towards building multimodal GPT-4 level chatbot

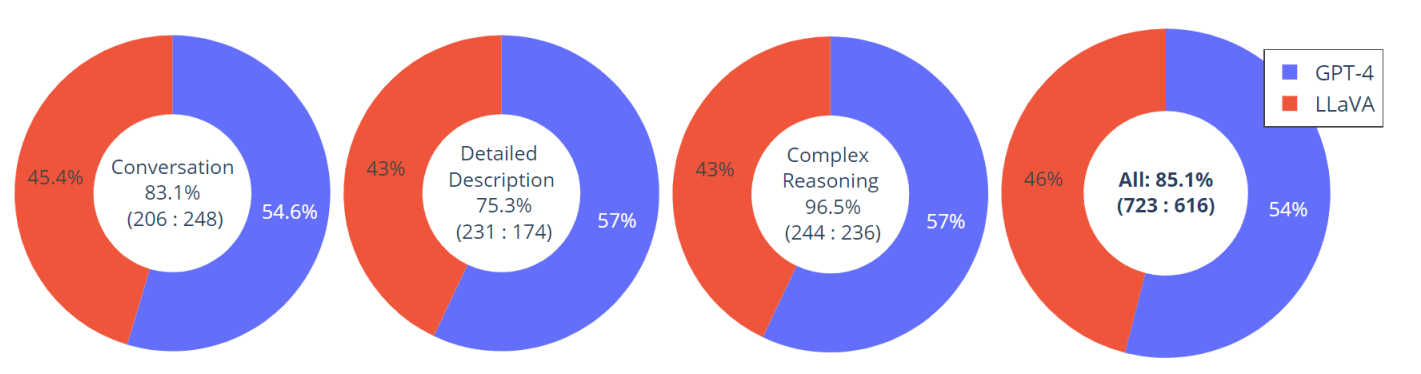

An evaluation dataset with 30 unseen images is constructed: each image is associated with three types of instructions: conversation, detailed description and complex reasoning. This leads to 90 new language-image instructions, on which we test LLaVA and GPT-4, and use GPT-4 to rate their responses on a scale from 1 to 10. The summed score and relative score per type are reported. Overall, LLaVA achieves an 85.1% relative score compared with GPT-4, indicating the effectiveness of the proposed self-instruct method in multimodal settings.
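One way to read the relative score is as LLaVA's summed rating divided by GPT-4's summed rating on the same instructions, expressed as a percentage. The Python sketch below shows that bookkeeping under this assumption. The ratings in it are made-up placeholders, not results from the evaluation, and the function name `relative_scores` is hypothetical.

```python
from collections import defaultdict

def relative_scores(ratings):
    """ratings: iterable of (instruction_type, gpt4_score, llava_score), scores in 1..10.

    Returns {type: percent} plus an "overall" entry, each computed as
    100 * (sum of LLaVA scores) / (sum of GPT-4 scores).
    """
    sums = defaultdict(lambda: [0, 0])  # type -> [gpt4_sum, llava_sum]
    for kind, ref, cand in ratings:
        sums[kind][0] += ref
        sums[kind][1] += cand
    result = {k: 100 * c / r for k, (r, c) in sums.items()}
    total_ref = sum(r for r, _ in sums.values())
    total_cand = sum(c for _, c in sums.values())
    result["overall"] = 100 * total_cand / total_ref
    return result

# Placeholder ratings for illustration only (two images, three types each).
example = [
    ("conversation", 9, 8),
    ("conversation", 8, 7),
    ("detailed_description", 8, 6),
    ("detailed_description", 9, 7),
    ("complex_reasoning", 8, 8),
    ("complex_reasoning", 10, 9),
]

scores = relative_scores(example)
```

With the full evaluation set, `ratings` would hold 90 entries (30 images times three instruction types), and the `"overall"` entry would correspond to the single headline percentage.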

Examples on Visual Instruction Following

Visual Reasoning on two examples from OpenAI GPT-4 Technical Report

Optical character recognition (OCR)

User

Do you know who drew this painting?

LLaVA

The painting depicts a woman, commonly believed to be Mona Lisa, the famous artwork by Leonardo da Vinci. It is a portrait painting that showcases the woman's enigmatic smile and has become one of the most famous and iconic art pieces in the world. The original work is displayed in the Louvre Museum in Paris, and it is known for its intricate details, use of oil paint, and the artist's innovative techniques that contributed to its enduring appeal and mystery.

BibTeX

@article{liu2023llava,
  author = {Liu, Haotian and Li, Chunyuan and Wu, Qingyang and Lee, Yong Jae},
  title = {Visual Instruction Tuning},
  publisher = {arXiv:2304.08485},
  year = {2023}
}

Acknowledgement

This website is adapted from Nerfies, licensed under a Creative Commons Attribution-ShareAlike 4.0 International License. We thank the LLaMA team for giving us access to their models, and open-source projects, including Alpaca and Vicuna.

Usage and License Notices: The data, code and checkpoint are intended and licensed for research use only. They are also restricted to uses that follow the license agreements of CLIP, LLaMA, Vicuna and GPT-4. The dataset is CC BY NC 4.0 (allowing only non-commercial use), and models trained using the dataset should not be used outside of research purposes.

Related Links: [REACT] [GLIGEN] [Computer Vision in the Wild (CVinW)] [Instruction Tuning with GPT-4]

Science QA: New SoTA with the synergy of LLaVA with GPT-4
