Deep Learning: Residual Networks - 故y

source link: https://www.cnblogs.com/kk-style/p/16988417.html


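Deep Learning: Residual Networks

Link: https://pan.baidu.com/s/1mTqblxzWcYIRF7_kk8MQQA

Extraction code: 7x6w

Many thanks for the downloadable materials to: [Chinese] [Andrew Ng programming assignment] Course 4 - Convolutional Neural Networks - Week 2 assignment, from 何宽's blog on CSDN

[Python version used by the author: 3.6.8]

For this assignment, you will use Keras.

Before getting to the problem, run the cell below to load the required packages.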

import tensorflow as tf
import numpy as np
import scipy.misc
from tensorflow.keras.applications.resnet_v2 import ResNet50V2
from tensorflow.keras.preprocessing import image
from tensorflow.keras.applications.resnet_v2 import preprocess_input, decode_predictions
from tensorflow.keras import layers
from tensorflow.keras.layers import Input, Add, Dense, Activation, ZeroPadding2D, BatchNormalization, Flatten, Conv2D, AveragePooling2D, MaxPooling2D, GlobalMaxPooling2D
from tensorflow.keras.models import Model, load_model
from resnets_utils import *
from tensorflow.keras.initializers import random_uniform, glorot_uniform, constant, identity
from tensorflow.python.framework.ops import EagerTensor
from matplotlib.pyplot import imshow
from test_utils import summary, comparator
import public_tests

- 非常深的神经网络的问题

- 非常深度网络的主要好处是它可以表示非常复杂的功能。它还可以学习许多不同抽象级别的特征,从边缘(在较浅的层,更接近输入)到非常复杂的特征(在更深的层,更接近输出)。

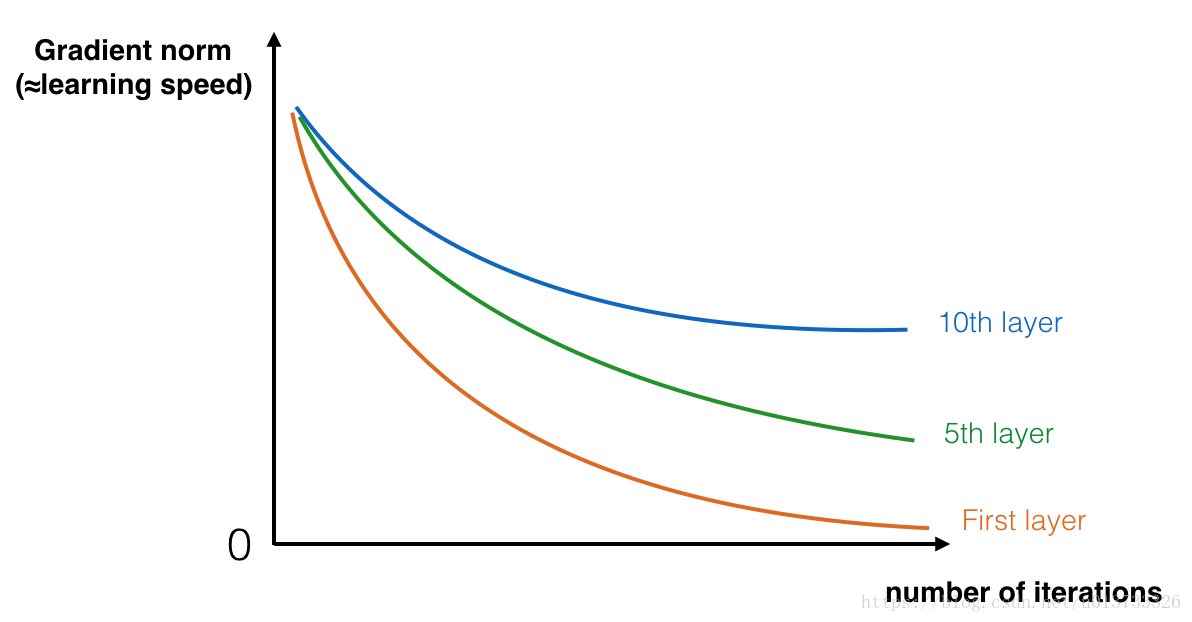

- 但是,使用更深的网络并不总是有帮助。训练梯度的一个巨大障碍是梯度消失:非常深的网络通常有一个梯度信号,很快就会归零,从而使梯度下降变得非常慢。

- 更具体地说,在梯度下降期间,当您从最后一层反向传播回第一层时,您将乘以每一步的权重矩阵,因此梯度可以呈指数级迅速减小到零(或者在极少数情况下,指数级快速增长并“爆炸”,因为获得非常大的值)。

- 因此,在训练过程中,您可能会看到随着训练的进行,较浅层的梯度的大小(或范数)会非常迅速地减小到零,如下所示:
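The exponential shrinkage described in these points can be illustrated with a small NumPy sketch. This is not part of the original assignment: the depth, the layer width and the weight scale are made-up values, and activation derivatives are ignored so that each backpropagation step is just a multiplication by a transposed weight matrix.

```python
import numpy as np

rng = np.random.default_rng(0)
depth, width = 50, 64  # hypothetical network size, chosen only for illustration

# Assumption: every layer has small random weights, scaled so that multiplying
# by the matrix shrinks a vector to roughly half its norm on average.
grad = rng.standard_normal(width)
norms = [np.linalg.norm(grad)]
for _ in range(depth):
    W = rng.standard_normal((width, width)) * (0.5 / np.sqrt(width))
    grad = W.T @ grad  # one backprop step through a linear layer
    norms.append(np.linalg.norm(grad))

for layer in (0, 10, 25, 50):
    print(f"{layer:2d} layers back: |grad| = {norms[layer]:.3e}")
```

With a larger weight scale (for example 2.0 instead of 0.5) the same loop shows the opposite failure mode, the exploding gradient.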

构建一个残差网络

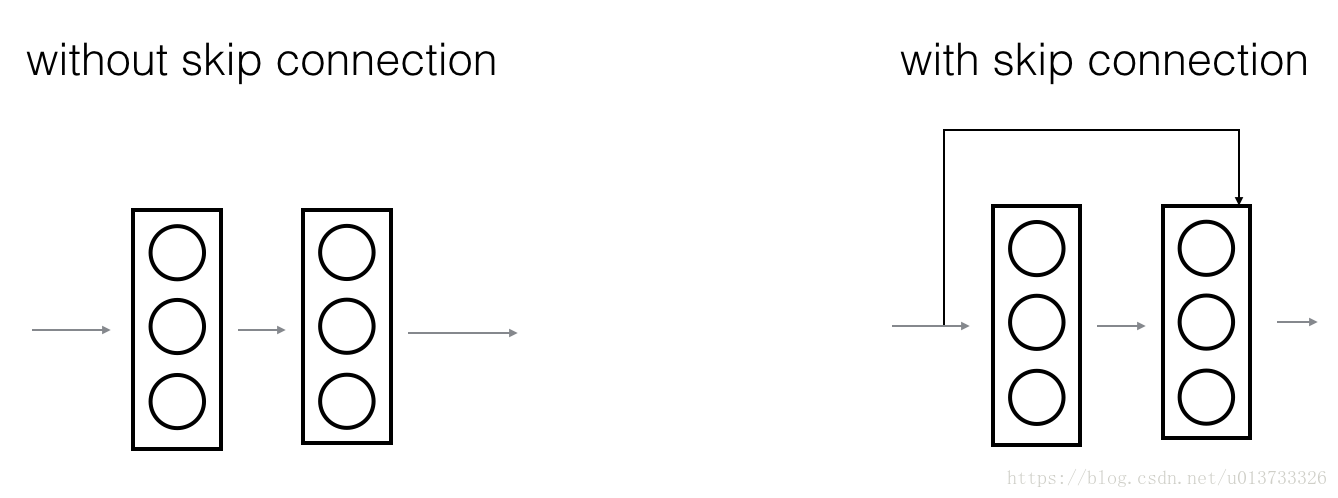

- 左图显示了通过网络的“主要路径”。右侧的图像将快捷方式添加到主路径。通过将这些 ResNet 块堆叠在一起,您可以形成一个非常深的网络。

- 讲座提到,使用带有快捷方式的 ResNet 块也使其中一个块学习恒等函数变得非常容易。这意味着您可以堆叠额外的 ResNet 块,而几乎没有损害训练集性能的风险。

- 在这一点上,还有一些证据表明,学习恒等函数的便利性解释了ResNets的卓越性能,甚至超过了跳过连接对梯度消失的帮助。

- ResNet 中使用两种主要类型的块,主要取决于输入/输出尺寸是相同还是不同。您将实现它们:“标识块”和“卷积块”。
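To see why a block with a shortcut learns the identity function so easily, consider the minimal NumPy sketch below. It uses a hypothetical two-layer fully connected residual block (not the convolutional blocks built later) and assumes the input is non-negative, as it would be after a previous ReLU. When all weights and biases in the main path are zero, the block simply passes its input through.

```python
import numpy as np

def relu(z):
    return np.maximum(z, 0.0)

def residual_block(a, W1, b1, W2, b2):
    """Two-layer fully connected residual block: relu(F(a) + a)."""
    h = relu(a @ W1 + b1)         # first layer of the main path
    return relu(h @ W2 + b2 + a)  # second layer plus the shortcut, then ReLU

rng = np.random.default_rng(0)
a = relu(rng.standard_normal((5, 8)))  # non-negative, like the output of a ReLU

zero_W, zero_b = np.zeros((8, 8)), np.zeros(8)
out = residual_block(a, zero_W, zero_b, zero_W, zero_b)
print(np.array_equal(out, a))  # True
```

A plain (non-residual) block with zero weights would output all zeros instead, so the identity is much harder for it to represent.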

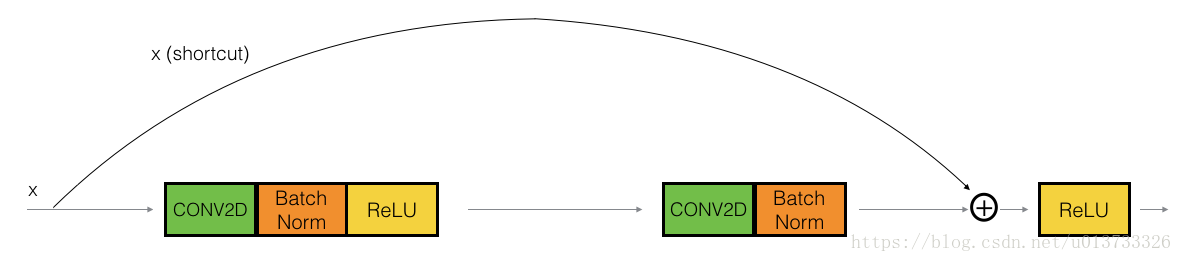

恒等块(Identity block)

恒等块是 ResNet 中使用的标准块,对应于输入激活(例如a[L])与输出激活(例如a[L+!])具有相同维度的情况。为了充实 ResNet 身份块中发生的不同步骤,下面是一个显示各个步骤的替代图表:

上面的路径是“捷径”。较低的路径是“主要路径”。在此图中,请注意每层中的 CONV2D 和 ReLU 步骤。为了加快训练速度,添加了 BatchNorm 步骤。不要担心这很难实现 - 你会看到BatchNorm只是Keras中的一行代码!

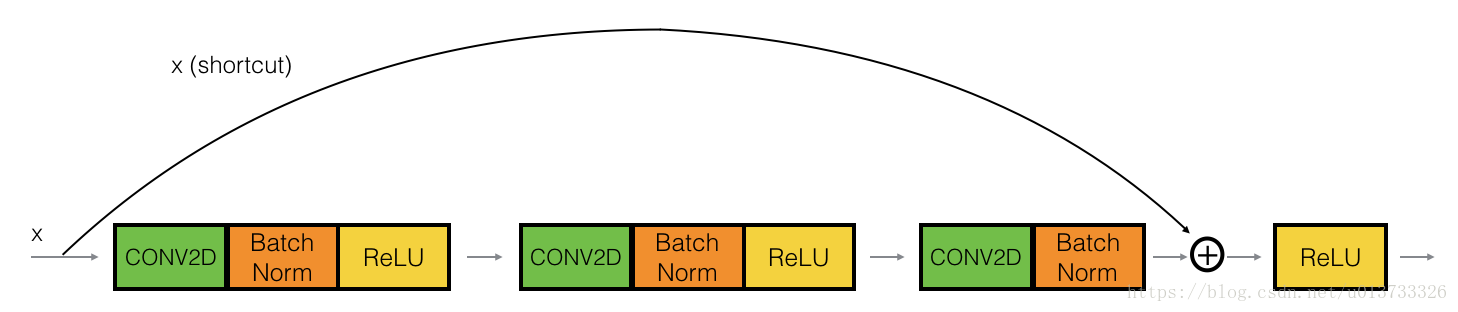

在本练习中,您将实际实现此标识块的一个功能稍微强大的版本,其中跳过连接“跳过”3 个隐藏层而不是 2 个层。它看起来像这样:

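First component of the main path:

- The first CONV2D has F1 filters of shape (1,1) and a stride of (1,1). Its padding is "valid". Use 0 as the seed for the random uniform initialization: kernel_initializer = initializer(seed=0).

- The first BatchNorm normalizes the "channels" axis.

- Then apply the ReLU activation function. This has no hyperparameters.

Second component of the main path:

- The second CONV2D has F2 filters of shape (f,f) and a stride of (1,1). Its padding is "same". Use 0 as the seed for the random uniform initialization: kernel_initializer = initializer(seed=0).

- The second BatchNorm normalizes the "channels" axis.

- Then apply the ReLU activation function. This has no hyperparameters.

Third component of the main path:

- The third CONV2D has F3 filters of shape (1,1) and a stride of (1,1). Its padding is "valid". Use 0 as the seed for the random uniform initialization: kernel_initializer = initializer(seed=0).

- The third BatchNorm normalizes the "channels" axis. Note that there is no ReLU activation function in this component.

Final step:

- The X_shortcut and the output from the 3rd layer X are added together.

- Hint: the syntax will look something like Add()([var1,var2])

- Then apply the ReLU activation function. This has no hyperparameters.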
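The padding and stride choices above are what keep the main path the same size as the shortcut, so that the final addition is valid. The sketch below checks this with plain arithmetic. The helper names are hypothetical; the size formulas are the usual ones for Keras-style "valid" and "same" padding.

```python
import math

def conv_output_size(n, f, s, padding):
    """Output size along one spatial dimension of a Keras-style Conv2D."""
    if padding == "same":
        return math.ceil(n / s)
    if padding == "valid":
        return (n - f) // s + 1
    raise ValueError(f"unknown padding: {padding}")

def identity_block_shape(shape, f, filters):
    """Trace (n_H, n_W, n_C) through the three CONV2D layers of the main path."""
    n_h, n_w, _ = shape
    _, _, f3 = filters
    # (kernel size, padding) for the three components; every stride is 1
    for kernel, padding in ((1, "valid"), (f, "same"), (1, "valid")):
        n_h = conv_output_size(n_h, kernel, 1, padding)
        n_w = conv_output_size(n_w, kernel, 1, padding)
    out = (n_h, n_w, f3)
    if out != tuple(shape):
        raise ValueError(f"cannot add main path {out} to shortcut {tuple(shape)}")
    return out

print(identity_block_shape((15, 15, 256), f=3, filters=[64, 64, 256]))  # (15, 15, 256)
```

Height and width are always preserved here, so the only way the addition can fail is when F3 differs from the number of input channels. That case is what the convolutional block is for.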

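Next, we implement the identity block of the residual network.

An initializer argument has been added to the function. This argument receives an initializer function like the ones included in the package tensorflow.keras.initializers, or any other custom initializer. By default, it is set to random_uniform.

Remember that these functions accept a seed argument that can be any value you want, but in this notebook it must be set to 0 for grading purposes.

Here is where you actually use the power of the Functional API to create a shortcut path: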

def identity_block(X, f, filters, training=True, initializer=random_uniform):
    """
    Implementation of the identity block as defined in Figure 4

    Arguments:
    X -- input tensor of shape (m, n_H_prev, n_W_prev, n_C_prev)
    f -- integer, specifying the shape of the middle CONV's window for the main path
    filters -- python list of integers, defining the number of filters in the CONV layers of the main path
    training -- True: behave in training mode
                False: behave in inference mode
    initializer -- to set up the initial weights of a layer. Equals to random uniform initializer

    Returns:
    X -- output of the identity block, tensor of shape (m, n_H, n_W, n_C)
    """

    # Retrieve Filters
    F1, F2, F3 = filters

    # Save the input value. You'll need this later to add back to the main path.
    X_shortcut = X
    cache = []

    # First component of main path
    X = Conv2D(filters = F1, kernel_size = 1, strides = (1,1), padding = 'valid', kernel_initializer = initializer(seed=0))(X)
    X = BatchNormalization(axis = 3)(X, training = training) # Default axis
    X = Activation('relu')(X)

    ### START CODE HERE
    ## Second component of main path (≈3 lines)
    X = Conv2D(filters = F2, kernel_size = f, strides = (1, 1), padding='same', kernel_initializer = initializer(seed=0))(X)
    X = BatchNormalization(axis = 3)(X, training=training)
    X = Activation('relu')(X)

    ## Third component of main path (≈2 lines)
    X = Conv2D(filters = F3, kernel_size = 1, strides = (1, 1), padding='valid', kernel_initializer = initializer(seed=0))(X)
    X = BatchNormalization(axis = 3)(X, training=training)

    ## Final step: Add shortcut value to main path, and pass it through a RELU activation (≈2 lines)
    X = Add()([X_shortcut, X])
    X = Activation('relu')(X)
    ### END CODE HERE

    return X

Let's test it:

np.random.seed(1)
X1 = np.ones((1, 4, 4, 3)) * -1
X2 = np.ones((1, 4, 4, 3)) * 1
X3 = np.ones((1, 4, 4, 3)) * 3

# Stack X1, X2, X3 in that order along the batch axis
X = np.concatenate((X1, X2, X3), axis = 0).astype(np.float32)

A3 = identity_block(X, f=2, filters=[4, 4, 3],
                    initializer=lambda seed=0:constant(value=1),
                    training=False)
print('\033[1mWith training=False\033[0m\n')
A3np = A3.numpy()
print(np.around(A3.numpy()[:,(0,-1),:,:].mean(axis = 3), 5))
resume = A3np[:,(0,-1),:,:].mean(axis = 3)
print(resume[1, 1, 0])

print('\n\033[1mWith training=True\033[0m\n')
np.random.seed(1)
A4 = identity_block(X, f=2, filters=[3, 3, 3],
                    initializer=lambda seed=0:constant(value=1),
                    training=True)
print(np.around(A4.numpy()[:,(0,-1),:,:].mean(axis = 3), 5))

public_tests.identity_block_test(identity_block)

With training=False

[[[  0.        0.        0.        0.     ]
  [  0.        0.        0.        0.     ]]

 [[192.71234 192.71234 192.71234  96.85617]
  [ 96.85617  96.85617  96.85617  48.92808]]

 [[578.1371  578.1371  578.1371  290.5685 ]
  [290.5685  290.5685  290.5685  146.78426]]]
96.85617

With training=True

[[[0.      0.      0.      0.     ]
  [0.      0.      0.      0.     ]]

 [[0.40739 0.40739 0.40739 0.40739]
  [0.40739 0.40739 0.40739 0.40739]]

 [[4.99991 4.99991 4.99991 3.25948]
  [3.25948 3.25948 3.25948 2.40739]]]
All tests passed!
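The training=False numbers can be reproduced by hand, which is a useful check on what the block computes. The sketch below is a plain-Python reconstruction, not the assignment's code. It assumes the Keras defaults for a freshly created BatchNormalization layer (gamma 1, beta 0, moving mean 0, moving variance 1, epsilon 1e-3), zero convolution biases, and that "same" padding for the 2x2 kernel pads only on the bottom and right, so windows on the last row or column cover fewer real pixels. The function names are made up for illustration.

```python
import math

EPS = 1e-3  # assumed BatchNormalization epsilon (Keras default)

def bn_inference(x, moving_mean=0.0, moving_var=1.0):
    """Inference-mode batch norm with gamma=1 and beta=0."""
    return (x - moving_mean) / math.sqrt(moving_var + EPS)

def relu(x):
    return max(x, 0.0)

def identity_block_value(x_in, window_positions, c_in=3, f1=4, f2=4):
    """Output at one location for a constant input and all-ones kernels.

    window_positions -- real (non-padded) pixels under the 2x2 window:
                        4 in the interior, 2 on the last row or column, 1 in the corner.
    """
    x = relu(bn_inference(c_in * x_in))                # 1x1 conv sums c_in channels
    x = relu(bn_inference(window_positions * f1 * x))  # 2x2 conv sums window x f1 channels
    x = bn_inference(f2 * x)                           # 1x1 conv sums f2 channels, no ReLU
    return relu(x + x_in)                              # add the shortcut, then ReLU

for x_in in (-1.0, 1.0, 3.0):
    row = [identity_block_value(x_in, p) for p in (4, 2, 1)]
    print(x_in, [round(v, 4) for v in row])
```

For the input of ones this gives about 192.7124, 96.8562 and 48.9281, matching the printed output up to float32 rounding. The input of -1 is zeroed by the first ReLU and the final ReLU clips the shortcut, which explains the rows of zeros. With training=True, BatchNorm normalizes with the statistics of the current batch instead, which is why those outputs are so different.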

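The ResNet "convolutional block" is the second block type. You can use this type of block when the input and output dimensions don't match up. The difference from the identity block is that there is a CONV2D layer in the shortcut path:

- The CONV2D layer in the shortcut path is used to resize the input to a different dimension, so that the dimensions match up in the final addition needed to add the shortcut value back to the main path. (This plays a similar role to the matrix discussed in lecture.)

- For example, to reduce the activation dimensions' height and width by a factor of 2, you can use a 1x1 convolution with a stride of 2.

- The CONV2D layer on the shortcut path does not use any non-linear activation function. Its main role is to just apply a (learned) linear function that reduces the dimension of the input, so that the dimensions match up for the later addition step.

- As in the previous exercise, the additional initializer argument is required for grading purposes, and it has been set by default to glorot_uniform.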
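A 1x1 convolution with stride 2 and no activation is easy to write out directly, which makes the "learned linear function" in the points above concrete. The following NumPy sketch (a hypothetical helper, with the bias omitted) keeps every second pixel and applies the same channel-mixing matrix at each remaining location.

```python
import numpy as np

def conv1x1(x, W, stride):
    """1x1 'valid' convolution without bias or activation.

    x -- array of shape (m, n_H, n_W, n_C_in)
    W -- array of shape (n_C_in, n_C_out), shared by all spatial locations
    """
    return x[:, ::stride, ::stride, :] @ W

rng = np.random.default_rng(0)
x = rng.standard_normal((3, 4, 4, 3))
W = rng.standard_normal((3, 6))

y = conv1x1(x, W, stride=2)
print(y.shape)  # (3, 2, 2, 6)
```

The shapes match the test further down, where a (3, 4, 4, 3) input with filters [2, 4, 6] and the default s = 2 produces a (3, 2, 2, 6) output. Because the operation is linear, doubling the input doubles the output.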

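First component of the main path:

- The first CONV2D has F1 filters of shape (1,1) and a stride of (s,s). Its padding is "valid". Use 0 as the glorot_uniform seed: kernel_initializer = initializer(seed=0).

- The first BatchNorm normalizes the "channels" axis.

- Then apply the ReLU activation function. This has no hyperparameters.

Second component of the main path:

- The second CONV2D has F2 filters of shape (f,f) and a stride of (1,1). Its padding is "same". Use 0 as the glorot_uniform seed: kernel_initializer = initializer(seed=0).

- The second BatchNorm normalizes the "channels" axis.

- Then apply the ReLU activation function. This has no hyperparameters.

Third component of the main path:

- The third CONV2D has F3 filters of shape (1,1) and a stride of (1,1). Its padding is "valid". Use 0 as the glorot_uniform seed: kernel_initializer = initializer(seed=0).

- The third BatchNorm normalizes the "channels" axis. Note that there is no ReLU activation function in this component.

Shortcut path:

- The CONV2D has F3 filters of shape (1,1) and a stride of (s,s). Its padding is "valid". Use 0 as the glorot_uniform seed: kernel_initializer = initializer(seed=0).

- The BatchNorm normalizes the "channels" axis.

Final step:

- The shortcut and the main path values are added together.

- Then apply the ReLU activation function. This has no hyperparameters.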

def convolutional_block(X, f, filters, s = 2, training=True, initializer=glorot_uniform):
    """
    Implementation of the convolutional block as defined in Figure 4

    Arguments:
    X -- input tensor of shape (m, n_H_prev, n_W_prev, n_C_prev)
    f -- integer, specifying the shape of the middle CONV's window for the main path
    filters -- python list of integers, defining the number of filters in the CONV layers of the main path
    s -- integer, specifying the stride to be used
    training -- True: behave in training mode
                False: behave in inference mode
    initializer -- to set up the initial weights of a layer. Equals to Glorot uniform initializer,
                   also called Xavier uniform initializer.

    Returns:
    X -- output of the convolutional block, tensor of shape (m, n_H, n_W, n_C)
    """

    # Retrieve Filters
    F1, F2, F3 = filters

    # Save the input value
    X_shortcut = X

    ##### MAIN PATH #####

    # First component of main path glorot_uniform(seed=0)
    X = Conv2D(filters = F1, kernel_size = 1, strides = (s, s), padding='valid', kernel_initializer = initializer(seed=0))(X)
    X = BatchNormalization(axis = 3)(X, training=training)
    X = Activation('relu')(X)

    ### START CODE HERE
    ## Second component of main path (≈3 lines)
    X = Conv2D(filters = F2, kernel_size = f, strides = (1, 1), padding='same', kernel_initializer = initializer(seed=0))(X)
    X = BatchNormalization(axis = 3)(X, training=training)
    X = Activation('relu')(X)

    ## Third component of main path (≈2 lines)
    X = Conv2D(filters = F3, kernel_size = 1, strides = (1, 1), padding='valid', kernel_initializer = initializer(seed=0))(X)
    X = BatchNormalization(axis = 3)(X, training=training)

    ##### SHORTCUT PATH ##### (≈2 lines)
    X_shortcut = Conv2D(filters = F3, kernel_size = 1, strides = (s, s), padding='valid', kernel_initializer = initializer(seed=0))(X_shortcut)
    X_shortcut = BatchNormalization(axis = 3)(X_shortcut, training=training)
    ### END CODE HERE

    # Final step: Add shortcut value to main path (Use this order [X, X_shortcut]), and pass it through a RELU activation
    X = Add()([X, X_shortcut])
    X = Activation('relu')(X)

    return X

Let's test it:

from outputs import convolutional_block_output1, convolutional_block_output2

np.random.seed(1)
#X = np.random.randn(3, 4, 4, 6).astype(np.float32)
X1 = np.ones((1, 4, 4, 3)) * -1
X2 = np.ones((1, 4, 4, 3)) * 1
X3 = np.ones((1, 4, 4, 3)) * 3

X = np.concatenate((X1, X2, X3), axis = 0).astype(np.float32)

A = convolutional_block(X, f = 2, filters = [2, 4, 6], training=False)

assert type(A) == EagerTensor, "Use only tensorflow and keras functions"
assert tuple(tf.shape(A).numpy()) == (3, 2, 2, 6), "Wrong shape."
assert np.allclose(A.numpy(), convolutional_block_output1), "Wrong values when training=False."
print(A[0])

B = convolutional_block(X, f = 2, filters = [2, 4, 6], training=True)
assert np.allclose(B.numpy(), convolutional_block_output2), "Wrong values when training=True."

print('\033[92mAll tests passed!')

tf.Tensor(
[[[0.         0.66683817 0.         0.         0.88853896 0.5274254 ]
  [0.         0.65053666 0.         0.         0.89592844 0.49965227]]

 [[0.         0.6312079  0.         0.         0.8636247  0.47643146]
  [0.         0.5688321  0.         0.         0.85534114 0.41709304]]], shape=(2, 2, 6), dtype=float32)
All tests passed!

构建你的第一个残差网络(50层)

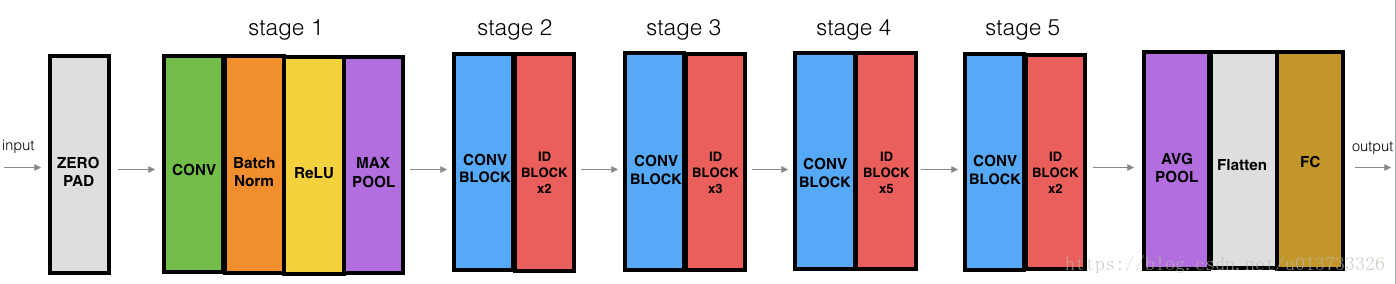

您现在拥有构建非常深入的 ResNet 所需的块。下图详细描述了该神经网络的架构。图中的“ID BLOCK”代表“身份块”,“ID BLOCK x3”表示您应该将 3 个恒等快块堆叠在一起。

零填充用 (3,3) 的填充填充输入

- 2D 卷积有 64 个形状为 (7,7) 的过滤器,并使用 (2,2) 的步幅。

- BatchNorm 应用于输入的“通道”轴。

- 最大池化使用 (3,3) 窗口和 (2,2) 步幅。

- 卷积块使用三组大小为 [64,64,256] 的过滤器,“f”为 3,“s” 为 1。

- 2 个身份块使用三组大小为 [64,64,256] 的过滤器,“f”为 3。

- 卷积块使用三组大小为 [128,128,512] 的过滤器,“f”为 3,“s” 为 2。

- 3 个身份块使用三组大小为 [128,128,512] 的过滤器,“f”为 3。

- 卷积块使用三组大小为 [256, 256, 1024] 的滤波器,“f” 为 3,“s” 为 2。

- 这 5 个身份块使用三组大小为 [256、256、1024] 的过滤器,“f”为 3。

- 卷积块使用三组大小为 [512, 512, 2048] 的滤波器,“f”为 3,“s” 为 2。

- 2 个身份块使用三组大小为 [512, 512, 2048] 的过滤器,“f”为 3。

二维平均池化使用形状为 (2,2) 的窗口。

“扁平化”层没有任何超参数。

全连接(密集)层使用 softmax 激活将其输入减少到类数。
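Before writing the model, it can help to trace how the spatial size of a 64x64 input changes through the layers just listed. The sketch below does that with plain arithmetic. The function names are hypothetical, and it assumes that the first convolution and both pooling layers use "valid" padding and that the average pooling stride equals its window size (the Keras defaults).

```python
def conv_size(n, f, s):
    """Output size of a 'valid' convolution or pooling along one dimension."""
    return (n - f) // s + 1

def resnet50_trace(n=64):
    """Return a list of (layer, spatial size, channels) and the flattened length."""
    trace = [("input", n, 3)]
    n += 2 * 3
    trace.append(("zero padding (3,3)", n, 3))
    n = conv_size(n, f=7, s=2)
    trace.append(("conv 7x7, stride 2", n, 64))
    n = conv_size(n, f=3, s=2)
    trace.append(("max pool 3x3, stride 2", n, 64))
    # Only the strided 1x1 convolutions of each convolutional block change the
    # spatial size; the identity blocks that follow preserve it.
    stages = [(256, 1), (512, 2), (1024, 2), (2048, 2)]
    for stage, (channels, s) in enumerate(stages, start=2):
        n = conv_size(n, f=1, s=s)
        trace.append((f"stage {stage}", n, channels))
    n = conv_size(n, f=2, s=2)
    trace.append(("average pool 2x2", n, 2048))
    return trace, n * n * 2048

trace, flat = resnet50_trace(64)
for name, size, channels in trace:
    print(f"{name:24s} {size:3d} x {size:<3d} x {channels}")
print("flatten ->", flat)
```

For a 64x64 input the sizes are 64, 70, 32, 15, 15, 8, 4, 2 and finally 1, so the dense layer receives 2048 features.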

def ResNet50(input_shape = (64, 64, 3), classes = 6):
    """
    Stage-wise implementation of the architecture of the popular ResNet50:
    CONV2D -> BATCHNORM -> RELU -> MAXPOOL -> CONVBLOCK -> IDBLOCK*2 -> CONVBLOCK -> IDBLOCK*3
    -> CONVBLOCK -> IDBLOCK*5 -> CONVBLOCK -> IDBLOCK*2 -> AVGPOOL -> FLATTEN -> DENSE

    Arguments:
    input_shape -- shape of the images of the dataset
    classes -- integer, number of classes

    Returns:
    model -- a Model() instance in Keras
    """

    # Define the input as a tensor with shape input_shape
    X_input = Input(input_shape)

    # Zero-Padding
    X = ZeroPadding2D((3, 3))(X_input)

    # Stage 1
    X = Conv2D(64, (7, 7), strides = (2, 2), kernel_initializer = glorot_uniform(seed=0))(X)
    X = BatchNormalization(axis = 3)(X)
    X = Activation('relu')(X)
    X = MaxPooling2D((3, 3), strides=(2, 2))(X)

    # Stage 2
    X = convolutional_block(X, f = 3, filters = [64, 64, 256], s = 1)
    X = identity_block(X, 3, [64, 64, 256])
    X = identity_block(X, 3, [64, 64, 256])

    ### START CODE HERE
    ## Stage 3 (≈4 lines)
    X = convolutional_block(X, f = 3, filters = [128, 128, 512], s = 2)
    X = identity_block(X, 3, [128, 128, 512])
    X = identity_block(X, 3, [128, 128, 512])
    X = identity_block(X, 3, [128, 128, 512])

    ## Stage 4 (≈6 lines)
    X = convolutional_block(X, f = 3, filters = [256, 256, 1024], s = 2)
    X = identity_block(X, 3, [256, 256, 1024])
    X = identity_block(X, 3, [256, 256, 1024])
    X = identity_block(X, 3, [256, 256, 1024])
    X = identity_block(X, 3, [256, 256, 1024])
    X = identity_block(X, 3, [256, 256, 1024])

    ## Stage 5 (≈3 lines)
    X = convolutional_block(X, f = 3, filters = [512, 512, 2048], s = 2)
    X = identity_block(X, 3, [512, 512, 2048])
    X = identity_block(X, 3, [512, 512, 2048])

    ## AVGPOOL (≈1 line). Use "X = AveragePooling2D(...)(X)"
    X = AveragePooling2D((2, 2))(X)
    ### END CODE HERE

    # output layer
    X = Flatten()(X)
    X = Dense(classes, activation='softmax', kernel_initializer = glorot_uniform(seed=0))(X)

    # Create model
    model = Model(inputs = X_input, outputs = X)

    return model
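The per-layer parameter counts that Keras reports for a model like this can be worked out by hand. The sketch below uses two hypothetical helper functions and the standard counts: a Conv2D layer has one f x f x C_in kernel plus one bias per filter, and BatchNormalization keeps four values per channel (gamma, beta, moving mean, moving variance).

```python
def conv2d_params(f, c_in, c_out):
    """Weights plus biases of a Conv2D layer with f x f kernels."""
    return f * f * c_in * c_out + c_out

def batchnorm_params(channels):
    """gamma, beta, moving mean and moving variance for each channel."""
    return 4 * channels

print(conv2d_params(7, 3, 64))     # 9472   (stage 1, 7x7 conv on RGB input)
print(conv2d_params(1, 64, 64))    # 4160   (stage 2, first 1x1 conv)
print(conv2d_params(3, 64, 64))    # 36928  (stage 2, 3x3 conv)
print(conv2d_params(1, 64, 256))   # 16640  (stage 2, last 1x1 conv and shortcut)
print(conv2d_params(1, 256, 512))  # 131584 (stage 3, shortcut conv)
print(batchnorm_params(64))        # 256
print(batchnorm_params(256))       # 1024
```

These values agree with the Param # column of the model.summary() output for conv2d_20 through conv2d_24, conv2d_34 and the corresponding batch normalization layers.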

The model is now built; let's take a look at its parameters.

model = ResNet50(input_shape = (64, 64, 3), classes = 6)
print(model.summary())

Model: "model"

__________________________________________________________________________________________________

Layer (type) Output Shape Param # Connected to

==================================================================================================

input_1 (InputLayer) [(None, 64, 64, 3)] 0

__________________________________________________________________________________________________

zero_padding2d (ZeroPadding2D) (None, 70, 70, 3) 0 input_1[0][0]

__________________________________________________________________________________________________

conv2d_20 (Conv2D) (None, 32, 32, 64) 9472 zero_padding2d[0][0]

__________________________________________________________________________________________________

batch_normalization_20 (BatchNo (None, 32, 32, 64) 256 conv2d_20[0][0]

__________________________________________________________________________________________________

activation_18 (Activation) (None, 32, 32, 64) 0 batch_normalization_20[0][0]

__________________________________________________________________________________________________

max_pooling2d (MaxPooling2D) (None, 15, 15, 64) 0 activation_18[0][0]

__________________________________________________________________________________________________

conv2d_21 (Conv2D) (None, 15, 15, 64) 4160 max_pooling2d[0][0]

__________________________________________________________________________________________________

batch_normalization_21 (BatchNo (None, 15, 15, 64) 256 conv2d_21[0][0]

__________________________________________________________________________________________________

activation_19 (Activation) (None, 15, 15, 64) 0 batch_normalization_21[0][0]

__________________________________________________________________________________________________

conv2d_22 (Conv2D) (None, 15, 15, 64) 36928 activation_19[0][0]

__________________________________________________________________________________________________

batch_normalization_22 (BatchNo (None, 15, 15, 64) 256 conv2d_22[0][0]

__________________________________________________________________________________________________

activation_20 (Activation) (None, 15, 15, 64) 0 batch_normalization_22[0][0]

__________________________________________________________________________________________________

conv2d_23 (Conv2D) (None, 15, 15, 256) 16640 activation_20[0][0]

__________________________________________________________________________________________________

conv2d_24 (Conv2D) (None, 15, 15, 256) 16640 max_pooling2d[0][0]

__________________________________________________________________________________________________

batch_normalization_23 (BatchNo (None, 15, 15, 256) 1024 conv2d_23[0][0]

__________________________________________________________________________________________________

batch_normalization_24 (BatchNo (None, 15, 15, 256) 1024 conv2d_24[0][0]

__________________________________________________________________________________________________

add_6 (Add) (None, 15, 15, 256) 0 batch_normalization_23[0][0]

batch_normalization_24[0][0]

__________________________________________________________________________________________________

activation_21 (Activation) (None, 15, 15, 256) 0 add_6[0][0]

__________________________________________________________________________________________________

conv2d_25 (Conv2D) (None, 15, 15, 64) 16448 activation_21[0][0]

__________________________________________________________________________________________________

batch_normalization_25 (BatchNo (None, 15, 15, 64) 256 conv2d_25[0][0]

__________________________________________________________________________________________________

activation_22 (Activation) (None, 15, 15, 64) 0 batch_normalization_25[0][0]

__________________________________________________________________________________________________

conv2d_26 (Conv2D) (None, 15, 15, 64) 36928 activation_22[0][0]

__________________________________________________________________________________________________

batch_normalization_26 (BatchNo (None, 15, 15, 64) 256 conv2d_26[0][0]

__________________________________________________________________________________________________

activation_23 (Activation) (None, 15, 15, 64) 0 batch_normalization_26[0][0]

__________________________________________________________________________________________________

conv2d_27 (Conv2D) (None, 15, 15, 256) 16640 activation_23[0][0]

__________________________________________________________________________________________________

batch_normalization_27 (BatchNo (None, 15, 15, 256) 1024 conv2d_27[0][0]

__________________________________________________________________________________________________

add_7 (Add) (None, 15, 15, 256) 0 activation_21[0][0]

batch_normalization_27[0][0]

__________________________________________________________________________________________________

activation_24 (Activation) (None, 15, 15, 256) 0 add_7[0][0]

__________________________________________________________________________________________________

conv2d_28 (Conv2D) (None, 15, 15, 64) 16448 activation_24[0][0]

__________________________________________________________________________________________________

batch_normalization_28 (BatchNo (None, 15, 15, 64) 256 conv2d_28[0][0]

__________________________________________________________________________________________________

activation_25 (Activation) (None, 15, 15, 64) 0 batch_normalization_28[0][0]

__________________________________________________________________________________________________

conv2d_29 (Conv2D) (None, 15, 15, 64) 36928 activation_25[0][0]

__________________________________________________________________________________________________

batch_normalization_29 (BatchNo (None, 15, 15, 64) 256 conv2d_29[0][0]

__________________________________________________________________________________________________

activation_26 (Activation) (None, 15, 15, 64) 0 batch_normalization_29[0][0]

__________________________________________________________________________________________________

conv2d_30 (Conv2D) (None, 15, 15, 256) 16640 activation_26[0][0]

__________________________________________________________________________________________________

batch_normalization_30 (BatchNo (None, 15, 15, 256) 1024 conv2d_30[0][0]

__________________________________________________________________________________________________

add_8 (Add) (None, 15, 15, 256) 0 activation_24[0][0]

batch_normalization_30[0][0]

__________________________________________________________________________________________________

activation_27 (Activation) (None, 15, 15, 256) 0 add_8[0][0]

__________________________________________________________________________________________________

conv2d_31 (Conv2D) (None, 8, 8, 128) 32896 activation_27[0][0]

__________________________________________________________________________________________________

batch_normalization_31 (BatchNo (None, 8, 8, 128) 512 conv2d_31[0][0]

__________________________________________________________________________________________________

activation_28 (Activation) (None, 8, 8, 128) 0 batch_normalization_31[0][0]

__________________________________________________________________________________________________

conv2d_32 (Conv2D) (None, 8, 8, 128) 147584 activation_28[0][0]

__________________________________________________________________________________________________

batch_normalization_32 (BatchNo (None, 8, 8, 128) 512 conv2d_32[0][0]

__________________________________________________________________________________________________

activation_29 (Activation) (None, 8, 8, 128) 0 batch_normalization_32[0][0]

__________________________________________________________________________________________________

conv2d_33 (Conv2D) (None, 8, 8, 512) 66048 activation_29[0][0]

__________________________________________________________________________________________________

conv2d_34 (Conv2D) (None, 8, 8, 512) 131584 activation_27[0][0]

__________________________________________________________________________________________________

batch_normalization_33 (BatchNo (None, 8, 8, 512) 2048 conv2d_33[0][0]

__________________________________________________________________________________________________

batch_normalization_34 (BatchNo (None, 8, 8, 512) 2048 conv2d_34[0][0]

__________________________________________________________________________________________________

add_9 (Add) (None, 8, 8, 512) 0 batch_normalization_33[0][0]

batch_normalization_34[0][0]

__________________________________________________________________________________________________

activation_30 (Activation) (None, 8, 8, 512) 0 add_9[0][0]

__________________________________________________________________________________________________

conv2d_35 (Conv2D) (None, 8, 8, 128) 65664 activation_30[0][0]

__________________________________________________________________________________________________

batch_normalization_35 (BatchNo (None, 8, 8, 128) 512 conv2d_35[0][0]

__________________________________________________________________________________________________

activation_31 (Activation) (None, 8, 8, 128) 0 batch_normalization_35[0][0]

__________________________________________________________________________________________________

conv2d_36 (Conv2D) (None, 8, 8, 128) 147584 activation_31[0][0]

__________________________________________________________________________________________________

batch_normalization_36 (BatchNo (None, 8, 8, 128) 512 conv2d_36[0][0]

__________________________________________________________________________________________________

activation_32 (Activation) (None, 8, 8, 128) 0 batch_normalization_36[0][0]

__________________________________________________________________________________________________

conv2d_37 (Conv2D) (None, 8, 8, 512) 66048 activation_32[0][0]

__________________________________________________________________________________________________

batch_normalization_37 (BatchNo (None, 8, 8, 512) 2048 conv2d_37[0][0]

__________________________________________________________________________________________________

add_10 (Add) (None, 8, 8, 512) 0 activation_30[0][0]

batch_normalization_37[0][0]

__________________________________________________________________________________________________

activation_33 (Activation) (None, 8, 8, 512) 0 add_10[0][0]

__________________________________________________________________________________________________

conv2d_38 (Conv2D) (None, 8, 8, 128) 65664 activation_33[0][0]

__________________________________________________________________________________________________

batch_normalization_38 (BatchNo (None, 8, 8, 128) 512 conv2d_38[0][0]

__________________________________________________________________________________________________

activation_34 (Activation) (None, 8, 8, 128) 0 batch_normalization_38[0][0]

__________________________________________________________________________________________________

conv2d_39 (Conv2D) (None, 8, 8, 128) 147584 activation_34[0][0]

__________________________________________________________________________________________________

batch_normalization_39 (BatchNo (None, 8, 8, 128) 512 conv2d_39[0][0]

__________________________________________________________________________________________________

activation_35 (Activation) (None, 8, 8, 128) 0 batch_normalization_39[0][0]

__________________________________________________________________________________________________

conv2d_40 (Conv2D) (None, 8, 8, 512) 66048 activation_35[0][0]

__________________________________________________________________________________________________

batch_normalization_40 (BatchNo (None, 8, 8, 512) 2048 conv2d_40[0][0]

__________________________________________________________________________________________________

add_11 (Add) (None, 8, 8, 512) 0 activation_33[0][0]

batch_normalization_40[0][0]

__________________________________________________________________________________________________

activation_36 (Activation) (None, 8, 8, 512) 0 add_11[0][0]

__________________________________________________________________________________________________

conv2d_41 (Conv2D) (None, 8, 8, 128) 65664 activation_36[0][0]

__________________________________________________________________________________________________

batch_normalization_41 (BatchNo (None, 8, 8, 128) 512 conv2d_41[0][0]

__________________________________________________________________________________________________

activation_37 (Activation) (None, 8, 8, 128) 0 batch_normalization_41[0][0]

__________________________________________________________________________________________________

conv2d_42 (Conv2D) (None, 8, 8, 128) 147584 activation_37[0][0]

__________________________________________________________________________________________________

batch_normalization_42 (BatchNo (None, 8, 8, 128) 512 conv2d_42[0][0]

__________________________________________________________________________________________________

activation_38 (Activation) (None, 8, 8, 128) 0 batch_normalization_42[0][0]

__________________________________________________________________________________________________

conv2d_43 (Conv2D) (None, 8, 8, 512) 66048 activation_38[0][0]

__________________________________________________________________________________________________

batch_normalization_43 (BatchNo (None, 8, 8, 512) 2048 conv2d_43[0][0]

__________________________________________________________________________________________________

add_12 (Add) (None, 8, 8, 512) 0 activation_36[0][0]

batch_normalization_43[0][0]

__________________________________________________________________________________________________

activation_39 (Activation) (None, 8, 8, 512) 0 add_12[0][0]

__________________________________________________________________________________________________

conv2d_44 (Conv2D) (None, 4, 4, 256) 131328 activation_39[0][0]

__________________________________________________________________________________________________

batch_normalization_44 (BatchNo (None, 4, 4, 256) 1024 conv2d_44[0][0]

__________________________________________________________________________________________________

activation_40 (Activation) (None, 4, 4, 256) 0 batch_normalization_44[0][0]

__________________________________________________________________________________________________

conv2d_45 (Conv2D) (None, 4, 4, 256) 590080 activation_40[0][0]

__________________________________________________________________________________________________

batch_normalization_45 (BatchNo (None, 4, 4, 256) 1024 conv2d_45[0][0]

__________________________________________________________________________________________________

activation_41 (Activation) (None, 4, 4, 256) 0 batch_normalization_45[0][0]

__________________________________________________________________________________________________

conv2d_46 (Conv2D) (None, 4, 4, 1024) 263168 activation_41[0][0]

__________________________________________________________________________________________________

conv2d_47 (Conv2D) (None, 4, 4, 1024) 525312 activation_39[0][0]

__________________________________________________________________________________________________

batch_normalization_46 (BatchNo (None, 4, 4, 1024) 4096 conv2d_46[0][0]

__________________________________________________________________________________________________

batch_normalization_47 (BatchNo (None, 4, 4, 1024) 4096 conv2d_47[0][0]

__________________________________________________________________________________________________

add_13 (Add) (None, 4, 4, 1024) 0 batch_normalization_46[0][0]

batch_normalization_47[0][0]

__________________________________________________________________________________________________

activation_42 (Activation) (None, 4, 4, 1024) 0 add_13[0][0]

__________________________________________________________________________________________________

conv2d_48 (Conv2D) (None, 4, 4, 256) 262400 activation_42[0][0]

__________________________________________________________________________________________________

batch_normalization_48 (BatchNo (None, 4, 4, 256) 1024 conv2d_48[0][0]

__________________________________________________________________________________________________

activation_43 (Activation) (None, 4, 4, 256) 0 batch_normalization_48[0][0]

__________________________________________________________________________________________________

conv2d_49 (Conv2D) (None, 4, 4, 256) 590080 activation_43[0][0]

__________________________________________________________________________________________________

batch_normalization_49 (BatchNo (None, 4, 4, 256) 1024 conv2d_49[0][0]

__________________________________________________________________________________________________

activation_44 (Activation) (None, 4, 4, 256) 0 batch_normalization_49[0][0]

__________________________________________________________________________________________________

conv2d_50 (Conv2D) (None, 4, 4, 1024) 263168 activation_44[0][0]

__________________________________________________________________________________________________

batch_normalization_50 (BatchNo (None, 4, 4, 1024) 4096 conv2d_50[0][0]

__________________________________________________________________________________________________

add_14 (Add) (None, 4, 4, 1024) 0 activation_42[0][0]

batch_normalization_50[0][0]

__________________________________________________________________________________________________

activation_45 (Activation) (None, 4, 4, 1024) 0 add_14[0][0]

__________________________________________________________________________________________________

conv2d_51 (Conv2D) (None, 4, 4, 256) 262400 activation_45[0][0]

__________________________________________________________________________________________________

batch_normalization_51 (BatchNo (None, 4, 4, 256) 1024 conv2d_51[0][0]

__________________________________________________________________________________________________

activation_46 (Activation) (None, 4, 4, 256) 0 batch_normalization_51[0][0]

__________________________________________________________________________________________________

conv2d_52 (Conv2D) (None, 4, 4, 256) 590080 activation_46[0][0]

__________________________________________________________________________________________________

batch_normalization_52 (BatchNo (None, 4, 4, 256) 1024 conv2d_52[0][0]

__________________________________________________________________________________________________

activation_47 (Activation) (None, 4, 4, 256) 0 batch_normalization_52[0][0]

__________________________________________________________________________________________________

conv2d_53 (Conv2D) (None, 4, 4, 1024) 263168 activation_47[0][0]

__________________________________________________________________________________________________

batch_normalization_53 (BatchNo (None, 4, 4, 1024) 4096 conv2d_53[0][0]

__________________________________________________________________________________________________

add_15 (Add) (None, 4, 4, 1024) 0 activation_45[0][0]

batch_normalization_53[0][0]

__________________________________________________________________________________________________

activation_48 (Activation) (None, 4, 4, 1024) 0 add_15[0][0]

__________________________________________________________________________________________________

conv2d_54 (Conv2D) (None, 4, 4, 256) 262400 activation_48[0][0]

__________________________________________________________________________________________________

batch_normalization_54 (BatchNo (None, 4, 4, 256) 1024 conv2d_54[0][0]

__________________________________________________________________________________________________

activation_49 (Activation) (None, 4, 4, 256) 0 batch_normalization_54[0][0]

__________________________________________________________________________________________________

conv2d_55 (Conv2D) (None, 4, 4, 256) 590080 activation_49[0][0]

__________________________________________________________________________________________________

batch_normalization_55 (BatchNo (None, 4, 4, 256) 1024 conv2d_55[0][0]

__________________________________________________________________________________________________

activation_50 (Activation) (None, 4, 4, 256) 0 batch_normalization_55[0][0]

__________________________________________________________________________________________________

conv2d_56 (Conv2D) (None, 4, 4, 1024) 263168 activation_50[0][0]

__________________________________________________________________________________________________

batch_normalization_56 (BatchNo (None, 4, 4, 1024) 4096 conv2d_56[0][0]

__________________________________________________________________________________________________

add_16 (Add) (None, 4, 4, 1024) 0 activation_48[0][0]

batch_normalization_56[0][0]

__________________________________________________________________________________________________

activation_51 (Activation) (None, 4, 4, 1024) 0 add_16[0][0]

__________________________________________________________________________________________________

conv2d_57 (Conv2D) (None, 4, 4, 256) 262400 activation_51[0][0]

__________________________________________________________________________________________________

batch_normalization_57 (BatchNo (None, 4, 4, 256) 1024 conv2d_57[0][0]

__________________________________________________________________________________________________

activation_52 (Activation) (None, 4, 4, 256) 0 batch_normalization_57[0][0]

__________________________________________________________________________________________________

conv2d_58 (Conv2D) (None, 4, 4, 256) 590080 activation_52[0][0]

__________________________________________________________________________________________________

batch_normalization_58 (BatchNo (None, 4, 4, 256) 1024 conv2d_58[0][0]

__________________________________________________________________________________________________

activation_53 (Activation) (None, 4, 4, 256) 0 batch_normalization_58[0][0]

__________________________________________________________________________________________________

conv2d_59 (Conv2D) (None, 4, 4, 1024) 263168 activation_53[0][0]

__________________________________________________________________________________________________

batch_normalization_59 (BatchNo (None, 4, 4, 1024) 4096 conv2d_59[0][0]

__________________________________________________________________________________________________

add_17 (Add) (None, 4, 4, 1024) 0 activation_51[0][0]

batch_normalization_59[0][0]

__________________________________________________________________________________________________

activation_54 (Activation) (None, 4, 4, 1024) 0 add_17[0][0]

__________________________________________________________________________________________________

conv2d_60 (Conv2D) (None, 4, 4, 256) 262400 activation_54[0][0]

__________________________________________________________________________________________________

batch_normalization_60 (BatchNo (None, 4, 4, 256) 1024 conv2d_60[0][0]

__________________________________________________________________________________________________

activation_55 (Activation) (None, 4, 4, 256) 0 batch_normalization_60[0][0]

__________________________________________________________________________________________________

conv2d_61 (Conv2D) (None, 4, 4, 256) 590080 activation_55[0][0]

__________________________________________________________________________________________________

batch_normalization_61 (BatchNo (None, 4, 4, 256) 1024 conv2d_61[0][0]

__________________________________________________________________________________________________

activation_56 (Activation) (None, 4, 4, 256) 0 batch_normalization_61[0][0]

__________________________________________________________________________________________________

conv2d_62 (Conv2D) (None, 4, 4, 1024) 263168 activation_56[0][0]

__________________________________________________________________________________________________

batch_normalization_62 (BatchNo (None, 4, 4, 1024) 4096 conv2d_62[0][0]

__________________________________________________________________________________________________

add_18 (Add) (None, 4, 4, 1024) 0 activation_54[0][0]

batch_normalization_62[0][0]

__________________________________________________________________________________________________

activation_57 (Activation) (None, 4, 4, 1024) 0 add_18[0][0]

__________________________________________________________________________________________________

conv2d_63 (Conv2D) (None, 2, 2, 512) 524800 activation_57[0][0]

__________________________________________________________________________________________________

batch_normalization_63 (BatchNo (None, 2, 2, 512) 2048 conv2d_63[0][0]

__________________________________________________________________________________________________

activation_58 (Activation) (None, 2, 2, 512) 0 batch_normalization_63[0][0]

__________________________________________________________________________________________________

conv2d_64 (Conv2D) (None, 2, 2, 512) 2359808 activation_58[0][0]

__________________________________________________________________________________________________

batch_normalization_64 (BatchNo (None, 2, 2, 512) 2048 conv2d_64[0][0]

__________________________________________________________________________________________________

activation_59 (Activation) (None, 2, 2, 512) 0 batch_normalization_64[0][0]

__________________________________________________________________________________________________

conv2d_65 (Conv2D) (None, 2, 2, 2048) 1050624 activation_59[0][0]

__________________________________________________________________________________________________

conv2d_66 (Conv2D) (None, 2, 2, 2048) 2099200 activation_57[0][0]

__________________________________________________________________________________________________

batch_normalization_65 (BatchNo (None, 2, 2, 2048) 8192 conv2d_65[0][0]

__________________________________________________________________________________________________

batch_normalization_66 (BatchNo (None, 2, 2, 2048) 8192 conv2d_66[0][0]

__________________________________________________________________________________________________

add_19 (Add) (None, 2, 2, 2048) 0 batch_normalization_65[0][0]

batch_normalization_66[0][0]

__________________________________________________________________________________________________

activation_60 (Activation) (None, 2, 2, 2048) 0 add_19[0][0]

__________________________________________________________________________________________________

conv2d_67 (Conv2D) (None, 2, 2, 512) 1049088 activation_60[0][0]

__________________________________________________________________________________________________

batch_normalization_67 (BatchNo (None, 2, 2, 512) 2048 conv2d_67[0][0]

__________________________________________________________________________________________________

activation_61 (Activation) (None, 2, 2, 512) 0 batch_normalization_67[0][0]

__________________________________________________________________________________________________

conv2d_68 (Conv2D) (None, 2, 2, 512) 2359808 activation_61[0][0]

__________________________________________________________________________________________________

batch_normalization_68 (BatchNo (None, 2, 2, 512) 2048 conv2d_68[0][0]

__________________________________________________________________________________________________

activation_62 (Activation) (None, 2, 2, 512) 0 batch_normalization_68[0][0]

__________________________________________________________________________________________________

conv2d_69 (Conv2D) (None, 2, 2, 2048) 1050624 activation_62[0][0]

__________________________________________________________________________________________________

batch_normalization_69 (BatchNo (None, 2, 2, 2048) 8192 conv2d_69[0][0]

__________________________________________________________________________________________________

add_20 (Add) (None, 2, 2, 2048) 0 activation_60[0][0]

batch_normalization_69[0][0]

__________________________________________________________________________________________________

activation_63 (Activation) (None, 2, 2, 2048) 0 add_20[0][0]

__________________________________________________________________________________________________

conv2d_70 (Conv2D) (None, 2, 2, 512) 1049088 activation_63[0][0]

__________________________________________________________________________________________________

batch_normalization_70 (BatchNo (None, 2, 2, 512) 2048 conv2d_70[0][0]

__________________________________________________________________________________________________

activation_64 (Activation) (None, 2, 2, 512) 0 batch_normalization_70[0][0]

__________________________________________________________________________________________________

conv2d_71 (Conv2D) (None, 2, 2, 512) 2359808 activation_64[0][0]

__________________________________________________________________________________________________

batch_normalization_71 (BatchNo (None, 2, 2, 512) 2048 conv2d_71[0][0]

__________________________________________________________________________________________________

activation_65 (Activation) (None, 2, 2, 512) 0 batch_normalization_71[0][0]

__________________________________________________________________________________________________

conv2d_72 (Conv2D) (None, 2, 2, 2048) 1050624 activation_65[0][0]

__________________________________________________________________________________________________

batch_normalization_72 (BatchNo (None, 2, 2, 2048) 8192 conv2d_72[0][0]

__________________________________________________________________________________________________

add_21 (Add) (None, 2, 2, 2048) 0 activation_63[0][0]

batch_normalization_72[0][0]

__________________________________________________________________________________________________

activation_66 (Activation) (None, 2, 2, 2048) 0 add_21[0][0]

__________________________________________________________________________________________________

average_pooling2d (AveragePooli (None, 1, 1, 2048) 0 activation_66[0][0]

__________________________________________________________________________________________________

flatten (Flatten) (None, 2048) 0 average_pooling2d[0][0]

__________________________________________________________________________________________________

dense (Dense) (None, 6) 12294 flatten[0][0]

==================================================================================================

Total params: 23,600,006

Trainable params: 23,546,886

Non-trainable params: 53,120

__________________________________________________________________________________________________

None
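The parameter counts in the summary above can be checked by hand. The sketch below is a minimal check, not part of the original notebook: the helper names are hypothetical, and the 1x1 / 3x3 / 1x1 kernel sizes are an assumption based on the usual bottleneck layout, chosen because they reproduce the printed counts.

```python
def conv2d_params(kernel_h, kernel_w, in_channels, out_channels):
    """Kernel weights plus one bias per output channel."""
    return kernel_h * kernel_w * in_channels * out_channels + out_channels


def batchnorm_params(channels):
    """gamma and beta (trainable) plus moving mean and variance (non-trainable)."""
    return 4 * channels


def dense_params(in_features, out_features):
    """Weight matrix plus one bias per output unit."""
    return in_features * out_features + out_features


# Kernel sizes below are assumed (1x1, 3x3, 1x1), not stated in the summary.
print(conv2d_params(1, 1, 1024, 256))   # conv2d_51 -> 262400
print(conv2d_params(3, 3, 256, 256))    # conv2d_52 -> 590080
print(conv2d_params(1, 1, 256, 1024))   # conv2d_53 -> 263168
print(batchnorm_params(1024))           # batch_normalization_53 -> 4096
print(dense_params(2048, 6))            # dense -> 12294

# Trainable + non-trainable should add up to the reported total.
print(23_546_886 + 53_120)              # -> 23600006
```

The non-trainable parameters are the moving mean and variance of the batch normalization layers, which is why they are a small fraction of the total.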

from outputs import ResNet50_summary

model = ResNet50(input_shape = (64, 64, 3), classes = 6)
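With `input_shape = (64, 64, 3)`, the spatial size shrinks stage by stage until it reaches the 4x4, 2x2 and 1x1 shapes seen at the end of the summary above. The sketch below traces that arithmetic. The kernel sizes, strides and padding are assumptions (a 7x7 stride-2 stem after 3 pixels of zero padding, 3x3 stride-2 max pooling, a stride-2 1x1 convolution at the start of stages 3 to 5, and 2x2 average pooling); `output_size` is a hypothetical helper.

```python
def output_size(size, kernel, stride, padding=0):
    """Spatial size along one axis after a 'valid' convolution or pooling."""
    return (size + 2 * padding - kernel) // stride + 1


sizes = {}
size = 64                                                # input is 64x64
size = output_size(size, kernel=7, stride=2, padding=3)  # assumed stem: pad 3, 7x7 conv, stride 2
sizes["conv1"] = size
size = output_size(size, kernel=3, stride=2)             # assumed 3x3 max pooling, stride 2
sizes["max_pool"] = size
# Stage 2 is assumed to keep stride 1, so the size does not change there.
for stage in ("stage3", "stage4", "stage5"):
    size = output_size(size, kernel=1, stride=2)         # assumed 1x1 conv, stride 2
    sizes[stage] = size
size = output_size(size, kernel=2, stride=2)             # assumed 2x2 average pooling
sizes["avg_pool"] = size

print(sizes)
# {'conv1': 32, 'max_pool': 15, 'stage3': 8, 'stage4': 4, 'stage5': 2, 'avg_pool': 1}
```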

The model is now instantiated. Next, we compile it:

model.compile(optimizer='adam', loss='categorical_crossentropy', metrics=['accuracy'])

Next, we load the dataset and train the model:

X_train_orig, Y_train_orig, X_test_orig, Y_test_orig, classes = load_dataset()

# Normalize image vectors

X_train = X_train_orig / 255.

X_test = X_test_orig / 255.

# Convert training and test labels to one hot matrices

Y_train = convert_to_one_hot(Y_train_orig, 6).T

Y_test = convert_to_one_hot(Y_test_orig, 6).T

print ("number of training examples = " + str(X_train.shape[0]))

print ("number of test examples = " + str(X_test.shape[0]))

print ("X_train shape: " + str(X_train.shape))

print ("Y_train shape: " + str(Y_train.shape))

print ("X_test shape: " + str(X_test.shape))

print ("Y_test shape: " + str(Y_test.shape))

number of training examples = 1080

number of test examples = 120

X_train shape: (1080, 64, 64, 3)

Y_train shape: (1080, 6)

X_test shape: (120, 64, 64, 3)

Y_test shape: (120, 6)
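The label shapes above go from a row of integer labels to an `(m, 6)` one-hot matrix. The sketch below shows one way such a conversion can work. It assumes, based on the `.T` in the code above, that the helper in `resnets_utils` returns a `(classes, m)` matrix; `one_hot` is a hypothetical stand-in, not the actual implementation.

```python
import numpy as np


def one_hot(labels, num_classes):
    """Return a (num_classes, m) one-hot matrix for integer labels."""
    labels = np.asarray(labels).reshape(-1)   # flatten (1, m) to (m,)
    return np.eye(num_classes)[labels].T      # pick identity rows, then transpose


Y_orig = np.array([[0, 3, 5, 1]])   # toy labels with shape (1, m)
Y = one_hot(Y_orig, 6).T            # transpose back to (m, num_classes)

print(Y.shape)   # (4, 6)
print(Y[1])      # [0. 0. 0. 1. 0. 0.]
```

Each row has a single 1 at the index of its class, which is the format `categorical_crossentropy` expects.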

model.fit(X_train, Y_train, epochs = 10, batch_size = 32)

Epoch 1/10

34/34 [==============================] - 10s 129ms/step - loss: 2.2749 - accuracy: 0.4417

Epoch 2/10

34/34 [==============================] - 3s 92ms/step - loss: 1.0135 - accuracy: 0.6889

Epoch 3/10

34/34 [==============================] - 3s 92ms/step - loss: 0.3830 - accuracy: 0.8694

Epoch 4/10

34/34 [==============================] - 3s 91ms/step - loss: 0.2390 - accuracy: 0.9241

Epoch 5/10

34/34 [==============================] - 3s 91ms/step - loss: 0.1527 - accuracy: 0.9519

Epoch 6/10

34/34 [==============================] - 3s 91ms/step - loss: 0.1050 - accuracy: 0.9648

Epoch 7/10

34/34 [==============================] - 3s 91ms/step - loss: 0.1824 - accuracy: 0.9444

Epoch 8/10

34/34 [==============================] - 3s 92ms/step - loss: 0.5906 - accuracy: 0.8074

Epoch 9/10

34/34 [==============================] - 3s 91ms/step - loss: 0.4195 - accuracy: 0.8676

Epoch 10/10

34/34 [==============================] - 3s 91ms/step - loss: 0.3377 - accuracy: 0.9194
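The `34/34` in each epoch of the log is the number of mini-batches. A quick check, using only the dataset size and `batch_size` from the code above (the variable names are illustrative):

```python
import math

num_examples = 1080   # training examples reported above
batch_size = 32       # value passed to model.fit

steps_per_epoch = math.ceil(num_examples / batch_size)
last_batch_size = num_examples - (steps_per_epoch - 1) * batch_size

print(steps_per_epoch)   # 34
print(last_batch_size)   # 24
```

So each epoch runs 33 full batches of 32 images and one final batch of 24.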

Now we evaluate the model on the test set:

preds = model.evaluate(X_test, Y_test)

print ("Loss = " + str(preds[0]))

print ("Test Accuracy = " + str(preds[1]))

4/4 [==============================] - 1s 31ms/step - loss: 0.4844 - accuracy: 0.8417

Loss = 0.4843602478504181

Test Accuracy = 0.8416666388511658
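To put the test accuracy in concrete terms, it can be converted back to a count of images. This small sketch assumes the reported accuracy is simply correct predictions divided by the 120 test examples:

```python
test_accuracy = 0.8416666388511658   # value printed above
num_test = 120                       # test examples reported earlier

correct = round(test_accuracy * num_test)
wrong = num_test - correct

print(correct, wrong)   # 101 19
```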

The author has already trained a ResNet50 model on the hand-sign dataset. You can load and run that trained model with the code below:

pre_trained_model = tf.keras.models.load_model('resnet50.h5')

Then evaluate the author's trained weights on the test set:

preds = pre_trained_model.evaluate(X_test, Y_test)

print ("Loss = " + str(preds[0]))

print ("Test Accuracy = " + str(preds[1]))

Testing with your own image

img_path = 'C:/Users/Style/Desktop/kun.png'

img = image.load_img(img_path, target_size=(64, 64))

x = image.img_to_array(img)

x = np.expand_dims(x, axis=0)

x = x/255.0

print('Input image shape:', x.shape)

imshow(img)

prediction = model.predict(x)

print("Class prediction vector [p(0), p(1), p(2), p(3), p(4), p(5)] = ", prediction)

print("Class:", np.argmax(prediction))

Input image shape: (1, 64, 64, 3)

Class prediction vector [p(0), p(1), p(2), p(3), p(4), p(5)] = [[0.01301673 0.8742848 0.00662233 0.05449386 0.02079306 0.03078919]]

Class: 1
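The prediction vector above is a row of six class probabilities, and `np.argmax` picks the index of the largest one. The sketch below replays that step on the printed values, so it runs without the model:

```python
import numpy as np

# Probabilities copied from the output above, shape (1, 6).
prediction = np.array([[0.01301673, 0.8742848, 0.00662233,
                        0.05449386, 0.02079306, 0.03078919]])

predicted_class = int(np.argmax(prediction, axis=1)[0])   # index of the largest probability
confidence = float(prediction[0, predicted_class])

print(predicted_class)                      # 1
print(round(confidence, 4))                 # 0.8743
print(round(float(prediction.sum()), 4))    # 1.0
```

The probabilities sum to about 1, as expected from a softmax output, and the model assigns roughly 87% to class 1.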

pre_trained_model.summary()

Model: "ResNet50"

__________________________________________________________________________________________________

Layer (type) Output Shape Param # Connected to

==================================================================================================

input_1 (InputLayer) [(None, 64, 64, 3)] 0

__________________________________________________________________________________________________

zero_padding2d_1 (ZeroPadding2D (None, 70, 70, 3) 0 input_1[0][0]

__________________________________________________________________________________________________

conv1 (Conv2D) (None, 32, 32, 64) 9472 zero_padding2d_1[0][0]

__________________________________________________________________________________________________

bn_conv1 (BatchNormalization) (None, 32, 32, 64) 256 conv1[0][0]

__________________________________________________________________________________________________

activation_1 (Activation) (None, 32, 32, 64) 0 bn_conv1[0][0]

__________________________________________________________________________________________________

max_pooling2d_1 (MaxPooling2D) (None, 15, 15, 64) 0 activation_1[0][0]

__________________________________________________________________________________________________

res2a_branch2a (Conv2D) (None, 15, 15, 64) 4160 max_pooling2d_1[0][0]

__________________________________________________________________________________________________

bn2a_branch2a (BatchNormalizati (None, 15, 15, 64) 256 res2a_branch2a[0][0]

__________________________________________________________________________________________________

activation_2 (Activation) (None, 15, 15, 64) 0 bn2a_branch2a[0][0]

__________________________________________________________________________________________________

res2a_branch2b (Conv2D) (None, 15, 15, 64) 36928 activation_2[0][0]

__________________________________________________________________________________________________

bn2a_branch2b (BatchNormalizati (None, 15, 15, 64) 256 res2a_branch2b[0][0]

__________________________________________________________________________________________________

activation_3 (Activation) (None, 15, 15, 64) 0 bn2a_branch2b[0][0]

__________________________________________________________________________________________________

res2a_branch2c (Conv2D) (None, 15, 15, 256) 16640 activation_3[0][0]

__________________________________________________________________________________________________

res2a_branch1 (Conv2D) (None, 15, 15, 256) 16640 max_pooling2d_1[0][0]

__________________________________________________________________________________________________

bn2a_branch2c (BatchNormalizati (None, 15, 15, 256) 1024 res2a_branch2c[0][0]

__________________________________________________________________________________________________

bn2a_branch1 (BatchNormalizatio (None, 15, 15, 256) 1024 res2a_branch1[0][0]

__________________________________________________________________________________________________

add_1 (Add) (None, 15, 15, 256) 0 bn2a_branch2c[0][0]

bn2a_branch1[0][0]

__________________________________________________________________________________________________

activation_4 (Activation) (None, 15, 15, 256) 0 add_1[0][0]

__________________________________________________________________________________________________

res2b_branch2a (Conv2D) (None, 15, 15, 64) 16448 activation_4[0][0]

__________________________________________________________________________________________________

bn2b_branch2a (BatchNormalizati (None, 15, 15, 64) 256 res2b_branch2a[0][0]

__________________________________________________________________________________________________

activation_5 (Activation) (None, 15, 15, 64) 0 bn2b_branch2a[0][0]

__________________________________________________________________________________________________

res2b_branch2b (Conv2D) (None, 15, 15, 64) 36928 activation_5[0][0]

__________________________________________________________________________________________________

bn2b_branch2b (BatchNormalizati (None, 15, 15, 64) 256 res2b_branch2b[0][0]

__________________________________________________________________________________________________

activation_6 (Activation) (None, 15, 15, 64) 0 bn2b_branch2b[0][0]

__________________________________________________________________________________________________

res2b_branch2c (Conv2D) (None, 15, 15, 256) 16640 activation_6[0][0]

__________________________________________________________________________________________________

bn2b_branch2c (BatchNormalizati (None, 15, 15, 256) 1024 res2b_branch2c[0][0]

__________________________________________________________________________________________________

add_2 (Add) (None, 15, 15, 256) 0 bn2b_branch2c[0][0]

activation_4[0][0]

__________________________________________________________________________________________________

activation_7 (Activation) (None, 15, 15, 256) 0 add_2[0][0]

__________________________________________________________________________________________________

res2c_branch2a (Conv2D) (None, 15, 15, 64) 16448 activation_7[0][0]

__________________________________________________________________________________________________

bn2c_branch2a (BatchNormalizati (None, 15, 15, 64) 256 res2c_branch2a[0][0]

__________________________________________________________________________________________________

activation_8 (Activation) (None, 15, 15, 64) 0 bn2c_branch2a[0][0]

__________________________________________________________________________________________________

res2c_branch2b (Conv2D) (None, 15, 15, 64) 36928 activation_8[0][0]

__________________________________________________________________________________________________

bn2c_branch2b (BatchNormalizati (None, 15, 15, 64) 256 res2c_branch2b[0][0]

__________________________________________________________________________________________________

activation_9 (Activation) (None, 15, 15, 64) 0 bn2c_branch2b[0][0]

__________________________________________________________________________________________________

res2c_branch2c (Conv2D) (None, 15, 15, 256) 16640 activation_9[0][0]

__________________________________________________________________________________________________

bn2c_branch2c (BatchNormalizati (None, 15, 15, 256) 1024 res2c_branch2c[0][0]

__________________________________________________________________________________________________

add_3 (Add) (None, 15, 15, 256) 0 bn2c_branch2c[0][0]

activation_7[0][0]

__________________________________________________________________________________________________

activation_10 (Activation) (None, 15, 15, 256) 0 add_3[0][0]

__________________________________________________________________________________________________

res3a_branch2a (Conv2D) (None, 8, 8, 128) 32896 activation_10[0][0]

__________________________________________________________________________________________________

bn3a_branch2a (BatchNormalizati (None, 8, 8, 128) 512 res3a_branch2a[0][0]

__________________________________________________________________________________________________

activation_11 (Activation) (None, 8, 8, 128) 0 bn3a_branch2a[0][0]

__________________________________________________________________________________________________

res3a_branch2b (Conv2D) (None, 8, 8, 128) 147584 activation_11[0][0]

__________________________________________________________________________________________________

bn3a_branch2b (BatchNormalizati (None, 8, 8, 128) 512 res3a_branch2b[0][0]

__________________________________________________________________________________________________

activation_12 (Activation) (None, 8, 8, 128) 0 bn3a_branch2b[0][0]

__________________________________________________________________________________________________

res3a_branch2c (Conv2D) (None, 8, 8, 512) 66048 activation_12[0][0]

__________________________________________________________________________________________________

res3a_branch1 (Conv2D) (None, 8, 8, 512) 131584 activation_10[0][0]

__________________________________________________________________________________________________

bn3a_branch2c (BatchNormalizati (None, 8, 8, 512) 2048 res3a_branch2c[0][0]

__________________________________________________________________________________________________

bn3a_branch1 (BatchNormalizatio (None, 8, 8, 512) 2048 res3a_branch1[0][0]

__________________________________________________________________________________________________

add_4 (Add) (None, 8, 8, 512) 0 bn3a_branch2c[0][0]

bn3a_branch1[0][0]

__________________________________________________________________________________________________

activation_13 (Activation) (None, 8, 8, 512) 0 add_4[0][0]

__________________________________________________________________________________________________

res3b_branch2a (Conv2D) (None, 8, 8, 128) 65664 activation_13[0][0]

__________________________________________________________________________________________________

bn3b_branch2a (BatchNormalizati (None, 8, 8, 128) 512 res3b_branch2a[0][0]

__________________________________________________________________________________________________

activation_14 (Activation) (None, 8, 8, 128) 0 bn3b_branch2a[0][0]

__________________________________________________________________________________________________

res3b_branch2b (Conv2D) (None, 8, 8, 128) 147584 activation_14[0][0]

__________________________________________________________________________________________________

bn3b_branch2b (BatchNormalizati (None, 8, 8, 128) 512 res3b_branch2b[0][0]

__________________________________________________________________________________________________

activation_15 (Activation) (None, 8, 8, 128) 0 bn3b_branch2b[0][0]

__________________________________________________________________________________________________

res3b_branch2c (Conv2D) (None, 8, 8, 512) 66048 activation_15[0][0]

__________________________________________________________________________________________________

bn3b_branch2c (BatchNormalizati (None, 8, 8, 512) 2048 res3b_branch2c[0][0]

__________________________________________________________________________________________________

add_5 (Add) (None, 8, 8, 512) 0 bn3b_branch2c[0][0]

activation_13[0][0]

__________________________________________________________________________________________________

activation_16 (Activation) (None, 8, 8, 512) 0 add_5[0][0]

__________________________________________________________________________________________________

res3c_branch2a (Conv2D) (None, 8, 8, 128) 65664 activation_16[0][0]

__________________________________________________________________________________________________

bn3c_branch2a (BatchNormalizati (None, 8, 8, 128) 512 res3c_branch2a[0][0]

__________________________________________________________________________________________________

activation_17 (Activation) (None, 8, 8, 128) 0 bn3c_branch2a[0][0]

__________________________________________________________________________________________________

res3c_branch2b (Conv2D) (None, 8, 8, 128) 147584 activation_17[0][0]

__________________________________________________________________________________________________

bn3c_branch2b (BatchNormalizati (None, 8, 8, 128) 512 res3c_branch2b[0][0]

__________________________________________________________________________________________________

activation_18 (Activation) (None, 8, 8, 128) 0 bn3c_branch2b[0][0]

__________________________________________________________________________________________________

res3c_branch2c (Conv2D) (None, 8, 8, 512) 66048 activation_18[0][0]

__________________________________________________________________________________________________

bn3c_branch2c (BatchNormalizati (None, 8, 8, 512) 2048 res3c_branch2c[0][0]

__________________________________________________________________________________________________

add_6 (Add) (None, 8, 8, 512) 0 bn3c_branch2c[0][0]

activation_16[0][0]

__________________________________________________________________________________________________

activation_19 (Activation) (None, 8, 8, 512) 0 add_6[0][0]

__________________________________________________________________________________________________

res3d_branch2a (Conv2D) (None, 8, 8, 128) 65664 activation_19[0][0]

__________________________________________________________________________________________________

bn3d_branch2a (BatchNormalizati (None, 8, 8, 128) 512 res3d_branch2a[0][0]

__________________________________________________________________________________________________

activation_20 (Activation) (None, 8, 8, 128) 0 bn3d_branch2a[0][0]

__________________________________________________________________________________________________

res3d_branch2b (Conv2D) (None, 8, 8, 128) 147584 activation_20[0][0]

__________________________________________________________________________________________________

bn3d_branch2b (BatchNormalizati (None, 8, 8, 128) 512 res3d_branch2b[0][0]

__________________________________________________________________________________________________

activation_21 (Activation) (None, 8, 8, 128) 0 bn3d_branch2b[0][0]

__________________________________________________________________________________________________

res3d_branch2c (Conv2D) (None, 8, 8, 512) 66048 activation_21[0][0]

__________________________________________________________________________________________________

bn3d_branch2c (BatchNormalizati (None, 8, 8, 512) 2048 res3d_branch2c[0][0]

__________________________________________________________________________________________________

add_7 (Add) (None, 8, 8, 512) 0 bn3d_branch2c[0][0]

activation_19[0][0]

__________________________________________________________________________________________________

activation_22 (Activation) (None, 8, 8, 512) 0 add_7[0][0]

__________________________________________________________________________________________________

res4a_branch2a (Conv2D) (None, 4, 4, 256) 131328 activation_22[0][0]

__________________________________________________________________________________________________

bn4a_branch2a (BatchNormalizati (None, 4, 4, 256) 1024 res4a_branch2a[0][0]

__________________________________________________________________________________________________

activation_23 (Activation) (None, 4, 4, 256) 0 bn4a_branch2a[0][0]

__________________________________________________________________________________________________

res4a_branch2b (Conv2D) (None, 4, 4, 256) 590080 activation_23[0][0]

__________________________________________________________________________________________________

bn4a_branch2b (BatchNormalizati (None, 4, 4, 256) 1024 res4a_branch2b[0][0]

__________________________________________________________________________________________________

activation_24 (Activation) (None, 4, 4, 256) 0 bn4a_branch2b[0][0]

__________________________________________________________________________________________________

res4a_branch2c (Conv2D) (None, 4, 4, 1024) 263168 activation_24[0][0]

__________________________________________________________________________________________________

res4a_branch1 (Conv2D) (None, 4, 4, 1024) 525312 activation_22[0][0]

__________________________________________________________________________________________________

bn4a_branch2c (BatchNormalizati (None, 4, 4, 1024) 4096 res4a_branch2c[0][0]

__________________________________________________________________________________________________

bn4a_branch1 (BatchNormalizatio (None, 4, 4, 1024) 4096 res4a_branch1[0][0]

__________________________________________________________________________________________________

add_8 (Add) (None, 4, 4, 1024) 0 bn4a_branch2c[0][0]

bn4a_branch1[0][0]

__________________________________________________________________________________________________

activation_25 (Activation) (None, 4, 4, 1024) 0 add_8[0][0]

__________________________________________________________________________________________________

res4b_branch2a (Conv2D) (None, 4, 4, 256) 262400 activation_25[0][0]

__________________________________________________________________________________________________

bn4b_branch2a (BatchNormalizati (None, 4, 4, 256) 1024 res4b_branch2a[0][0]

__________________________________________________________________________________________________

activation_26 (Activation) (None, 4, 4, 256) 0 bn4b_branch2a[0][0]

__________________________________________________________________________________________________

res4b_branch2b (Conv2D) (None, 4, 4, 256) 590080 activation_26[0][0]

__________________________________________________________________________________________________

bn4b_branch2b (BatchNormalizati (None, 4, 4, 256) 1024 res4b_branch2b[0][0]

__________________________________________________________________________________________________

activation_27 (Activation) (None, 4, 4, 256) 0 bn4b_branch2b[0][0]

__________________________________________________________________________________________________

res4b_branch2c (Conv2D) (None, 4, 4, 1024) 263168 activation_27[0][0]

__________________________________________________________________________________________________

bn4b_branch2c (BatchNormalizati (None, 4, 4, 1024) 4096 res4b_branch2c[0][0]

__________________________________________________________________________________________________

add_9 (Add) (None, 4, 4, 1024) 0 bn4b_branch2c[0][0]

activation_25[0][0]

__________________________________________________________________________________________________

activation_28 (Activation) (None, 4, 4, 1024) 0 add_9[0][0]

__________________________________________________________________________________________________

res4c_branch2a (Conv2D) (None, 4, 4, 256) 262400 activation_28[0][0]

__________________________________________________________________________________________________

bn4c_branch2a (BatchNormalizati (None, 4, 4, 256) 1024 res4c_branch2a[0][0]

__________________________________________________________________________________________________

activation_29 (Activation) (None, 4, 4, 256) 0 bn4c_branch2a[0][0]

__________________________________________________________________________________________________

res4c_branch2b (Conv2D) (None, 4, 4, 256) 590080 activation_29[0][0]

__________________________________________________________________________________________________

bn4c_branch2b (BatchNormalizati (None, 4, 4, 256) 1024 res4c_branch2b[0][0]

__________________________________________________________________________________________________

activation_30 (Activation) (None, 4, 4, 256) 0 bn4c_branch2b[0][0]

__________________________________________________________________________________________________

res4c_branch2c (Conv2D) (None, 4, 4, 1024) 263168 activation_30[0][0]

__________________________________________________________________________________________________

bn4c_branch2c (BatchNormalizati (None, 4, 4, 1024) 4096 res4c_branch2c[0][0]

__________________________________________________________________________________________________

add_10 (Add) (None, 4, 4, 1024) 0 bn4c_branch2c[0][0], activation_28[0][0]

__________________________________________________________________________________________________

activation_31 (Activation) (None, 4, 4, 1024) 0 add_10[0][0]

__________________________________________________________________________________________________

res4d_branch2a (Conv2D) (None, 4, 4, 256) 262400 activation_31[0][0]

__________________________________________________________________________________________________

bn4d_branch2a (BatchNormalizati (None, 4, 4, 256) 1024 res4d_branch2a[0][0]

__________________________________________________________________________________________________

activation_32 (Activation) (None, 4, 4, 256) 0 bn4d_branch2a[0][0]

__________________________________________________________________________________________________

res4d_branch2b (Conv2D) (None, 4, 4, 256) 590080 activation_32[0][0]

__________________________________________________________________________________________________

bn4d_branch2b (BatchNormalizati (None, 4, 4, 256) 1024 res4d_branch2b[0][0]

__________________________________________________________________________________________________

activation_33 (Activation) (None, 4, 4, 256) 0 bn4d_branch2b[0][0]

__________________________________________________________________________________________________

res4d_branch2c (Conv2D) (None, 4, 4, 1024) 263168 activation_33[0][0]

__________________________________________________________________________________________________

bn4d_branch2c (BatchNormalizati (None, 4, 4, 1024) 4096 res4d_branch2c[0][0]

__________________________________________________________________________________________________

add_11 (Add) (None, 4, 4, 1024) 0 bn4d_branch2c[0][0], activation_31[0][0]

__________________________________________________________________________________________________

activation_34 (Activation) (None, 4, 4, 1024) 0 add_11[0][0]

__________________________________________________________________________________________________

res4e_branch2a (Conv2D) (None, 4, 4, 256) 262400 activation_34[0][0]

__________________________________________________________________________________________________

bn4e_branch2a (BatchNormalizati (None, 4, 4, 256) 1024 res4e_branch2a[0][0]

__________________________________________________________________________________________________

activation_35 (Activation) (None, 4, 4, 256) 0 bn4e_branch2a[0][0]

__________________________________________________________________________________________________

res4e_branch2b (Conv2D) (None, 4, 4, 256) 590080 activation_35[0][0]

__________________________________________________________________________________________________

bn4e_branch2b (BatchNormalizati (None, 4, 4, 256) 1024 res4e_branch2b[0][0]

__________________________________________________________________________________________________

activation_36 (Activation) (None, 4, 4, 256) 0 bn4e_branch2b[0][0]

__________________________________________________________________________________________________

res4e_branch2c (Conv2D) (None, 4, 4, 1024) 263168 activation_36[0][0]

__________________________________________________________________________________________________

bn4e_branch2c (BatchNormalizati (None, 4, 4, 1024) 4096 res4e_branch2c[0][0]

__________________________________________________________________________________________________

add_12 (Add) (None, 4, 4, 1024) 0 bn4e_branch2c[0][0], activation_34[0][0]

__________________________________________________________________________________________________

activation_37 (Activation) (None, 4, 4, 1024) 0 add_12[0][0]

__________________________________________________________________________________________________

res4f_branch2a (Conv2D) (None, 4, 4, 256) 262400 activation_37[0][0]

__________________________________________________________________________________________________

bn4f_branch2a (BatchNormalizati (None, 4, 4, 256) 1024 res4f_branch2a[0][0]

__________________________________________________________________________________________________

activation_38 (Activation) (None, 4, 4, 256) 0 bn4f_branch2a[0][0]

__________________________________________________________________________________________________

res4f_branch2b (Conv2D) (None, 4, 4, 256) 590080 activation_38[0][0]

__________________________________________________________________________________________________

bn4f_branch2b (BatchNormalizati (None, 4, 4, 256) 1024 res4f_branch2b[0][0]

__________________________________________________________________________________________________

activation_39 (Activation) (None, 4, 4, 256) 0 bn4f_branch2b[0][0]

__________________________________________________________________________________________________

res4f_branch2c (Conv2D) (None, 4, 4, 1024) 263168 activation_39[0][0]

__________________________________________________________________________________________________

bn4f_branch2c (BatchNormalizati (None, 4, 4, 1024) 4096 res4f_branch2c[0][0]

__________________________________________________________________________________________________

add_13 (Add) (None, 4, 4, 1024) 0 bn4f_branch2c[0][0], activation_37[0][0]

__________________________________________________________________________________________________

activation_40 (Activation) (None, 4, 4, 1024) 0 add_13[0][0]

__________________________________________________________________________________________________

res5a_branch2a (Conv2D) (None, 2, 2, 512) 524800 activation_40[0][0]

__________________________________________________________________________________________________

bn5a_branch2a (BatchNormalizati (None, 2, 2, 512) 2048 res5a_branch2a[0][0]

__________________________________________________________________________________________________

activation_41 (Activation) (None, 2, 2, 512) 0 bn5a_branch2a[0][0]

__________________________________________________________________________________________________

res5a_branch2b (Conv2D) (None, 2, 2, 512) 2359808 activation_41[0][0]

__________________________________________________________________________________________________

bn5a_branch2b (BatchNormalizati (None, 2, 2, 512) 2048 res5a_branch2b[0][0]

__________________________________________________________________________________________________

activation_42 (Activation) (None, 2, 2, 512) 0 bn5a_branch2b[0][0]

__________________________________________________________________________________________________

res5a_branch2c (Conv2D) (None, 2, 2, 2048) 1050624 activation_42[0][0]

__________________________________________________________________________________________________

res5a_branch1 (Conv2D) (None, 2, 2, 2048) 2099200 activation_40[0][0]

__________________________________________________________________________________________________

bn5a_branch2c (BatchNormalizati (None, 2, 2, 2048) 8192 res5a_branch2c[0][0]

__________________________________________________________________________________________________

bn5a_branch1 (BatchNormalizatio (None, 2, 2, 2048) 8192 res5a_branch1[0][0]

__________________________________________________________________________________________________

add_14 (Add) (None, 2, 2, 2048) 0 bn5a_branch2c[0][0], bn5a_branch1[0][0]

__________________________________________________________________________________________________

activation_43 (Activation) (None, 2, 2, 2048) 0 add_14[0][0]

__________________________________________________________________________________________________

res5b_branch2a (Conv2D) (None, 2, 2, 512) 1049088 activation_43[0][0]

__________________________________________________________________________________________________

bn5b_branch2a (BatchNormalizati (None, 2, 2, 512) 2048 res5b_branch2a[0][0]

__________________________________________________________________________________________________

activation_44 (Activation) (None, 2, 2, 512) 0 bn5b_branch2a[0][0]

__________________________________________________________________________________________________

res5b_branch2b (Conv2D) (None, 2, 2, 512) 2359808 activation_44[0][0]

__________________________________________________________________________________________________

bn5b_branch2b (BatchNormalizati (None, 2, 2, 512) 2048 res5b_branch2b[0][0]

__________________________________________________________________________________________________

activation_45 (Activation) (None, 2, 2, 512) 0 bn5b_branch2b[0][0]

__________________________________________________________________________________________________

res5b_branch2c (Conv2D) (None, 2, 2, 2048) 1050624 activation_45[0][0]

__________________________________________________________________________________________________

bn5b_branch2c (BatchNormalizati (None, 2, 2, 2048) 8192 res5b_branch2c[0][0]

__________________________________________________________________________________________________

add_15 (Add) (None, 2, 2, 2048) 0 bn5b_branch2c[0][0], activation_43[0][0]

__________________________________________________________________________________________________

activation_46 (Activation) (None, 2, 2, 2048) 0 add_15[0][0]

__________________________________________________________________________________________________

res5c_branch2a (Conv2D) (None, 2, 2, 512) 1049088 activation_46[0][0]

__________________________________________________________________________________________________