Mitigating hate speech online using AI

source link: https://uxdesign.cc/case-study-mitigating-hate-speech-online-using-ai-204b555a91d7



Employing AI to reduce the emotional toll of combating online hate speech.

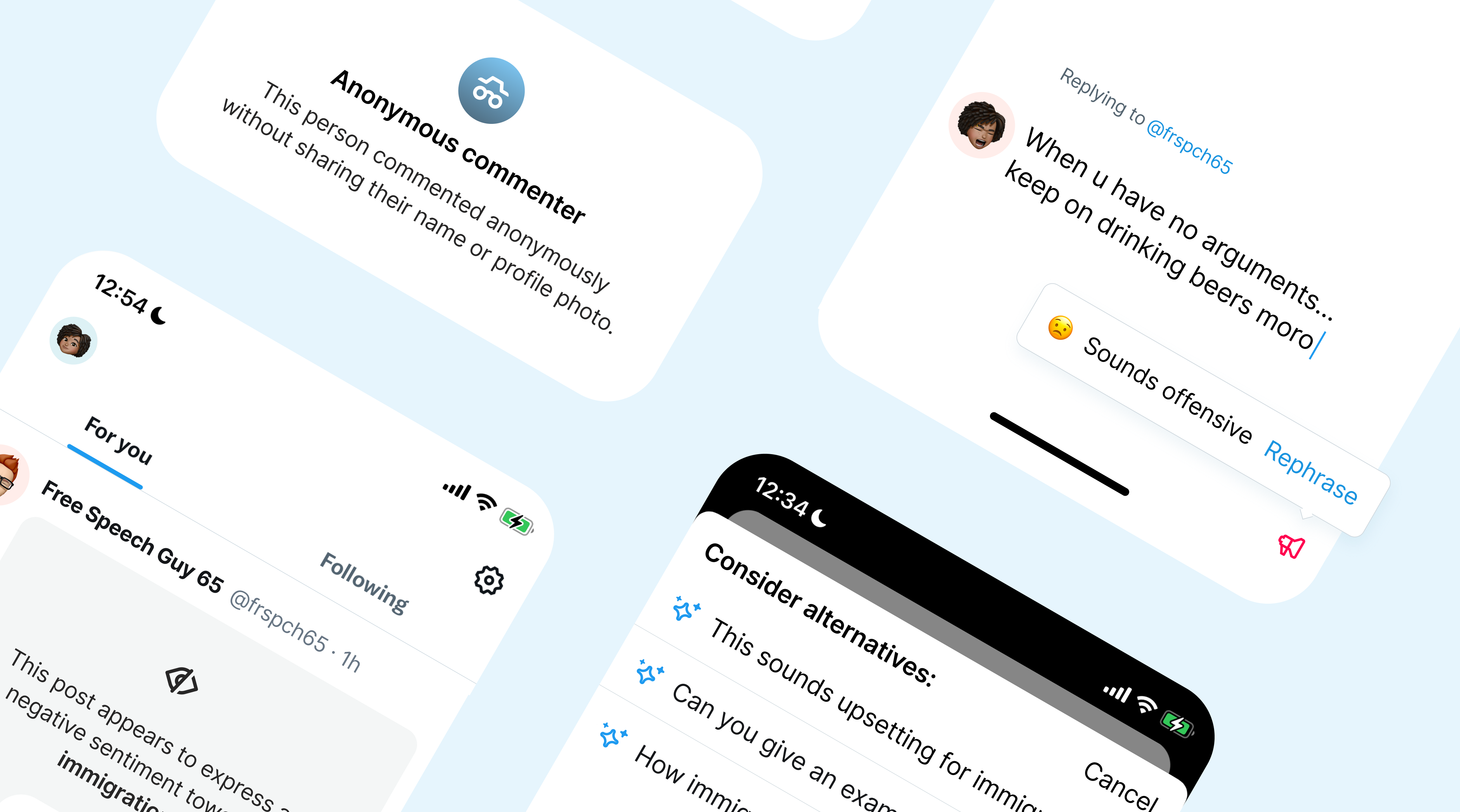

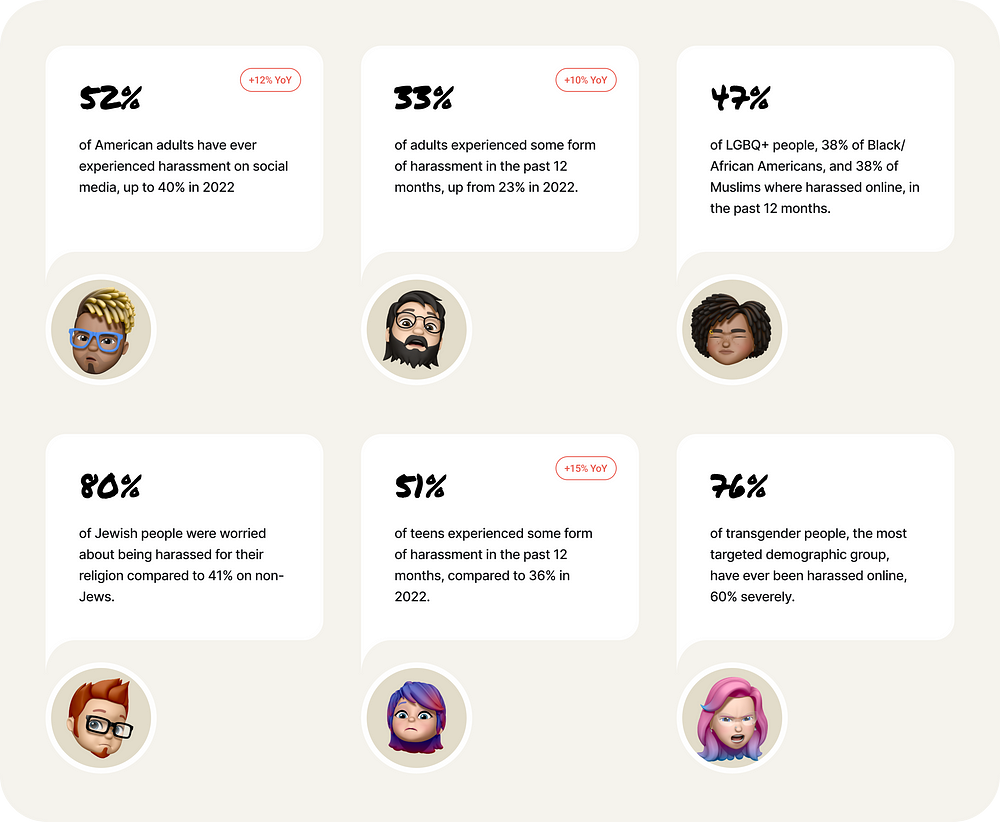

Data reported by the US [3] [4] [5] [6], the UK [7], and European countries [8] indicate that in recent years, online hate speech has exploded, and hate-related crimes are at a record high.

Most of the hate speech still occurs on mainstream social media platforms.

Despite tech companies’ commitments to making their platforms safe, hate speech continues to find its way onto major platforms [9] [10] [11].

![Top 3 platforms on which respondents experienced online abuse (percentages — multiple answers). [9], p.24](https://miro.medium.com/v2/resize:fit:700/1*TdXDGAsecSiXYWtkz6VJHQ.png)

During the COVID-19 pandemic, there was a spike in hate speech targeting women, with the majority of the abuse occurring on mainstream platforms such as Twitter (now X), Facebook, Instagram, WhatsApp, Slack, and Snapchat.

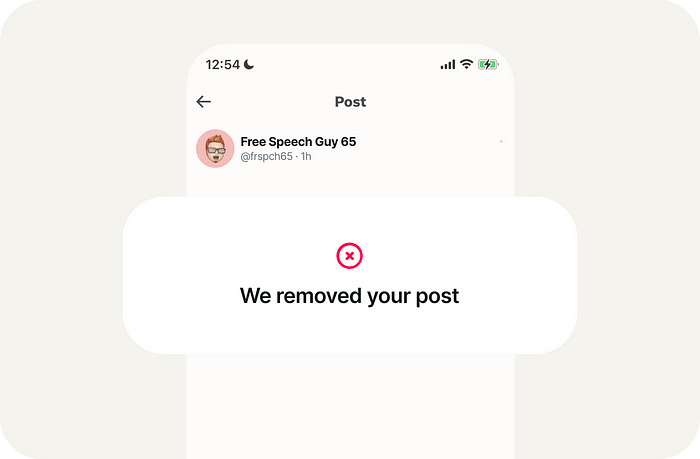

Social media platforms claim to take action, but there are caveats.

Social platforms report record numbers in the removal of hate speech [12], yet their commitments are not always consistent [13] [14] [15].

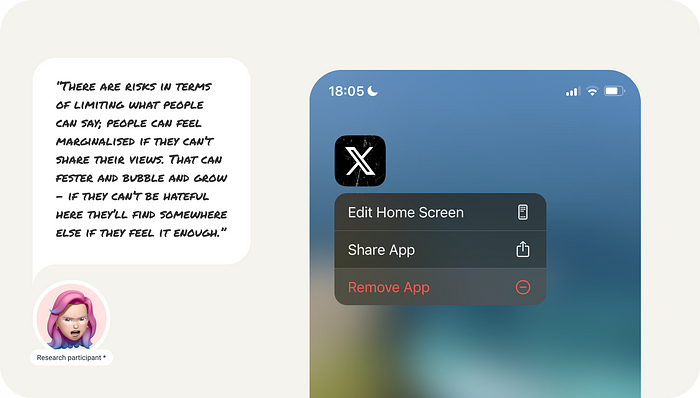

In addition, content removal serves to fracture and polarize the internet [16] [17]. As the UN Secretary-General puts it, ‘Social media provides a global megaphone for hate’ [18].

Taking down content hardly changes the minds of people who spread hate.

Straightforward moderation hardly changes the minds of those who speak hatefully [19]. Instead, they might ‘go underground’, sharing hate speech in other online places [20] [21].

The shooter who attacked a synagogue in Pittsburgh used Gab to share antisemitic hate speech and hinted at his plans before he killed eleven people and injured six more [22].

Research indicates empathetic responses can be used to alter offender actions.

Counter-speech messages that generate empathy for hate speech victims are more likely to convince senders to change their ways [23] [24] [25].

Researchers discovered that messages sparking empathy were 2.5 times more effective than humor or warnings of consequences in getting authors to remove offensive comments.

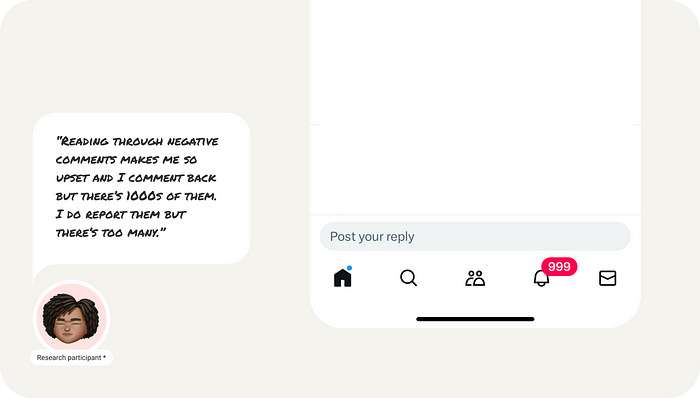

Although empathic counter-speech can be effective, it has its downsides.

The main reason people are hesitant to engage in counter-speech is that it can be emotionally taxing and potentially risky [24].

Counter-speakers often become targets of online attacks themselves, and being empathic towards offenders makes it even harder.

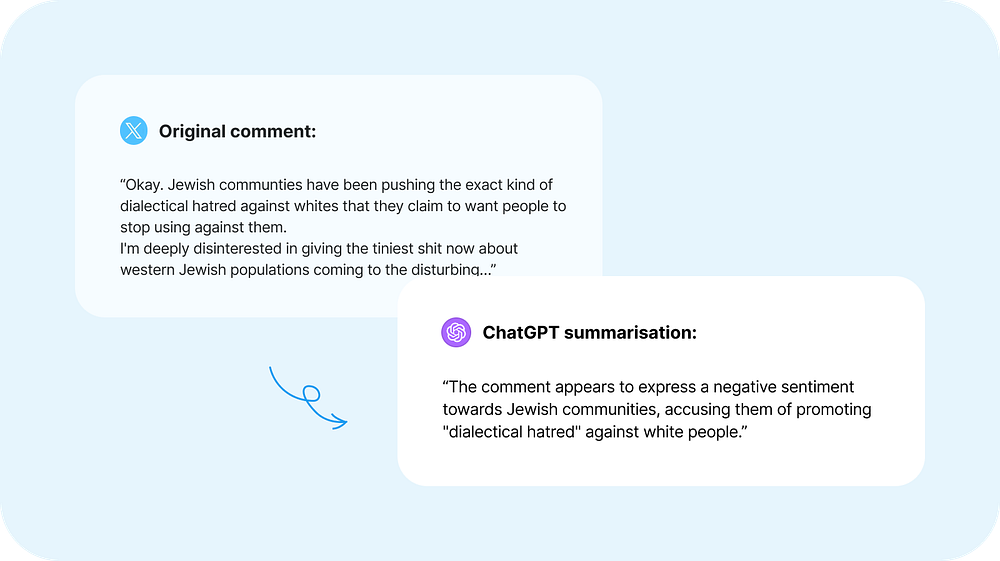

Current LLMs can help reduce the downsides associated with empathic responses.

LLMs like ChatGPT can understand the language semantics of comments and provide context for hate speech.

They can also understand human biases involved and generate empathic replies for effective counter-speech.

How can social media platforms use LLMs to mitigate hate speech?

There are a few aspects that social media platforms can address to help reduce the downsides associated with empathic responses.

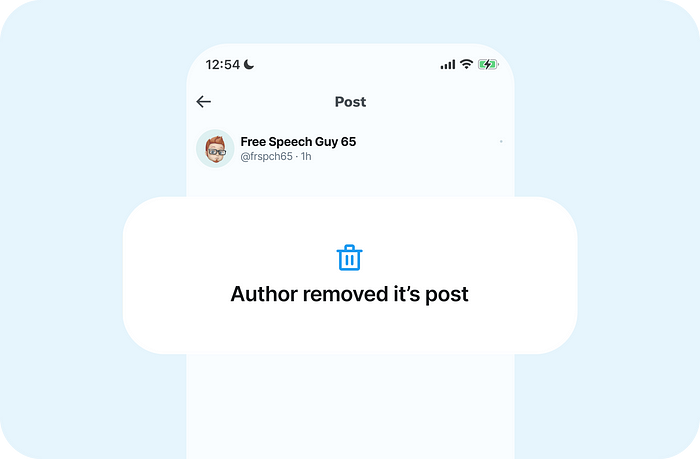

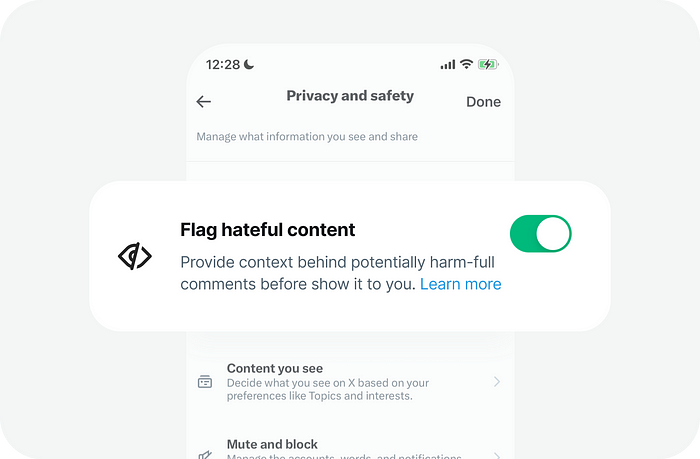

Make combating hate speech less emotionally taxing.

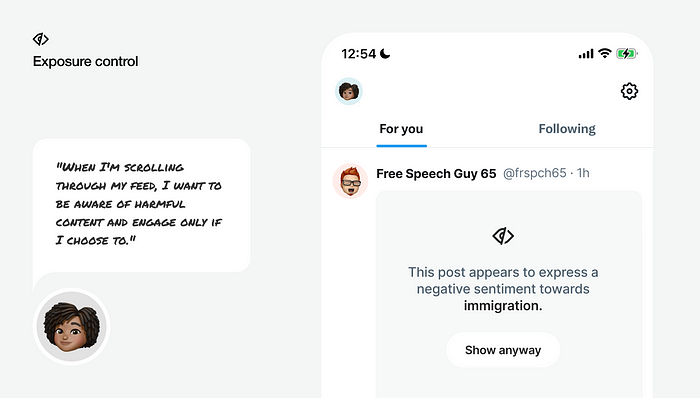

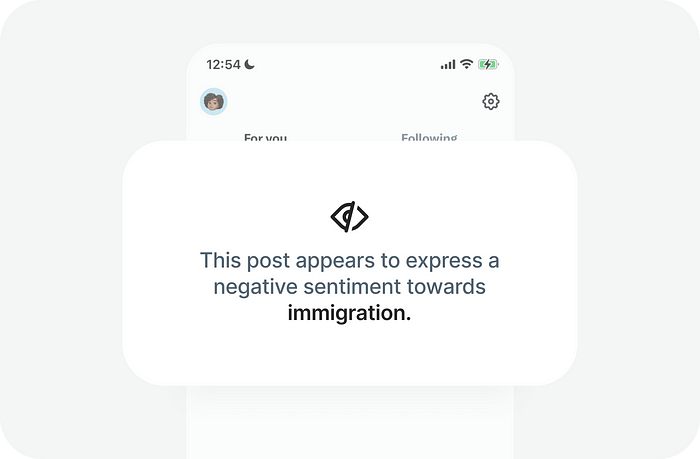

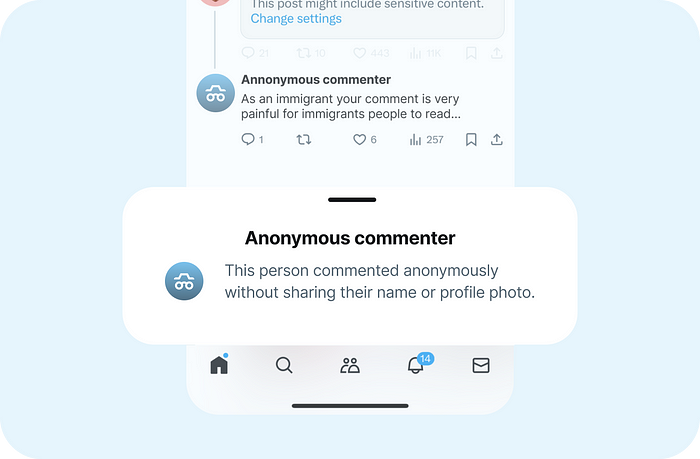

There is a substantial number of people willing to combat hate speech online. Social platforms can support these individuals by flagging instances of hate speech.

Big social media platforms already place sensitive content, including nudity, violence, and other sensitive topics, behind a content warning wall, but this does not extend to hate speech.

Providing context for harmful content can protect people from excessive exposure to hate speech and help them choose their battles more wisely.

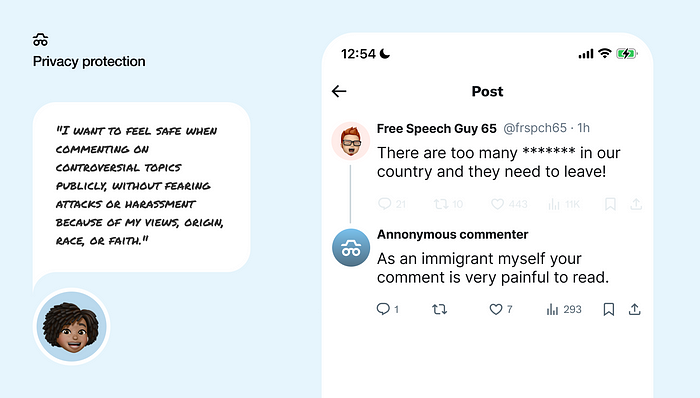

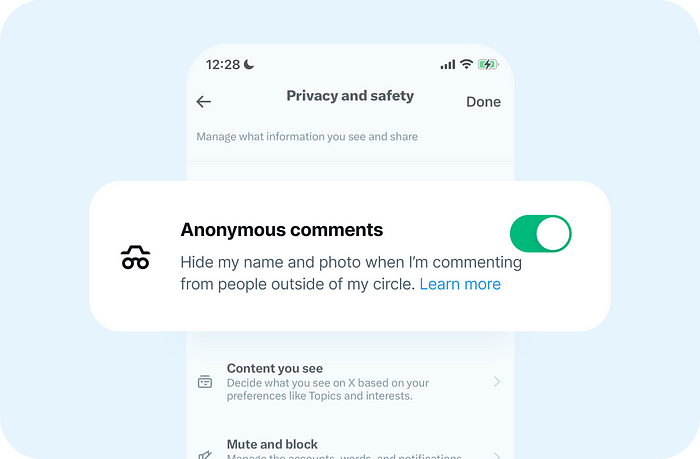

Protect people who engage and speak up.

Furthermore, people might be more inclined to join efforts in combating hate speech if they feel safe while commenting on it.

Social media platforms like Facebook already implement features to protect personal information [26]. They could take a further step by extending these policies to combat hate speech.

Hiding personal information from people outside of your circle, especially when commenting on hot topics, might protect individuals from vulnerable social groups and empower them to speak out.
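The visibility rule described above can be sketched in a few lines. This is a minimal illustration, not any platform's actual policy: the `Profile` type, the `circle` field, the `sensitive_thread` flag, and the placeholder name "Community member" are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class Profile:
    user_id: str
    display_name: str
    details: Dict[str, str]  # e.g. location, employer (hypothetical fields)
    circle: Set[str] = field(default_factory=set)  # IDs of trusted connections


def visible_profile(author: Profile, viewer_id: str, sensitive_thread: bool) -> Dict[str, str]:
    """Return the profile fields a viewer may see next to the author's comment.

    On a thread marked sensitive, viewers outside the author's circle
    only see a generic placeholder name (an assumed design choice).
    """
    in_circle = viewer_id == author.user_id or viewer_id in author.circle
    if sensitive_thread and not in_circle:
        return {"display_name": "Community member"}
    return {"display_name": author.display_name, **author.details}


author = Profile("u1", "Alex", {"location": "Berlin"}, circle={"u2"})
print(visible_profile(author, "u3", sensitive_thread=True))
print(visible_profile(author, "u2", sensitive_thread=True))
```

How a thread gets marked as sensitive is left open here; it could come from moderators, from the author, or from an automated classifier.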

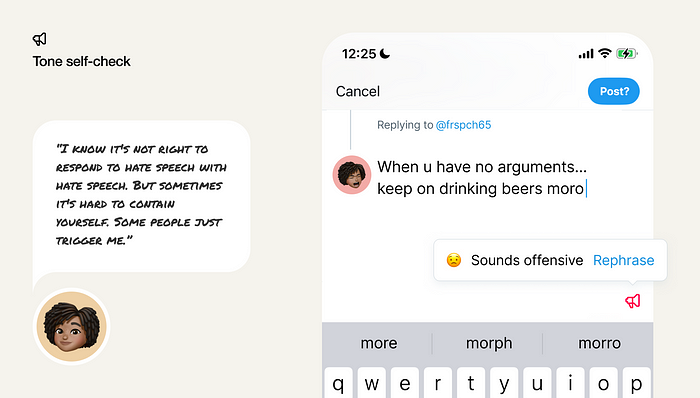

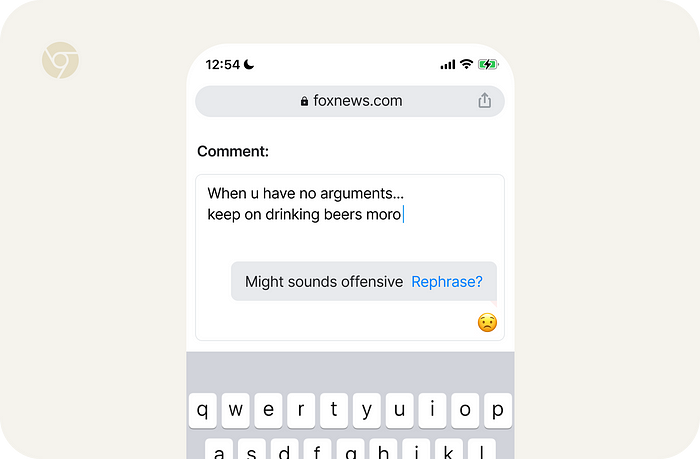

Provide guard rails for emotional swings.

People often use hate speech to relieve tension and frustration [27, p. 5]. The Gallup Global Emotions Report showed that one in three people worldwide felt daily pain in 2023 [28]. Under that kind of strain, any of us can slip into hateful language from time to time.

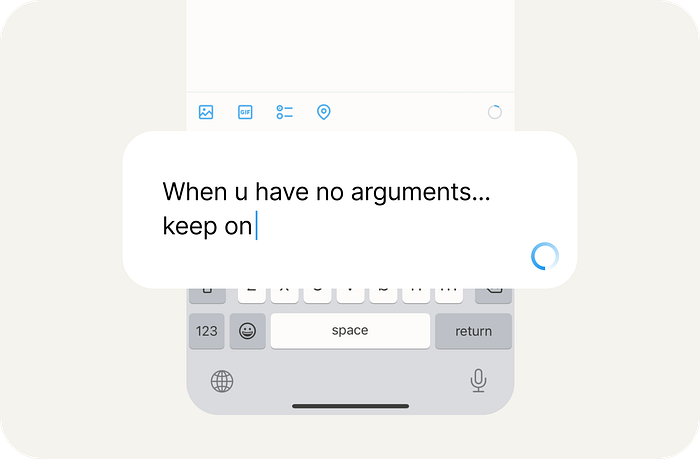

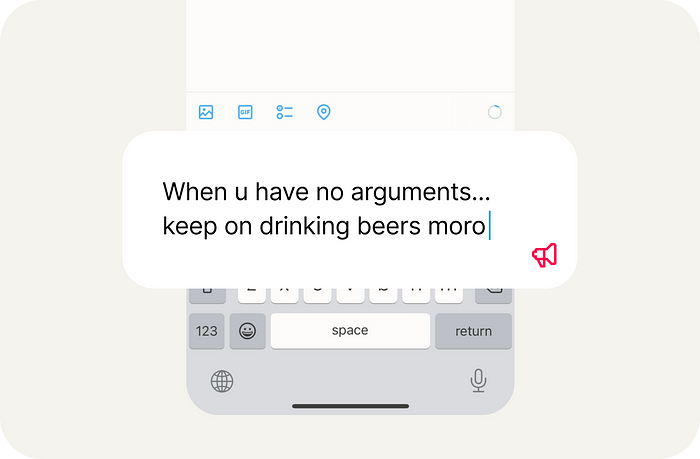

Companies like Grammarly can analyze message sentiment in real-time [29], an approach that social media platforms could adopt to help prevent hate speech.

Automatically checking sentiment while typing a post or reply can help individuals in emotional states avoid posting hateful or inappropriate comments.
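As a rough sketch of such a pre-post check, the function below scores a draft and returns a gentle nudge when the score crosses a threshold. Everything here is hypothetical: `review_draft`, the threshold value, and especially `toy_score`, which is a crude word-list stand-in for a real sentiment or toxicity model and not how Grammarly or any platform works.

```python
from typing import Callable, Optional


def review_draft(
    text: str,
    score_fn: Callable[[str], float],
    threshold: float = 0.7,
) -> Optional[str]:
    """Return a nudge message if the draft scores as hostile, else None.

    score_fn is an assumed scoring function returning a value in [0.0, 1.0].
    The user stays in control: the nudge is a suggestion, not a block.
    """
    if not text.strip():
        return None
    if score_fn(text) >= threshold:
        return (
            "This message may come across as hostile. "
            "Do you want to rephrase it before posting?"
        )
    return None


def toy_score(text: str) -> float:
    """Toy scorer for demonstration only: share of hostile words, scaled."""
    hostile_words = {"hate", "stupid", "disgusting"}
    words = [w.strip(".,!?").lower() for w in text.split()]
    if not words:
        return 0.0
    hits = sum(1 for w in words if w in hostile_words)
    return min(1.0, hits / len(words) * 5)


print(review_draft("Thanks for sharing this", toy_score))
print(review_draft("I hate these stupid people", toy_score))
```

In a real interface the check would likely run after a short pause in typing rather than on every keystroke, and the scorer would be a trained model rather than a word list.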

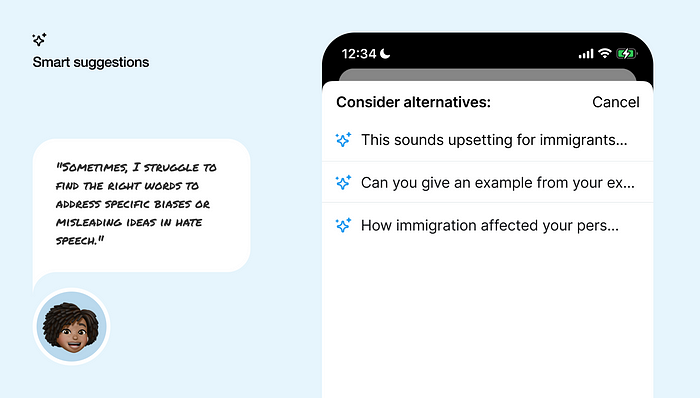

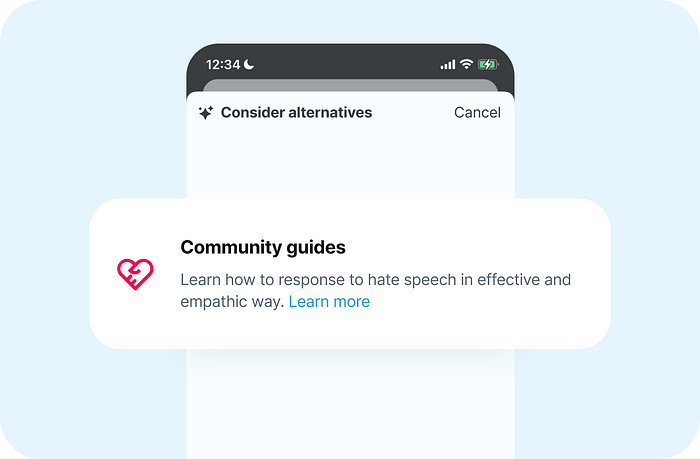

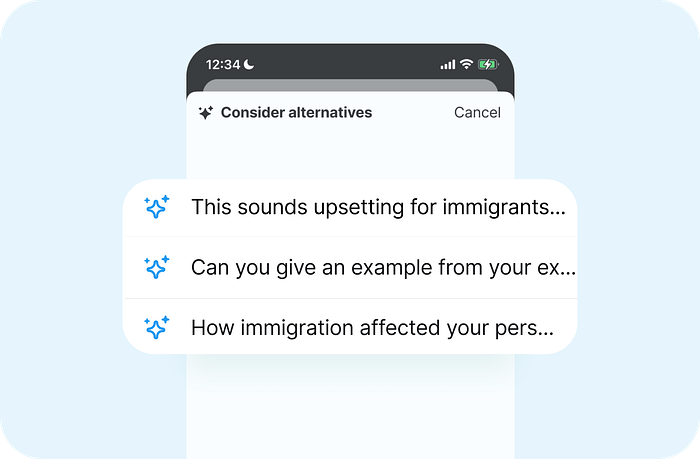

Help people use counter-speech effectively.

If there were tools to simplify and enhance responses to hateful posts or comments, more people might be encouraged to join the fight against hate speech.

Social platforms offer resources and guidance to users encountering individuals with self-harm and suicidal thoughts [30], yet similar support is not extended for instances of hate speech [31].

By offering comprehensive guides and suggestions generated by LLMs, social media platforms can help people know how to act when they face hate speech and engage effectively [32].

Counter-speech beyond social platforms.

The use of counter-speech could be extended to modern browsers and operating systems (OS).

Browser settings or browser extension

Browsers such as Safari, Chrome, and Brave could incorporate built-in features or extensions enabling users to self-check their communication on platforms beyond mainstream social media, including comments on tabloids and news websites.

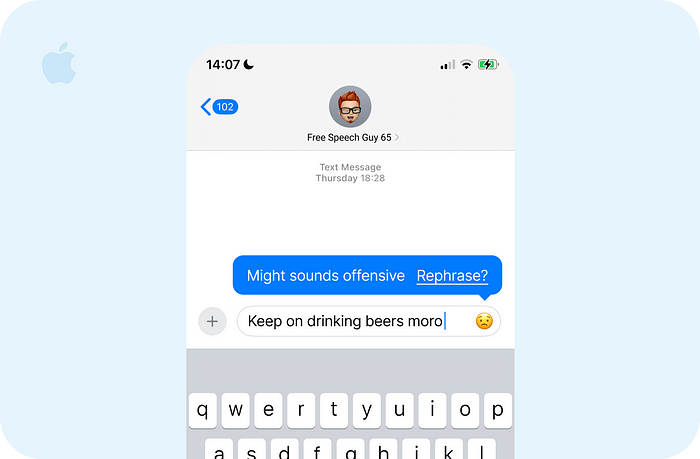

Mobile and desktop platforms

iOS, along with its sensitive content feature, could introduce a function to flag hate speech in iMessage or while browsing the web in Safari. Users could control this feature directly from their device settings or through social platform preferences, utilizing portable LLMs for support [33].

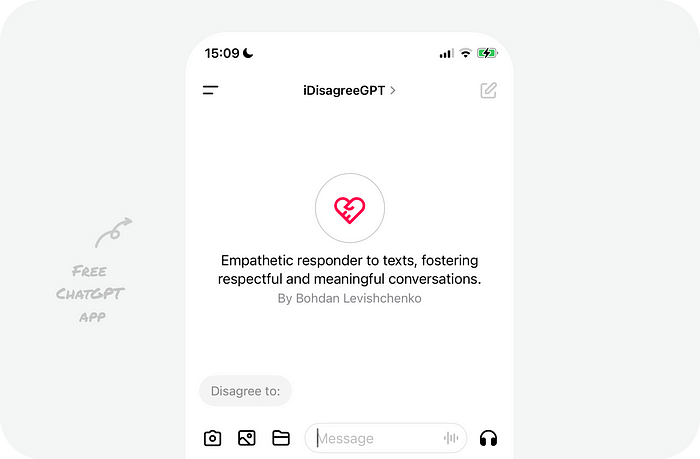

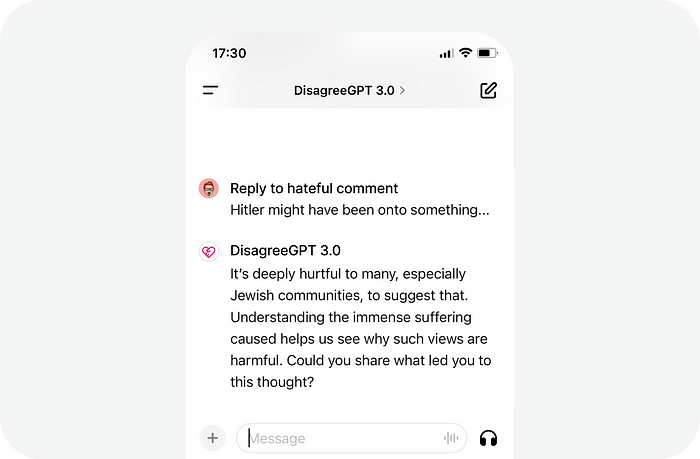

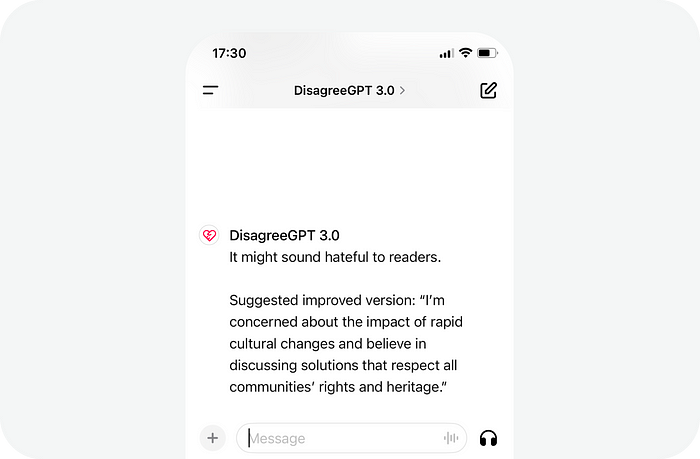

Counter-speech app.

To demonstrate the concept, I’ve constructed a custom ChatGPT. This version can assist users in self-checking their messages or crafting responses with empathy to hateful comments.

It draws on UN guidelines for debating hate speech and is aware of the cognitive biases that are often present in it.

You just need to provide the text you’re about to send or the hateful comment you would like to push back on.
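The two ways of using the assistant (self-checking a draft, or replying to a hateful comment) could be expressed as a small prompt builder. This is only an illustrative sketch: the system prompt wording, the mode names `self_check` and `reply`, and the `role`/`content` message layout (a common convention for chat LLMs) are assumptions, not the configuration of the custom ChatGPT described above.

```python
from typing import Dict, List

# Hypothetical instructions; the actual custom GPT's instructions are not published here.
SYSTEM_PROMPT = (
    "You help people respond to hate speech with empathy. "
    "Follow UN guidance on countering hate speech, point out cognitive "
    "biases where relevant, and never answer hostility with hostility."
)

TASKS = {
    "self_check": (
        "Review the message I am about to send. Point out anything that "
        "could read as hateful and suggest a calmer wording."
    ),
    "reply": (
        "Help me write a short, empathic reply to the following hateful comment."
    ),
}


def build_messages(mode: str, text: str) -> List[Dict[str, str]]:
    """Build a chat-style message list for one of the two supported modes."""
    if mode not in TASKS:
        raise ValueError(f"Unknown mode: {mode!r}")
    cleaned = text.strip()
    if not cleaned:
        raise ValueError("Text must not be empty")
    user_content = f"{TASKS[mode]}\n\n---\n{cleaned}\n---"
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": user_content},
    ]


for message in build_messages("self_check", "I can't stand people like you."):
    print(message["role"], "->", message["content"][:60])
```

The resulting list would then be passed to whichever chat model is used; that call is deliberately left out because it depends on the provider.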
