Trying Node.js Test Runner

source link: https://glebbahmutov.com/blog/trying-node-test-runner/


In March of 2022 Node.js got a new built-in test runner via the node:test module. I have evaluated the test runner and made several presentations showing its features and comparing it with other test runners like Ava, Jest, and Mocha.

History #

Node.js appeared in 2009 and for its first 13 years did not have a built-in test runner. This gave rise to a number of 3rd party test runners:

| Test runner | Current major version | Age (years) |
|---|---|---|
| mocha | v10 | 11 |
| tap | v16 | 11 |
| tape | v5 | 10 |
| ava | v5 | 9 |
| jest | v27 | 7 |

I even wrote my own test runner 11 years ago: gt

I personally think Node.js follows the same "minimal core" philosophy as JavaScript itself. Thus things like linters, code formatters, and test runners are better made as 3rd party tools. While this was a good idea for a long time, now any language without a standard testing tool seems weird. Deno, Rust, Go - they all have their own built-in test runners.

Currently my favorite JavaScript testing stack is Ava for Node and Cypress for the web testing.

Node test runner #

Finally, in Node v18 a new built-in test runner was announced.

Node v18 test runner announcement

It was marked as experimental, and less than a year later it was marked as stable in Node v20.

Node test runner marked stable

From the first announcement on April 19th 2022 to the stable version announcement on March 8th 2023 it took less than a year. I think this is precisely because there are so many good 3rd party test runners that the node:test could quickly implement the features that the testing community needs and that were validated by mocha, ava, jest, and others.

The test module #

🎁 All source and test examples I show in this blog post can be found in my bahmutov/node-tests repo.

The new node:test module adds two things:

- The new test function to write your tests

import test from 'node:test'

- The --test command line flag in Node itself

# run all tests

$ node --test tests/*.mjs

Tip: if a JavaScript source file imports from node:test you can execute the tests even without the --test CLI flag.

The node:test module works great with another built-in Node module that has been around for a long time: node:assert

import test from 'node:test'

import assert from 'node:assert/strict'

test('hello', () => {

const message = 'Hello'

assert.equal(message, 'Hello', 'checking the greeting')

})

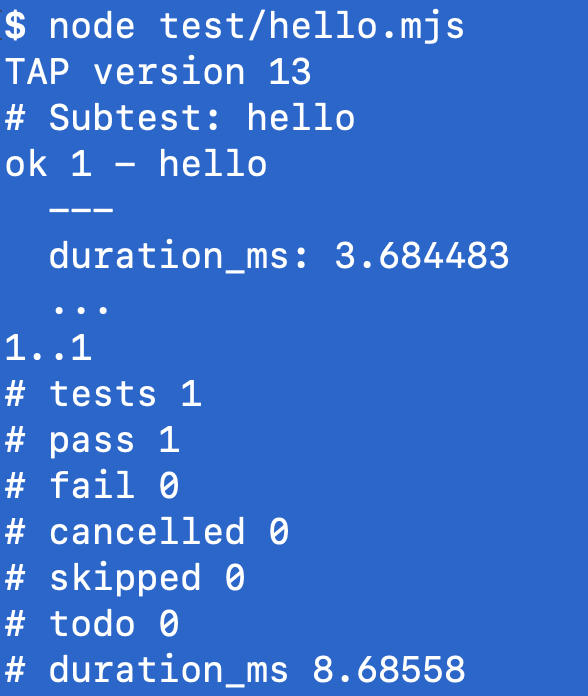

Run the hello test

Installation #

Having a built-in node:test module saves the time spent downloading and installing a 3rd party module and its dependencies. All you need is the right Node version. The test module has been back-ported to other Node.js versions. I used Node v19 to evaluate the test runner, and all I needed to "install" it was to run "nvm install" because I use the nvm tool on my machine.

$ nvm ls-remote

$ nvm install 19

Downloading and installing node v19.6.0...

Downloading https://nodejs.org/dist/v19.6.0/...

############################################## 100.0%

Computing checksum with shasum -a 256

Checksums matched!

Now using node v19.6.0 (npm v9.4.0)

Other test runners download and use dozens to hundreds of NPM dependencies

| Test runner | NPM packages installed |
|---|---|
| Mocha | 78 |
| Mocha + Chai + Sinon | 445 |
| Ava | 198 |
| Jest | 429 |

Installing dependencies takes time. For example, time how long it takes to add Jest:

$ npm i -D jest

added 429 packages, and audited 430 packages in 10s

Test execution #

Let's find and run all tests

$ node --test

# runs tests in "test" subfolder

Or run tests in a JS file that imports from node:test

$ node tests/demo.mjs

Running tests works great with the new built-in Node watch mode

$ node --watch tests/demo.mjs

$ node --watch --test tests/*.mjs

Node test runner can find all test specs following a naming convention. Given the following folder structure with a mixture of source files and test files:

tests/
  names/
    another.test.mjs
    my_test.mjs
    test-1.mjs
    utils.mjs

You can run all tests using the command

$ node --test tests/names

- tests/names/another.test.mjs

- tests/names/my_test.mjs

- tests/names/test-1.mjs

In summary, any source file that starts or ends its name with "test" will be considered a spec file with tests.
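The naming rule above can be approximated with a small predicate. This is a simplified sketch of the convention as described in this post, not Node's actual implementation; looksLikeSpecFile is a hypothetical helper name, the list of extensions is an assumption, and the real runner's rules may differ between Node versions. It is written as CommonJS so it runs as a plain .js file.

```javascript
const path = require('node:path')

// Hypothetical helper: approximates the "starts or ends with test" rule.
// Assumption: only .js, .cjs and .mjs files are considered.
function looksLikeSpecFile(filename) {
  const ext = path.extname(filename)
  if (!['.js', '.cjs', '.mjs'].includes(ext)) {
    return false
  }
  const name = path.basename(filename, ext)
  // "test", "test-1" (prefix) or "another.test", "my_test" (suffix)
  return name === 'test' || /^test-/.test(name) || /[._-]test$/.test(name)
}

const files = ['another.test.mjs', 'my_test.mjs', 'test-1.mjs', 'utils.mjs']
console.log(files.filter(looksLikeSpecFile))
```

With the four files from the folder listing, the filter keeps the three spec files and drops utils.mjs.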

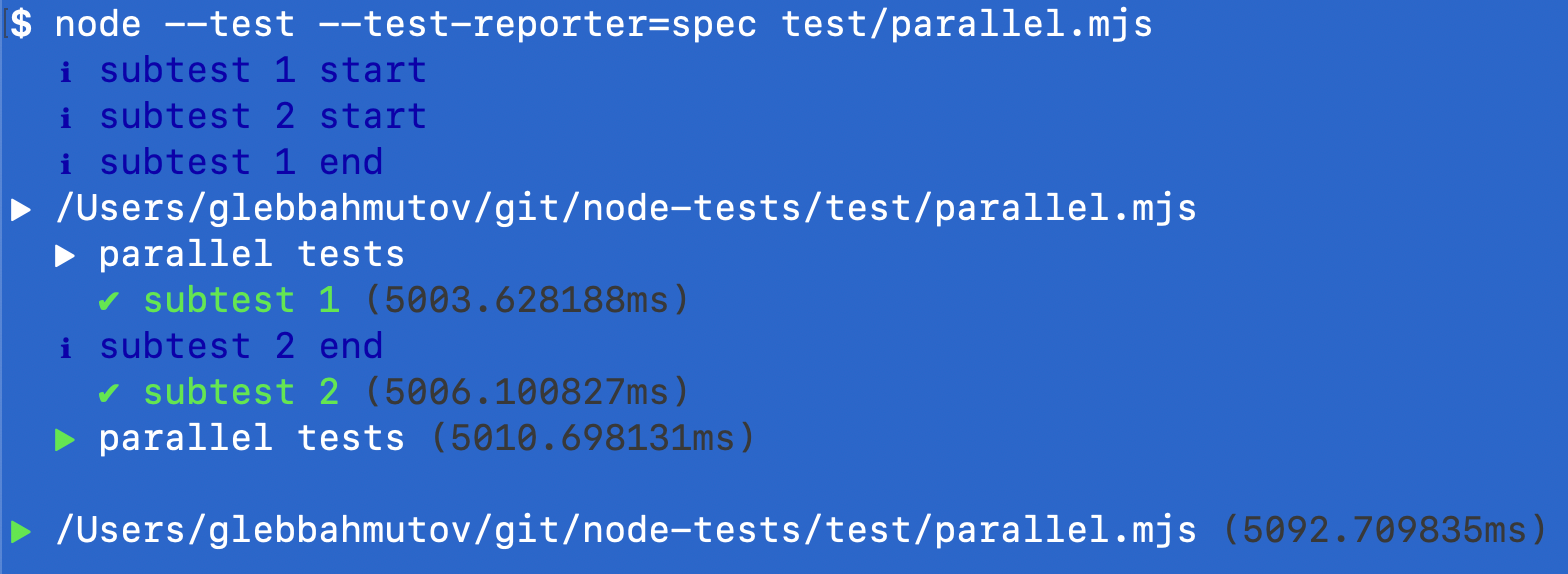

Parallel tests #

You can explicitly mark tests to run in parallel using the concurrency option

describe('parallel tests', { concurrency: true }, () => {

it('subtest 1', async () => {

console.log('subtest 1 start')

await delay(5000)

console.log('subtest 1 end')

})

it('subtest 2', async () => {

console.log('subtest 2 start')

await delay(5000)

console.log('subtest 2 end')

})

})

The above two tests will run in parallel and finish in about 5 seconds in total rather than 10, since each one takes 5 seconds to run
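The snippet above calls a delay helper that is not shown. A minimal sketch, assuming delay is just a promise-based sleep: Node's built-in node:timers/promises module provides one. The elapsedForTwoDelays function is a hypothetical helper that uses plain promises (not the test runner's own scheduling) to illustrate why two overlapping waits take about as long as one. It is written as CommonJS so it runs as a plain .js file.

```javascript
// Assumption: "delay" is a promise-based sleep; this aliases the built-in one.
const { setTimeout: delay } = require('node:timers/promises')

// Hypothetical helper: starts two waits at once and reports the elapsed time.
async function elapsedForTwoDelays(ms) {
  const started = Date.now()
  // both timers are started before either one is awaited
  await Promise.all([delay(ms), delay(ms)])
  return Date.now() - started
}

elapsedForTwoDelays(200).then((elapsed) => {
  // the waits overlap, so this is close to one delay, not two
  console.log('two %dms delays took about %dms', 200, elapsed)
})
```

In an ES module spec file the same helper could be pulled in with an import from node:timers/promises instead of require.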

The two tests ran in parallel

The test syntax #

The tests use modern async/await syntax, and you can nest tests inside other tests, and even create new tests dynamically

import test from 'node:test'

import assert from 'node:assert/strict'

test('top level test', async (t) => {

await t.test('subtest 1', (t) => {

assert.strictEqual(1, 1)

})

await t.test('subtest 2', (t) => {

assert.strictEqual(2, 2)

})

})

Note: be careful with making syntax mistakes. For example omitting the t in the nested t.test call will lead to very confusing errors.

BDD syntax

I prefer to nest tests using suites of tests with describe and it callbacks. They are included in the node:test module.

import { describe, it } from 'node:test'

import assert from 'node:assert/strict'

describe('top level test', () => {

it('subtest 1', () => {

assert.strictEqual(1, 1)

})

it('subtest 2', () => {

assert.strictEqual(2, 2)

})

})

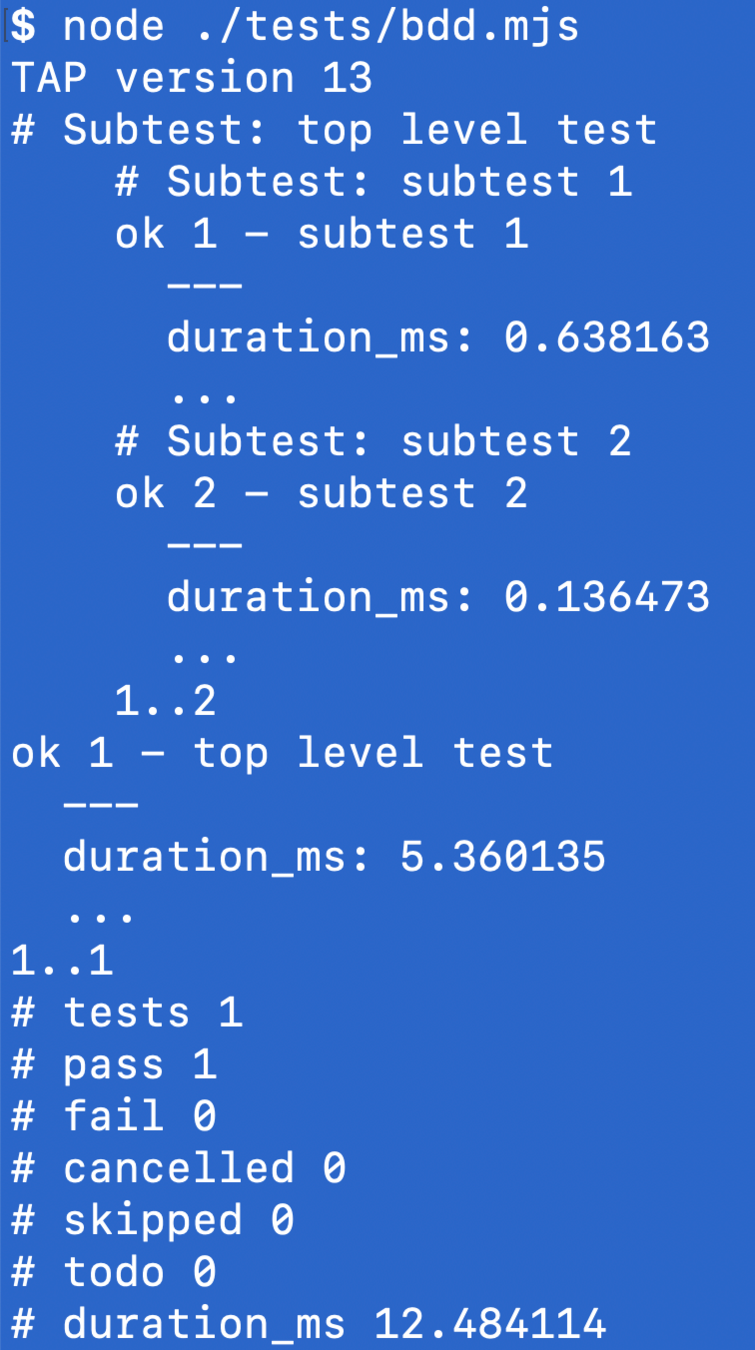

By default Node test runner uses TAP output, and it considers the above test to have just 1 top-level test. Which looks confusing honestly, since I would consider "subtest 1" and "subtest 2" to be the real tests.

TAP reports a single top-level test

Test reporters #

By default, node:test can generate TAP, spec, and dot reports

$ node --test --test-reporter tap # default

$ node --test --test-reporter spec

$ node --test --test-reporter dot

You can generate several reports at once; you just need to send each one to a different output destination

$ node --test --test-reporter dot \

--test-reporter-destination stdout \

--test-reporter spec \

--test-reporter-destination out.txt

Tip: make sure to specify all test runner options before listing the spec files or folders

# 🚨 DOES NOT WORK

$ node test/hello.mjs --test-reporter spec

# ✅ WORKS

$ node --test-reporter spec test/hello.mjs

I would consider putting the test command into the package.json scripts

{

"scripts": {

"test": "node --test",

"spec": "node --test --test-reporter spec"

}

}

The TAP output protocol is widely used and you can pipe it into 3rd party reporters, for example into faucet

# npm i -D faucet

$ node --test | npx faucet

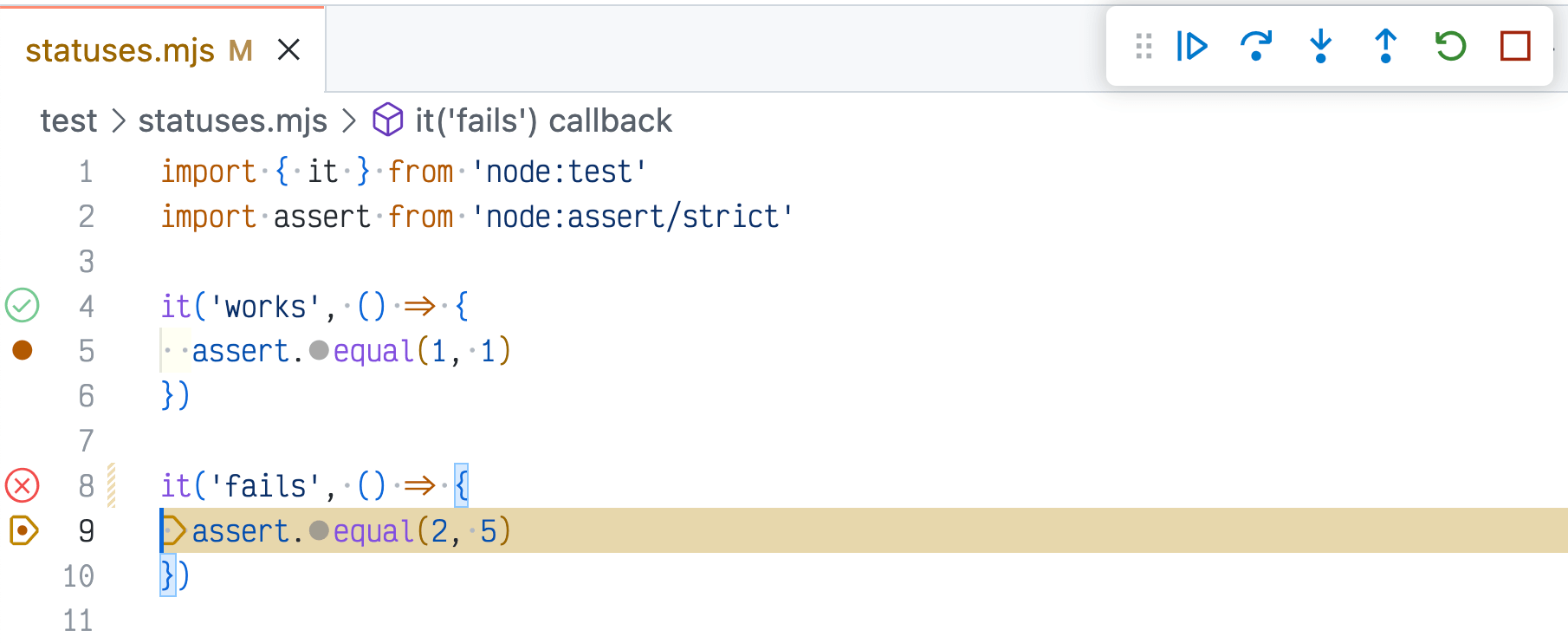

Test statuses #

Node test runner has 5 test statuses

it('works', () => {

assert.equal(1, 1)

})

it('fails', () => {

assert.equal(2, 5)

})

If the above tests finish, the test summary will show:

i tests 2

i pass 1

i fail 1

i cancelled 0

i skipped 0

i todo 0

The "pass" and "fail" are obvious. The first test passes, the second one has a failing assertion assert.equal(2, 5) so the test fails. What are the "cancelled", "skipped", and "todo" statuses?

Let's look at the "todo" and "skipped" tests

it.todo('loads data')

// SKIP: <issue link>

it.skip('stopped working', () => {

assert.equal(2, 5)

})

The "todo" tests are the ones we plan to implement. The skipped tests are the ones that we have, but they are failing for some reason, so we disabled them to investigate. I advise adding a comment with a GitHub / Jira issue link above each skipped test to communicate to everyone why the test is skipped.

The "cancelled" tests are special. Consider the following test file where the before hook throws an error

before(() => {

console.log('before hook')

throw new Error('Setup fails')

})

it('works', () => {

assert.equal(1, 1)

})

it('works again', () => {

assert.equal(1, 1)

})

The tests "works" and "works again" did not even execute, because the before hook failed. Thus these tests were cancelled.

Tip: it is fun to compare Node test statuses to Cypress Test Statuses.

Assertions #

By default you can use node:test by throwing your errors or by using the built-in node:assert module

import assert from 'node:assert/strict'

assert.ok(truthy, message)

assert.equal(value, expected, ...)

assert.deepEqual(...)

assert.match(value, regexp)

assert.throws(fn)

assert.rejects(asyncFn)

// plus "assertion.notX..."

The number of built-in assertions is quite small compared to assertion libraries like Chai or the ones built into Jest. Anything more complicated, like checking a property inside an object, is unavailable

expect({ /* object */ }).to.have.property(x)

expect({ /* object */ }).to.have.keys([x, y, z])

expect({ /* large object */ })

.to.deep.include({ ... known properties })

Of course, you can use Chai with node:test

// import assert from 'node:assert/strict'

import { assert } from 'chai'

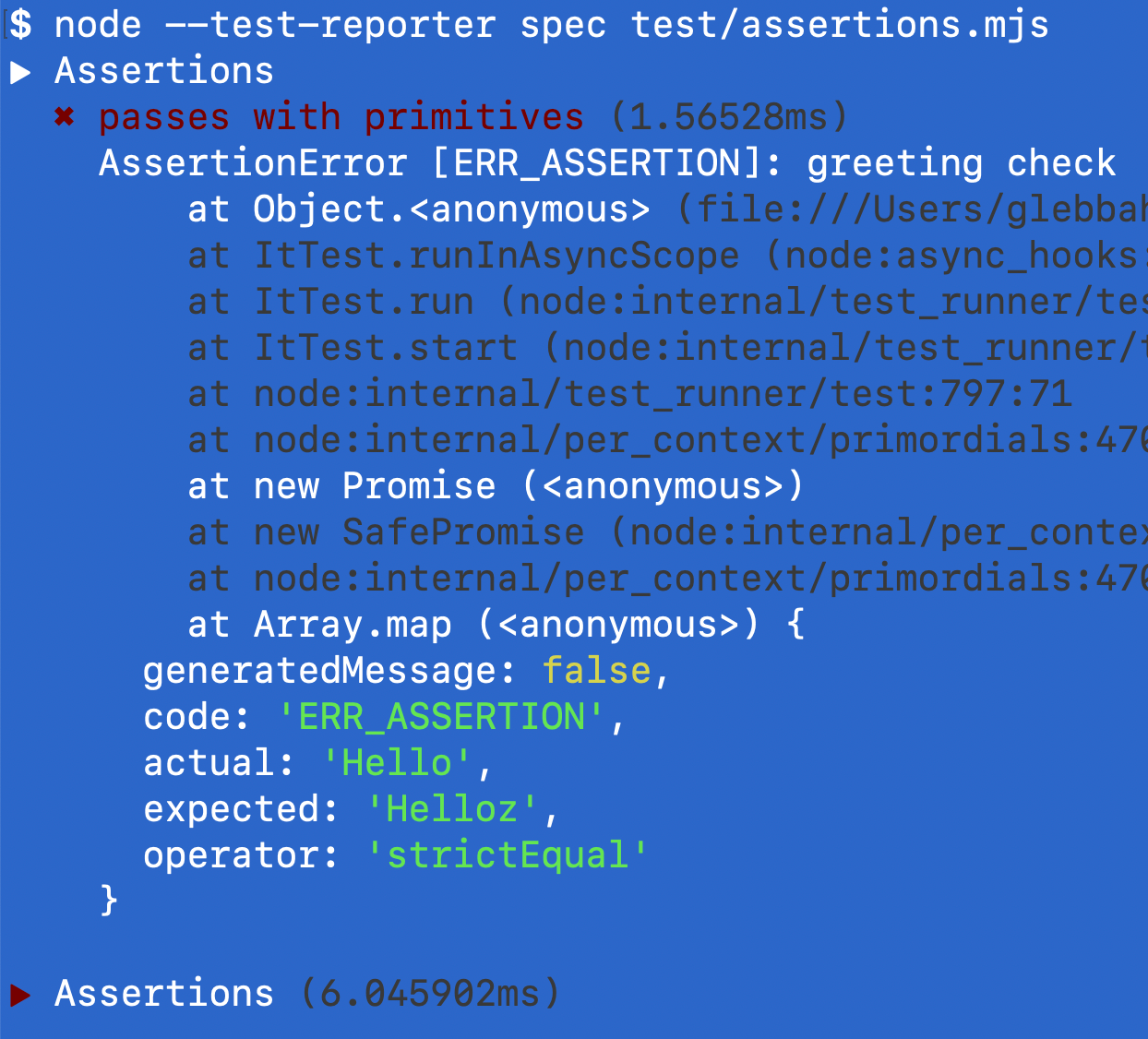

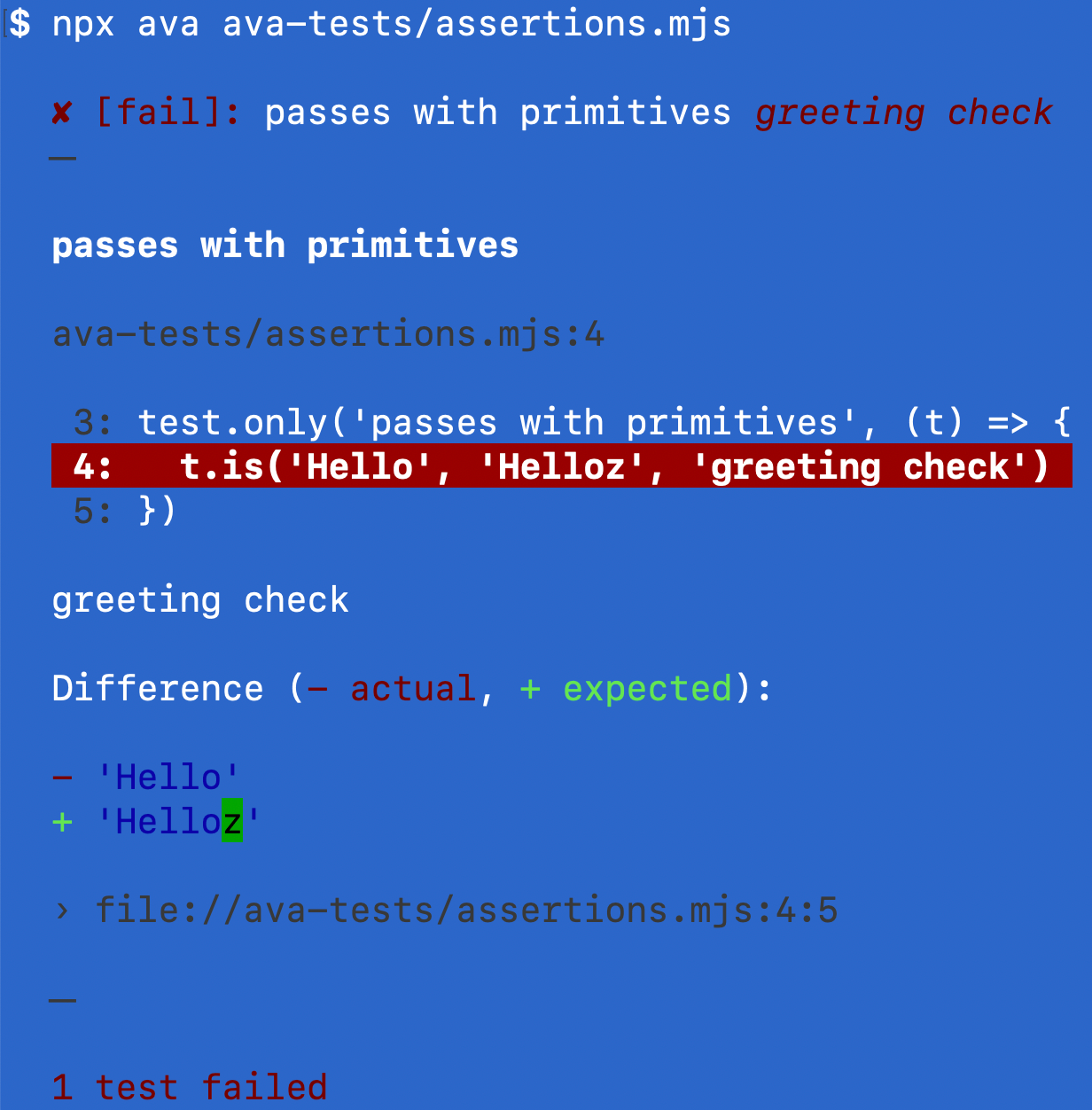

What I feel is still missing are good and helpful error messages when an assertion fails. For example, let's compare two strings that differ by a single character: Hello and Helloz

import { describe, it } from 'node:test'

import assert from 'node:assert/strict'

describe('Assertions', () => {

it('passes with primitives', () => {

assert.equal('Hello', 'Helloz', 'greeting check')

})

})

Here is the error shown in the terminal

The string difference error

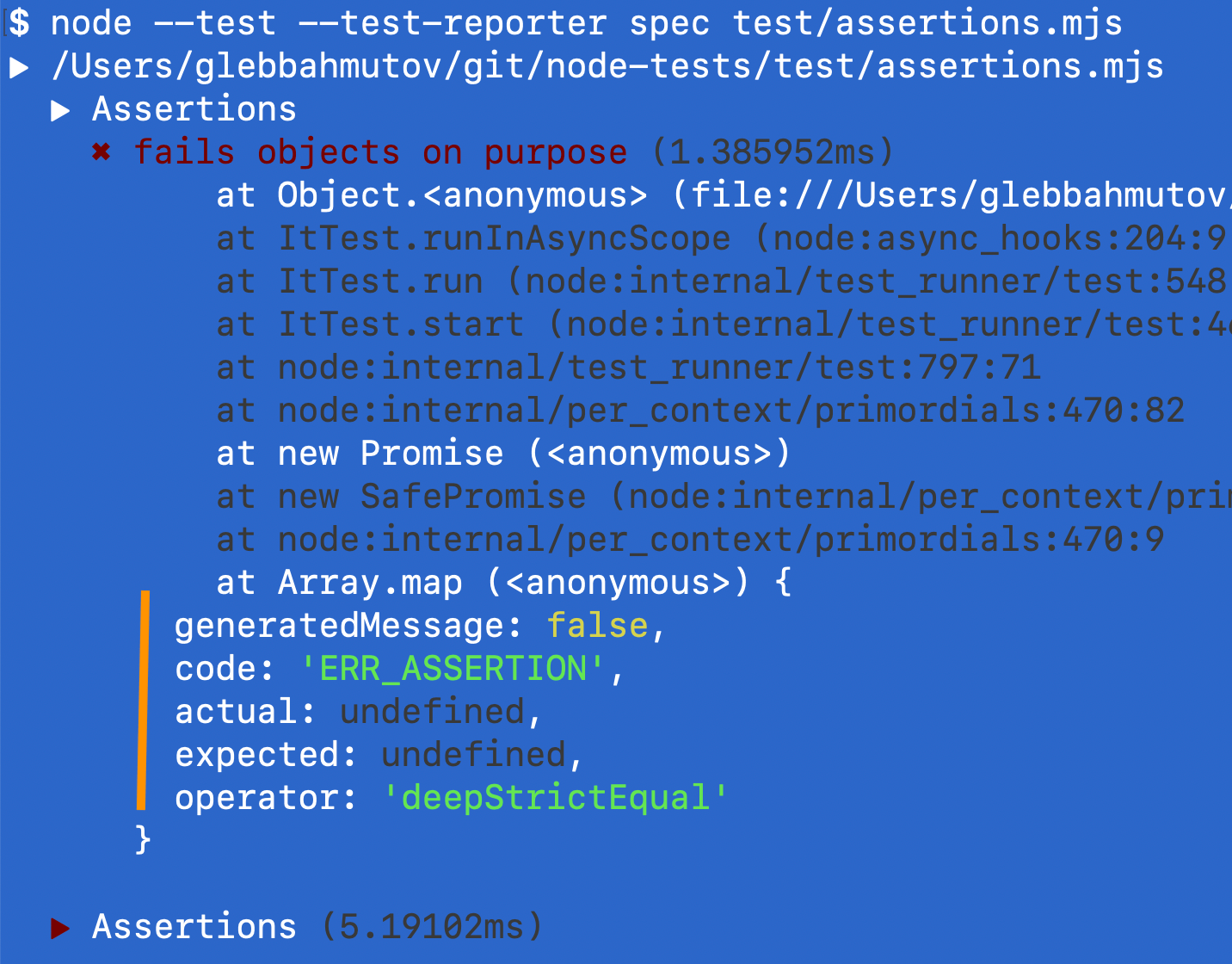

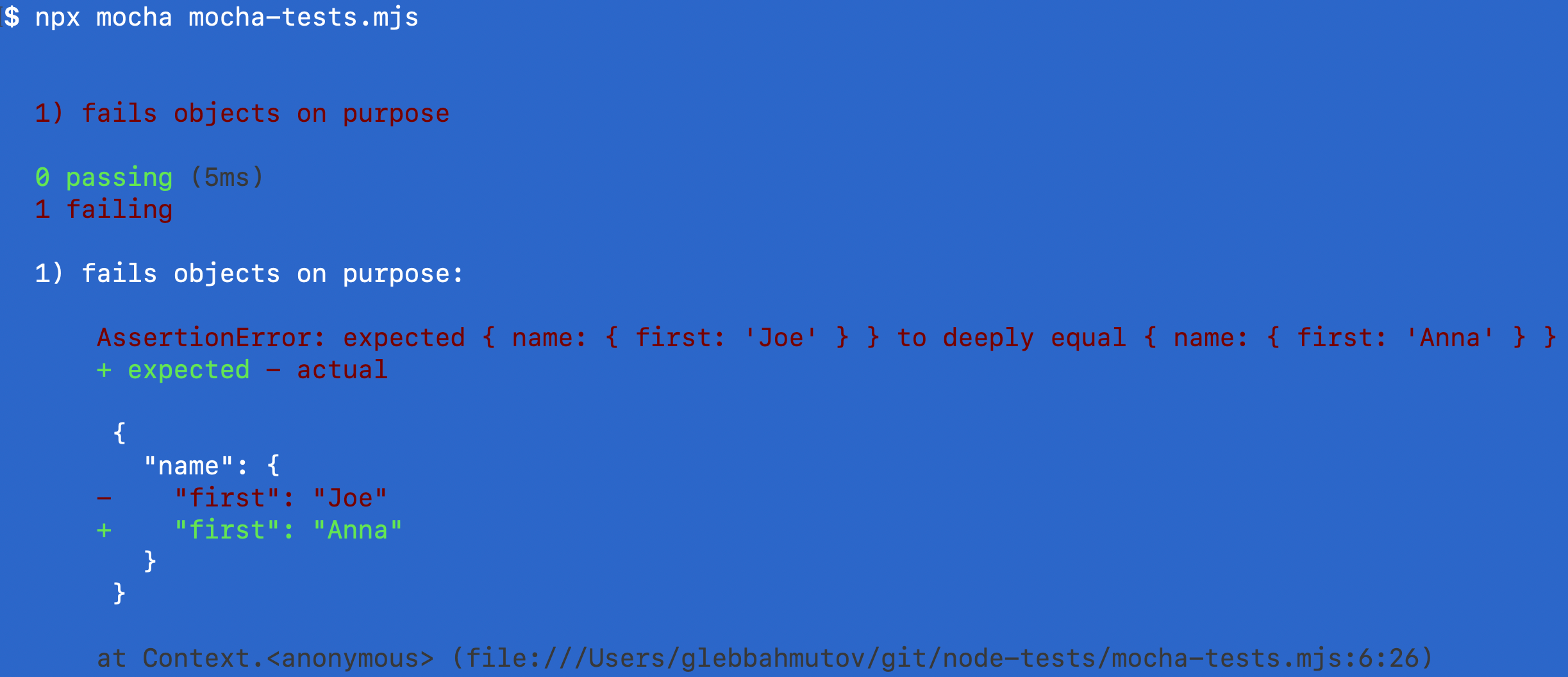

Now let's compare two objects that have a different nested property

it('fails objects on purpose', () => {

const person = { name: { first: 'Joe' } }

assert.deepEqual(person,

{ name: { first: 'Anna' } }, 'people')

})

The terminal error is not terribly helpful in this case

The object difference error

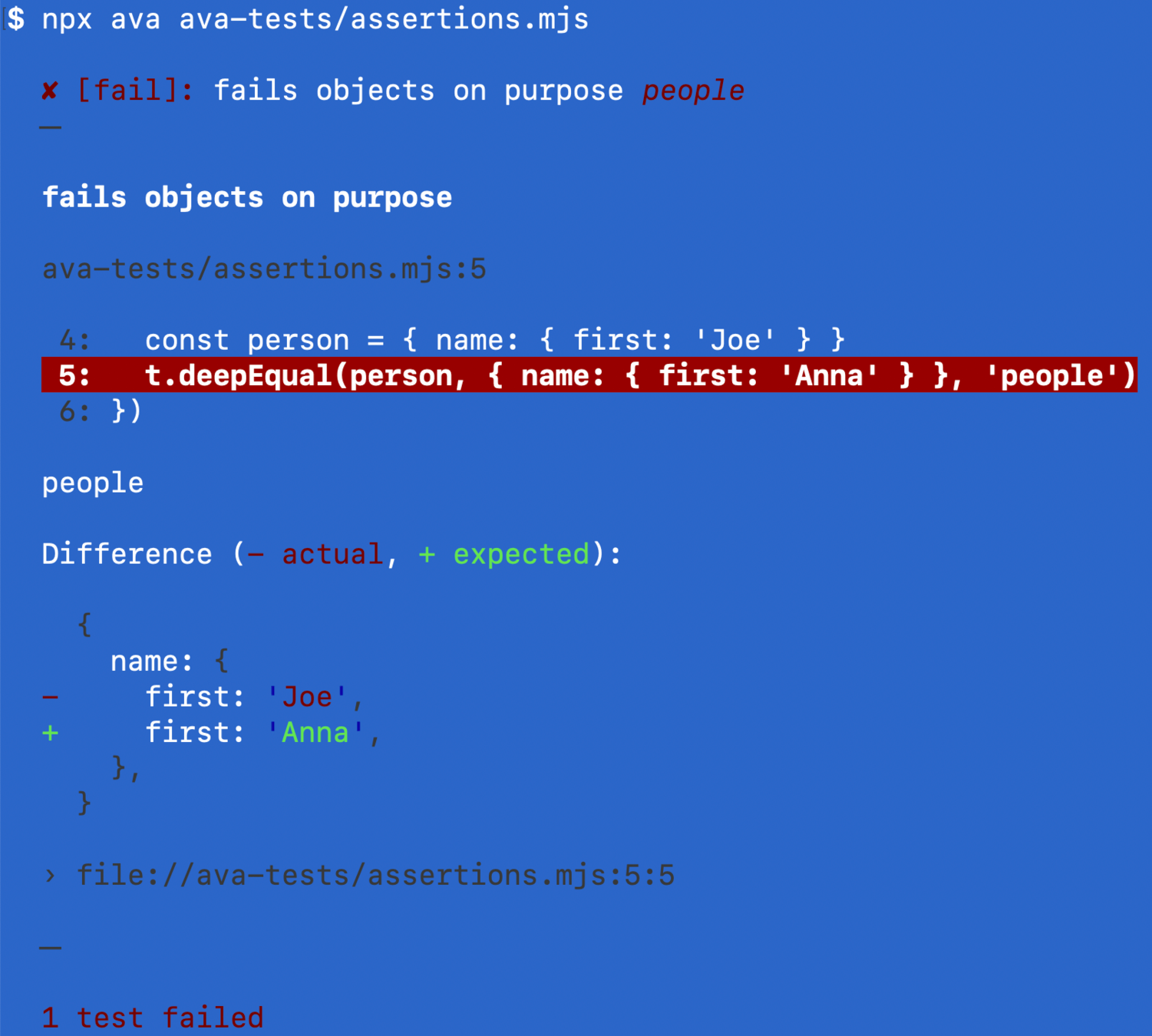

Let's take the same test and use Ava test runner and see the error message

import test from 'ava'

test('fails objects on purpose', (t) => {

const person = { name: { first: 'Joe' } }

t.deepEqual(person,

{ name: { first: 'Anna' } }, 'people')

})

Ava test runner has detailed error messages

Ava shows great errors and the string difference when comparing two strings

import test from 'ava'

test('passes with primitives', (t) => {

t.is('Hello', 'Helloz', 'greeting check')

})

Ava test runner shows color-coded string differences

Mocha test runner + Chai assertion library shows object differences intelligently

import { it } from 'mocha'

import { expect } from 'chai'

it('fails objects on purpose', () => {

const person = { name: { first: 'Joe' } }

expect(person).to.deep.equal(

{ name: { first: 'Anna' } })

})

Chai assertion shows good object difference

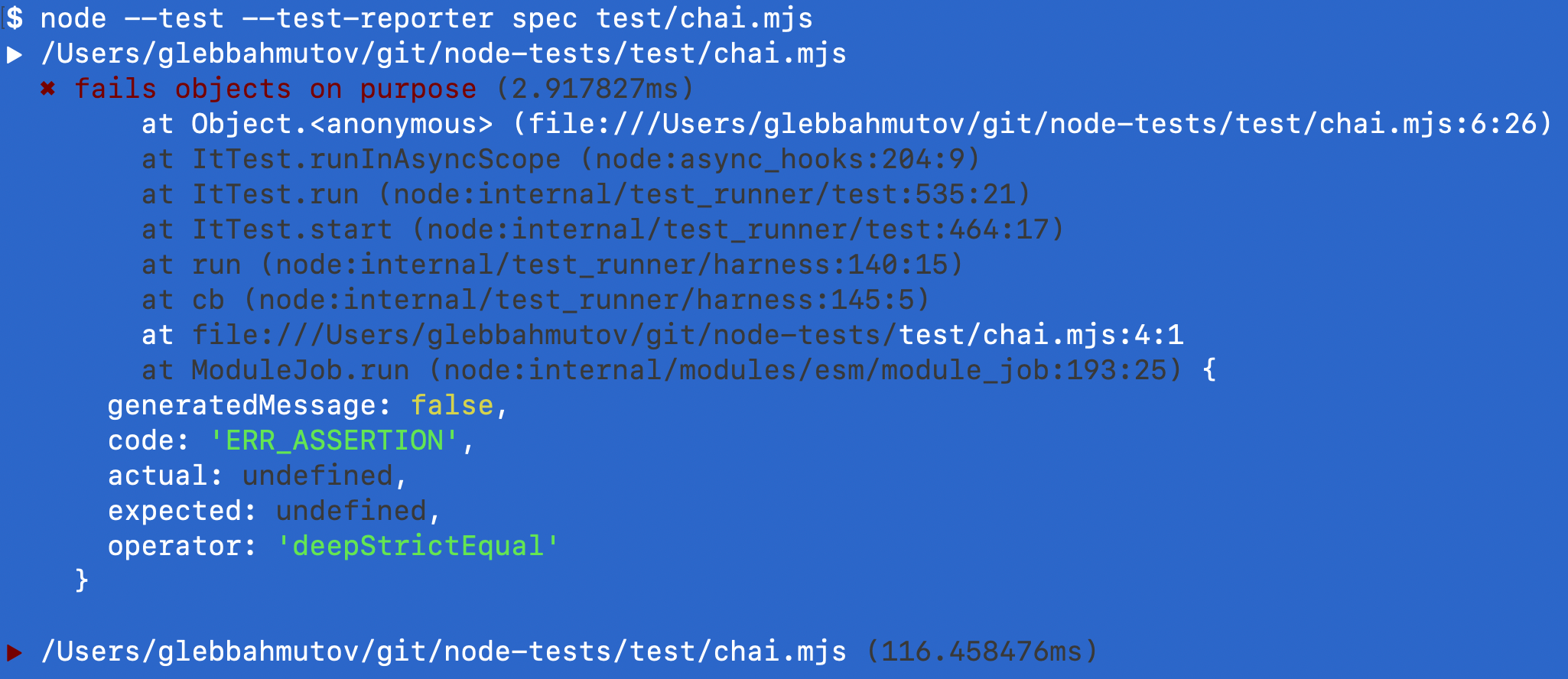

You can use Chai assertions with node:test but the error is still incomplete and is missing any useful information

import { it } from 'node:test'

import { expect } from 'chai'

it('fails objects on purpose', () => {

const person = { name: { first: 'Joe' } }

expect(person).to.deep.equal({ name: { first: 'Anna' } })

})

node:test plus Chai object assertion

I think the assertion messages are the weakest link in node:test today compared to other test runners.

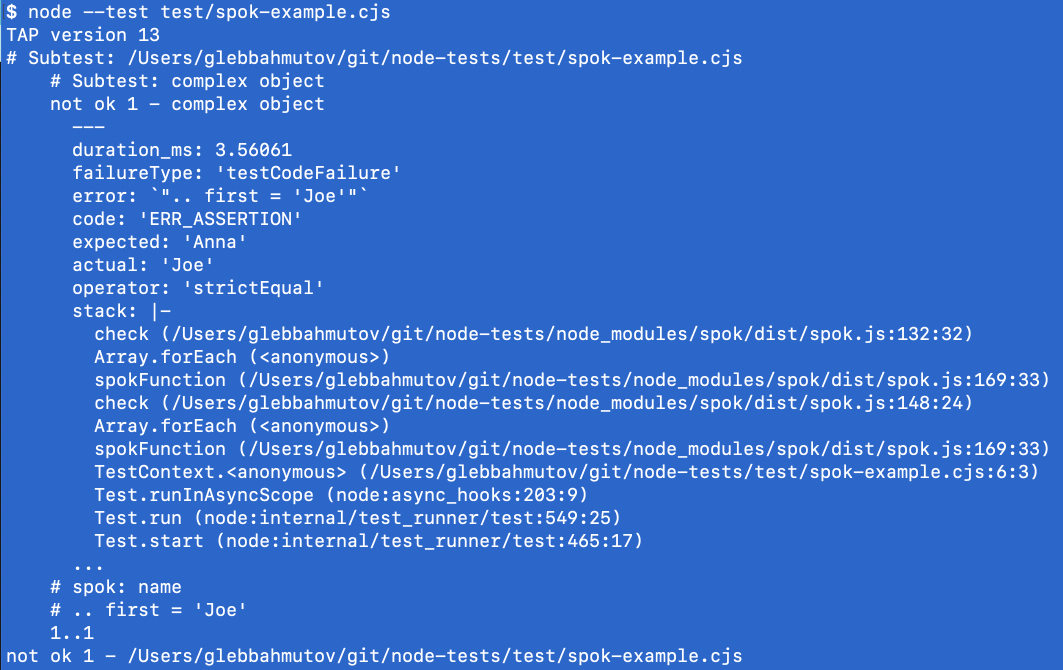

spok assertions

My favorite assertion library for checking objects is spok. It now works with node:test

const test = require('node:test')

const spok = require('spok').default

test('complex object', (t) => {

const person = { name: { first: 'Joe' } }

spok(t, person, { name: { first: 'Anna' } })

})

The failed assertion is less than ideal, but does show passing and failing predicates

node:test plus spok object assertion

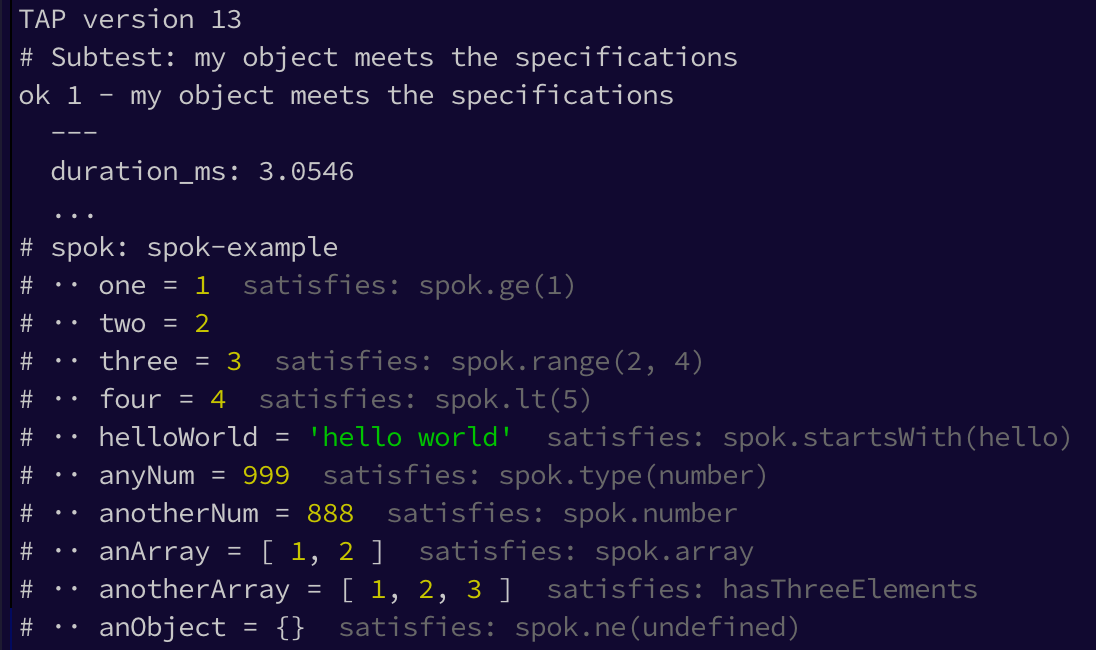

Tip: I love spok because it allows comparing the values and types and general predicates for each property in large objects. Here is a typical test and its terminal output.

// https://github.com/thlorenz/spok

test('my object meets the specifications', (t) => {

spok(t, object, {

$topic : 'spok-example'

, one : spok.ge(1)

, two : 2

, three : spok.range(2, 4)

, four : spok.lt(5)

, helloWorld : spok.startsWith('hello')

, anyNum : spok.type('number')

, anotherNum : spok.number

, anArray : spok.array

, anotherArray : hasThreeElements

, anObject : spok.ne(undefined)

})

})

spok assertion library output

This is why I constantly use spok in my Cypress tests via cy-spok plugin.

Test filtering #

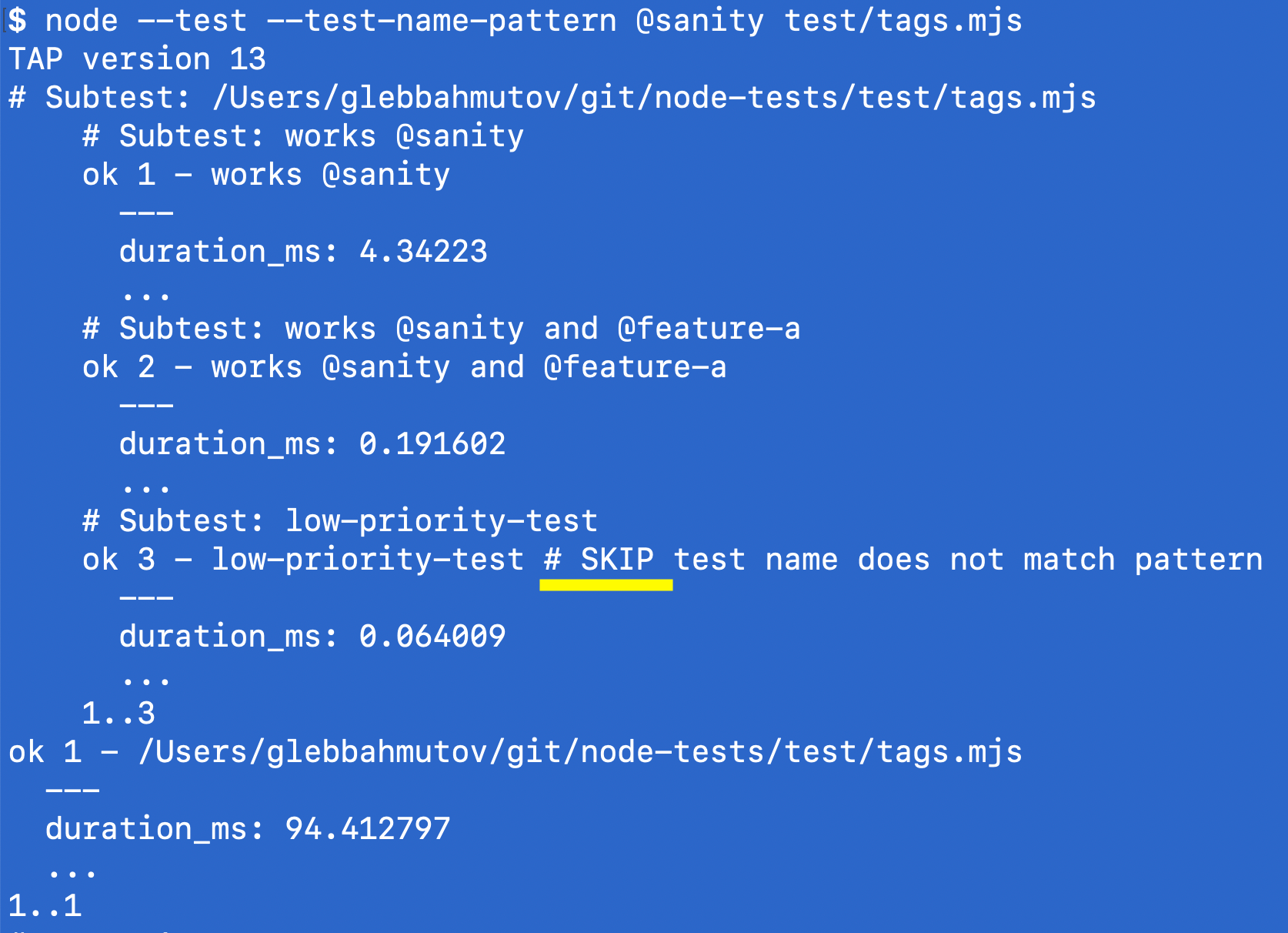

You can pick the test to run by part of its title using --test-name-pattern argument, skipping all other tests. If you have three tests with these names:

it('works @sanity', () => {

assert.equal(1, 1)

})

it('works @sanity and @feature-a', () => {

assert.equal(2, 2)

})

it('low-priority-test', () => {

assert.equal(3, 3)

})

Then you can run the two tests with the string @sanity in their titles using

# run tests with "@sanity" in the title

$ node --test --test-name-pattern @sanity

It is a little unclear which tests were skipped. All files are reported; there is no "pre-filtering" of specs

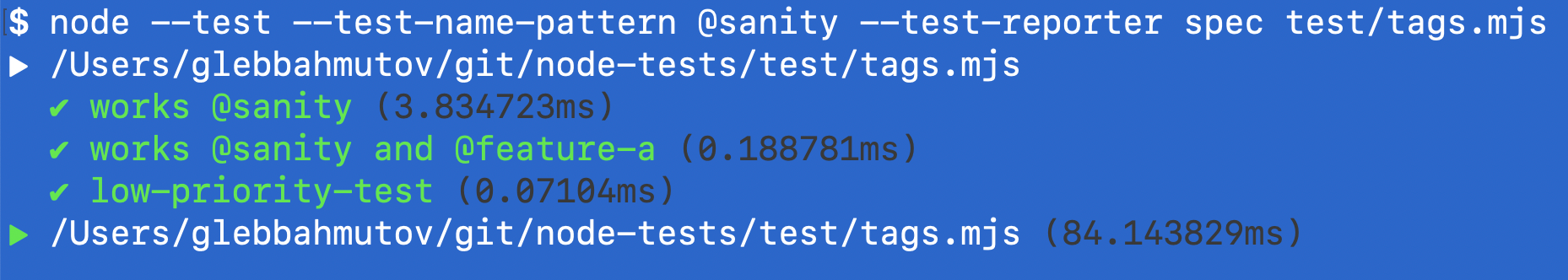

Filtering tests by the title text

For example, if we use the spec test reporter, it just reports all the tests, without any indication that some of the tests were skipped

No sign that some tests were filtered when using the spec reporter

You can run tests with titles matching one of several variants by repeating the --test-name-pattern argument.

# run tests with "@sanity" or "@feature"

$ node --test --test-name-pattern @sanity \

--test-name-pattern @feature
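Each --test-name-pattern value is treated as a regular expression, so the selection can be pictured as "run a test if its title matches any of the patterns". A simplified sketch of that idea, not the runner's actual code; matchesAnyPattern is a hypothetical helper, and the titles are the three from the example above. It is written as CommonJS so it runs as a plain .js file.

```javascript
// Hypothetical helper: true if the title matches at least one pattern.
function matchesAnyPattern(title, patterns) {
  return patterns.some((pattern) => new RegExp(pattern).test(title))
}

const titles = [
  'works @sanity',
  'works @sanity and @feature-a',
  'low-priority-test',
]
const patterns = ['@sanity', '@feature']
const selected = titles.filter((title) => matchesAnyPattern(title, patterns))
console.log(selected)
```

Here the first two titles are selected and low-priority-test is left out, which mirrors what the two repeated flags do on the command line.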

Continuous integration #

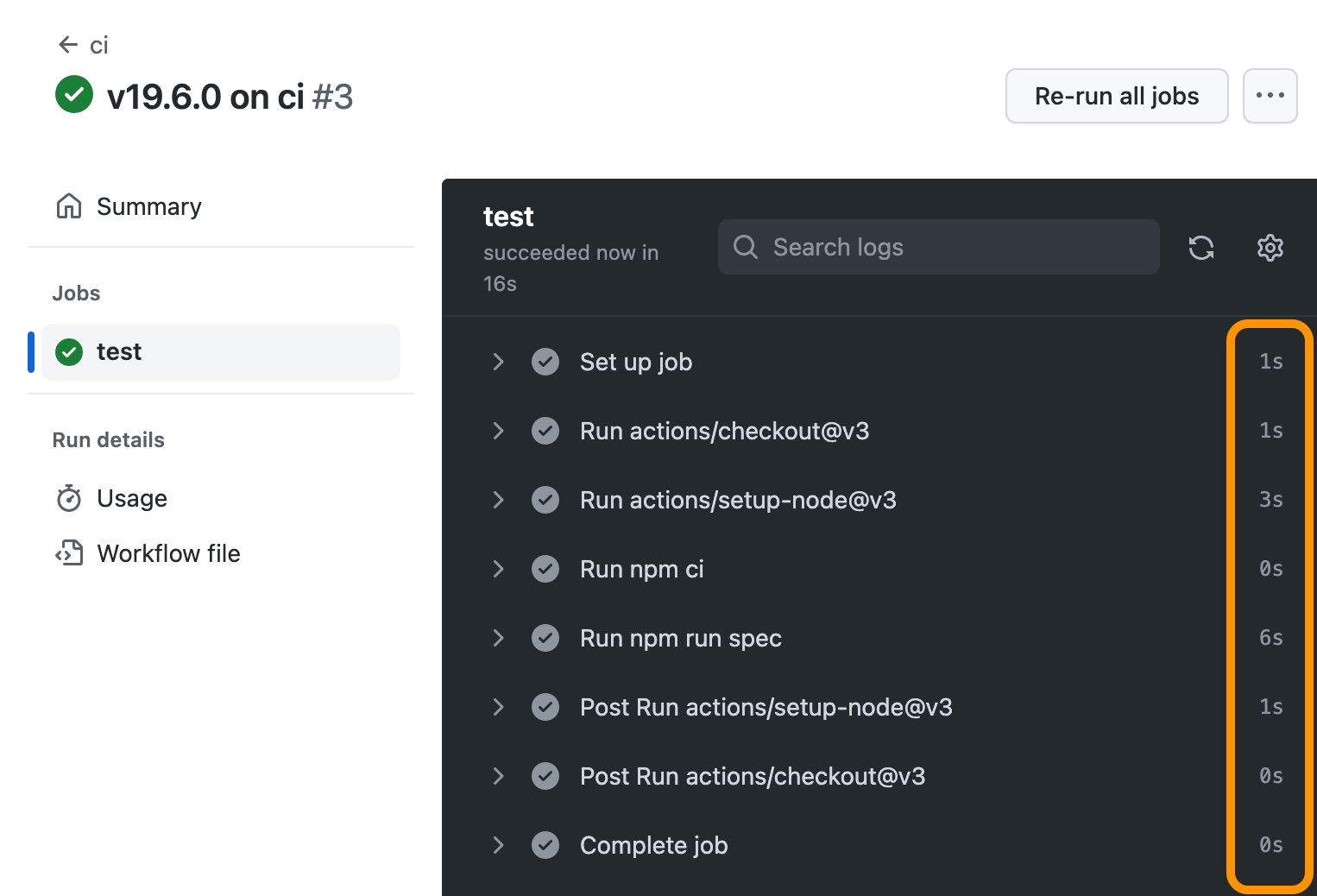

Running node:test on CI is really simple - there is nothing to install, just need to use the right Node version. If you are using GitHub Actions the simplest workflow could be:

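name: ci
on: push
jobs:
  test:
    name: test
    # assumption: any GitHub-hosted runner works; ubuntu-latest is a common choice
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v3
      # https://github.com/actions/setup-node
      - uses: actions/setup-node@v3
        with:
          node-version: 19.6.0
          cache: 'npm'
      - run: npm ci
      - run: npm run spec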

The node tests run very quickly on GitHub Actions

Spies and stubs #

Spying on method calls and changing their behavior during tests is an important feature to have for any testing system. For example, if we want to change the return of the method person.name() we would stub it in our test

const person = {

name () {

return 'Joe'

}

}

stub(person, 'name').return('Anna')

Sinon.js is, in my opinion, the most popular and powerful JavaScript library for spying and mocking methods. The new node:test module includes a spying and stubbing API that is pretty good.

import { it, mock } from 'node:test'

import assert from 'node:assert/strict'

it('returns name', () => {

const person = {

name() {

return 'Joe'

},

}

// the real behavior

assert.equal(person.name(), 'Joe')

// mock the method "person.name"

mock.method(person, 'name', () => 'Anna')

assert.equal(person.name(), 'Anna')

// confirm the method calls

assert.equal(person.name.mock.calls.length, 1)

// restore the original method

person.name.mock.restore()

assert.equal(person.name(), 'Joe')

})

The API is powerful, but the assertions are pretty verbose and it is harder to write method stubs that resolve asynchronously. It is a long way from the Sinon.js + Chai-Sinon combination.

Mocking ESM modules

If you need to mock ES6 import and export directives, you will need to bring in a separate module loader. Let's take an example with 3 files: math.mjs, calculator.mjs, and the spec file.

// math.mjs

export const add = (a, b) => {

console.log('adding %d to %d', a, b)

return a + b

}

// calculator.mjs

import { add } from './math.mjs'

export const calculate = (op, a, b) => {

return op === '+' ? add(a, b) : NaN

}

// the spec file

import { it } from 'node:test'

import assert from 'node:assert/strict'

import { calculate } from './calculator.mjs'

it('adds two numbers', () => {

assert.equal(calculate('+', 2, 3), 5)

})

By default, the test calls calculate, which calls the real add function from math.mjs. If you want to mock the exported add function as calculator.mjs sees it, then you need to bring in something like the esmock loader

// the spec file

import { it } from 'node:test'

import assert from 'node:assert/strict'

import esmock from 'esmock'

it('adds two numbers (mocks add)', async () => {

const { calculate } = await esmock('./calculator.mjs', {

'./math.mjs': {

add: () => 20,

},

})

assert.equal(calculate('+', 2, 3), 20)

})

Notice that we now import the calculator.mjs module inside the test to be able to change its behavior. We can even construct the math.mjs add export using the node:test mocks

import { it, mock } from 'node:test'

import assert from 'node:assert/strict'

import esmock from 'esmock'

it('adds two numbers (confirm call)', async () => {

const add = mock.fn(() => 20)

const { calculate } = await esmock('./calculator.mjs', {

'./math.mjs': {

add,

},

})

assert.equal(calculate('+', 2, 3), 20)

assert.deepEqual(add.mock.calls[0].arguments, [2, 3])

})

TypeScript #

To write specs using TypeScript or unit test TS files, node:test relies on 3rd party source file loaders, like ts-node.

{

"scripts": {

"ts-test": "node --test --loader ts-node/esm test/**/*.ts"

}

}

Let's write a TS spec

import { it } from 'node:test'

import assert from 'node:assert/strict'

type Person = {

name: string

}

it('subtest 1', () => {

console.log('testing the person')

const p: Person = {

name: 'Joe',

}

assert.deepEqual(p, { name: 'Joe' })

})

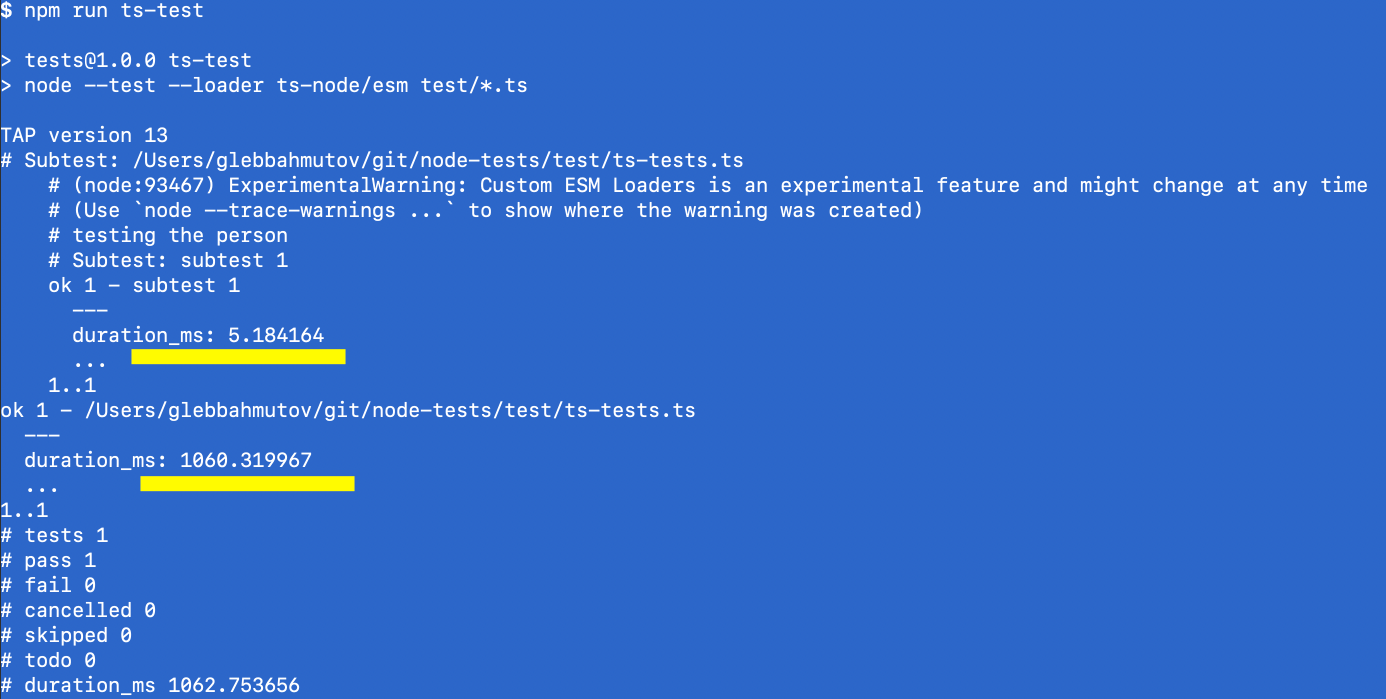

If you run the test, notice the duration. The test is very short: 5ms, but the total test run takes 1 second.

TypeScript tests are much slower

Loading and transpiling TypeScript code adds overhead. Without TS, the same test would take 100ms total.

Miscellaneous features #

Timeouts

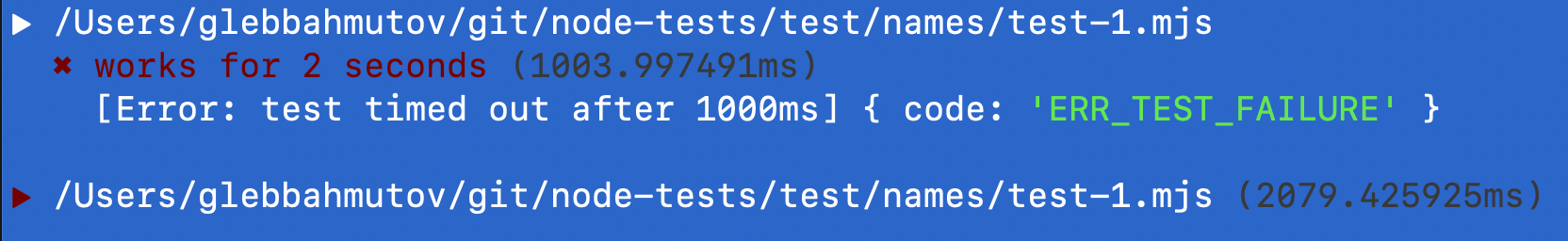

You can specify a timeout limit for each test

it('works for 2 seconds', { timeout: 1000 }, async () => {

await delay(2000)

})

Test timeout of 1 second exceeded

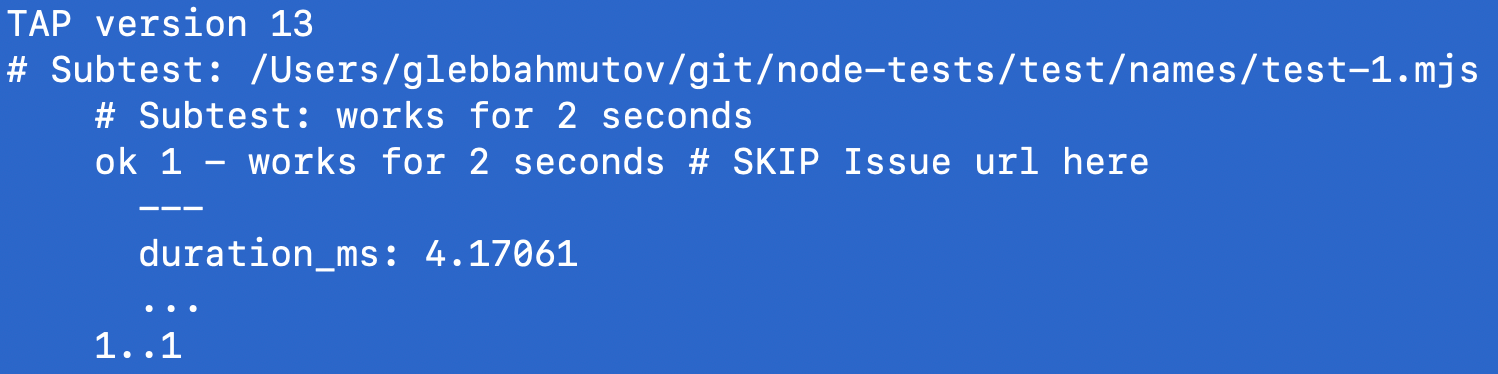

Named skip

You can give a reason for a test to be skipped.

// instead of this:

// SKIP: reason url

it('works for 2 seconds', ...)

// do this

it('works for 2 seconds', { skip: 'Issue url here' }, ...)

Shows reason for the skipped test

Debugging

I used VSCode to run the tests using the following launch configuration

{

"version": "0.2.0",

"configurations": [

{

"type": "node",

"request": "launch",

"name": "Unit test",

"skipFiles": ["<node_internals>/**"],

"program": "${file}",

"args": ["--test"]

}

]

}

I could then launch the debugging session and step through the tests and the code

Pause inside the test

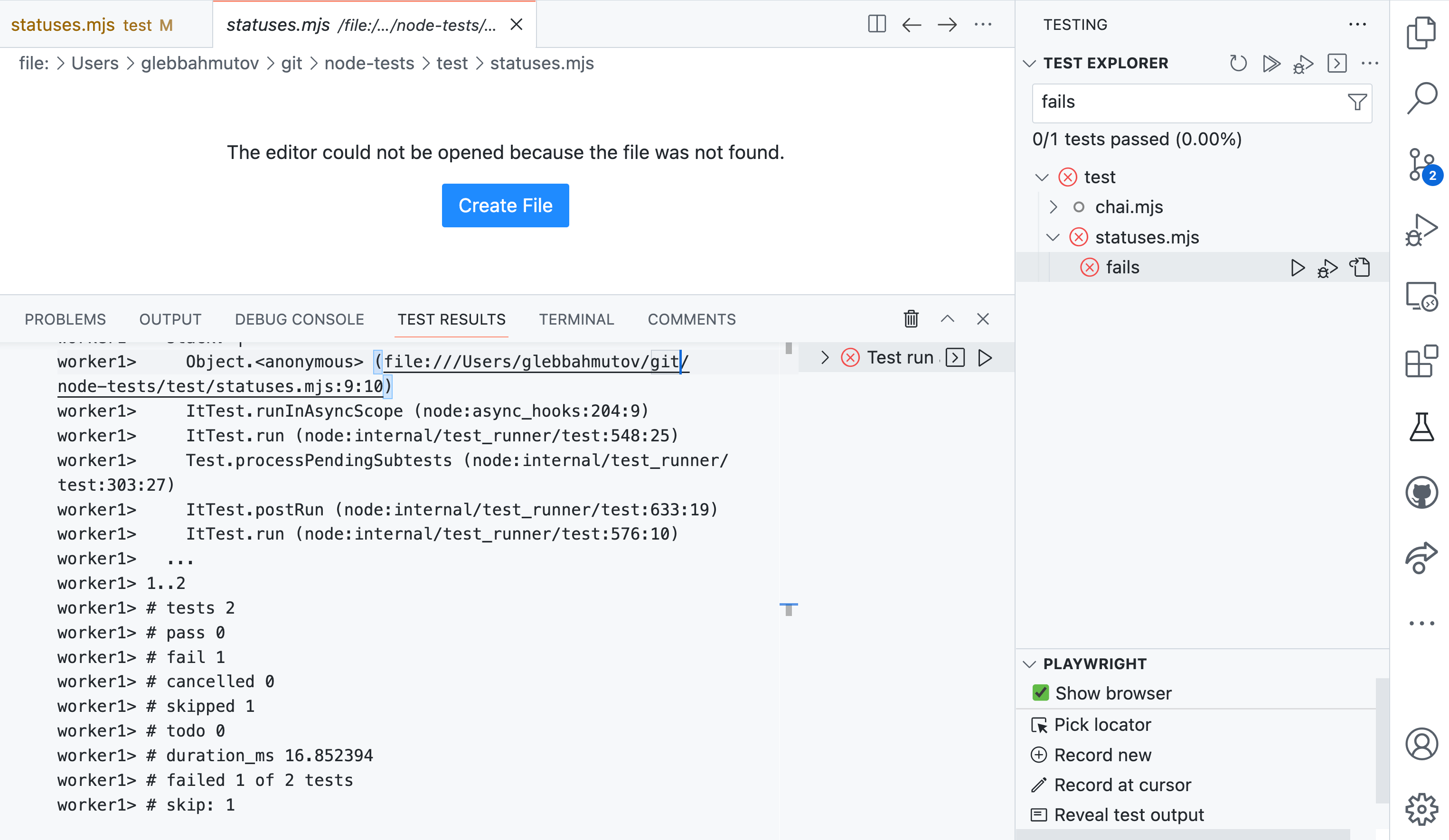

But the test output was ... sub-optimal. I could not even see any error messages from the failed assertions. I tried node:test runner VSCode extension and could get some output from the spec file and summary, but I must say it was underwhelming.

VSCode node test extension

Only test #

A weird feature that seems to be a poor substitute for test tags

it('works for 2 seconds', { only: true }, async () => {

await delay(2000)

})

it('fails', () => {

throw new Error('Nope')

})

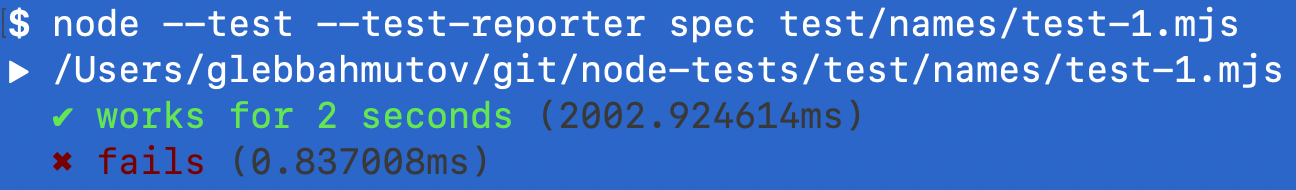

By default both tests run.

Both tests execute

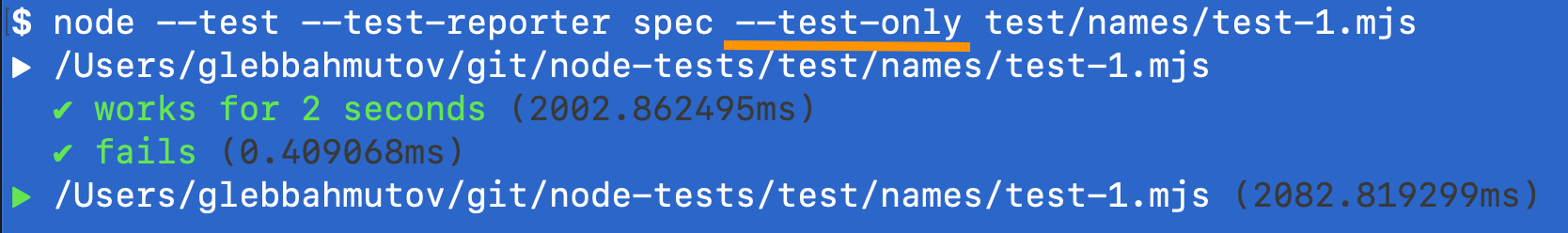

If you run tests with --test-only flag, then the second test without only: true option is skipped (silently)

Only the tests with only: true option execute

I would prefer to have it.only to run exclusive tests.

Done callback

You can call the callback argument, usually named done, to signal the end of the test

it('succeeds', (done) => {

setTimeout(done, 1000)

})

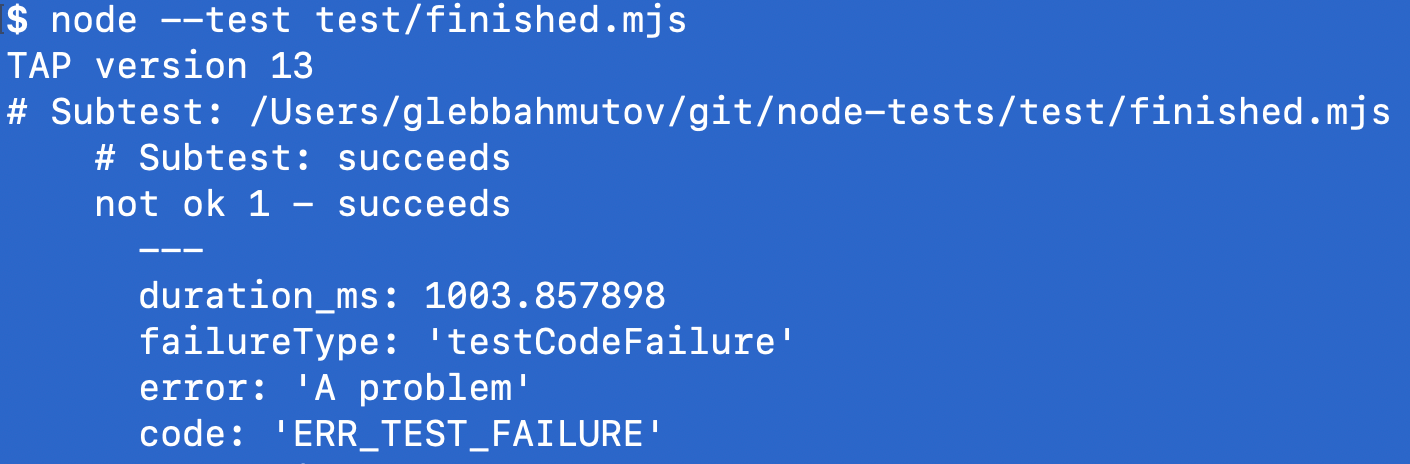

If you call done with an Error object, the test fails.

it('succeeds', (done) => {

setTimeout(() => {

done(new Error('A problem'))

}, 1000)

})

Fail the test by calling done with an error argument

Dynamic tests #

You can generate new tests while the tests are running. For example, you can fetch data and for each returned item generate its own test.

import test from 'node:test'

import assert from 'node:assert/strict'

async function fetchTestData() {

return new Promise((resolve) => {

setTimeout(() => {

resolve(['first', 'second', 'third'])

}, 1000)

})

}

test('generated', async (t) => {

const items = await fetchTestData()

for (const item of items) {

await t.test(`test ${item}`, (t) => {

assert.strictEqual(1, 1)

})

}

})

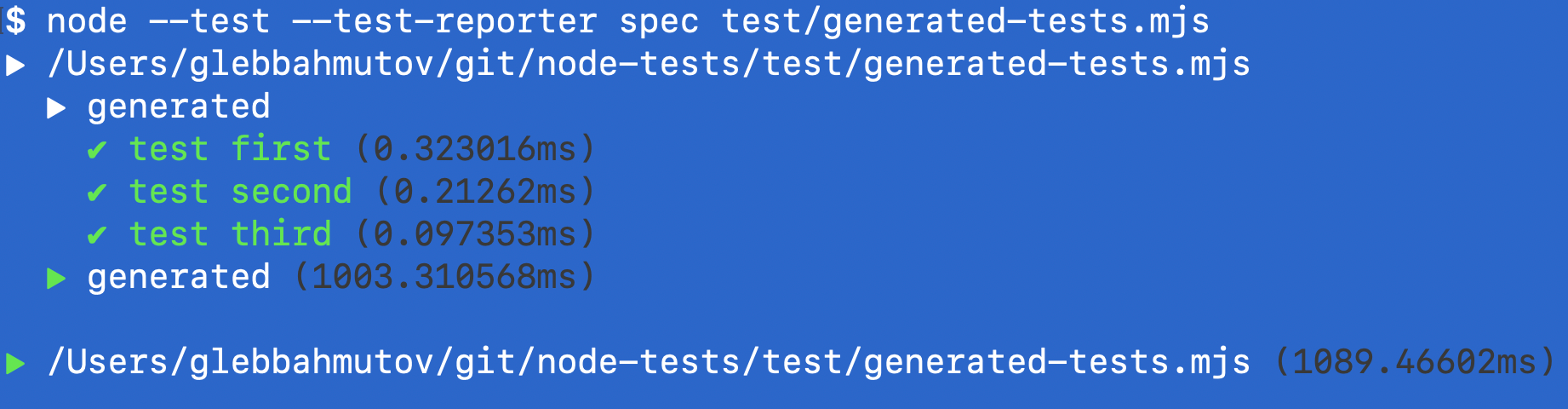

Generates three tests from three items

Code coverage #

The node test runner can and will benefit from the built-in code coverage added to Node. Imagine testing math functions again

// math.mjs

export const add = (a, b) => {

console.log('adding %d to %d', a, b)

return a + b

}

export const sub = (a, b) => {

console.log('%d - %d', a, b)

return a - b

}

// spec file

import { it } from 'node:test'

import assert from 'node:assert/strict'

import { calculate } from './calculator.mjs'

it('adds two numbers', () => {

assert.strictEqual(calculate('+', 2, 3), 5, '2+3')

})

If you run the tests with the --experimental-test-coverage command line flag, the test summary includes the line coverage numbers

Missing features #

Here are a few features that are present in other test runners, but not in node:test

- the number of planned assertions like Ava's t.plan(2)

- mocking clock and timers like Jest's jest.useFakeTimers()

- exit on first failure like deno test --fail-fast

- expect a failure like in this Ava example

test.failing('found a bug', t => {

// Test will count as passed

t.fail()

})

Summary #

| Feature | Mocha | Ava | Jest | Node.js TR | My rating |
|---|---|---|---|---|---|
| Included with Node | 🚫 | 🚫 | 🚫 | ✅ | 🎉 |
| Watch mode | ✅ | ✅ | ✅ | ✅ | 🎉 |
| Reporters | lots | via TAP | lots | via TAP | |
| Assertions | via Chai ✅ | ✅ | ✅ | weak | 😑 |
| Snapshots | 🚫 | ✅ | ✅ | 🚫 | |
| Hooks | ✅ | ✅ | ✅ | ✅ | |
| grep support | ✅ | ✅ | ✅ | ✅ | |
| spy and stub | via Sinon ✅ | via Sinon ✅ | ✅✅ | ✅ | |
| parallel execution | ✅ | ✅ | ✅ | ✅ | |
| code coverage | via nyc | via c8 | ✅ | ✅ | 👍 |
| TS support | via ts-node | via ts-node | via ts-jest | via ts-node | 🐢 |

In general, I would recommend:

- trying node --test on new smaller projects you might start

- do not port any existing projects with already existing tests

- re-evaluate in 6 months because node:test is evolving fast

Happy node --testing!
